Promise.all, allSettled, race & any

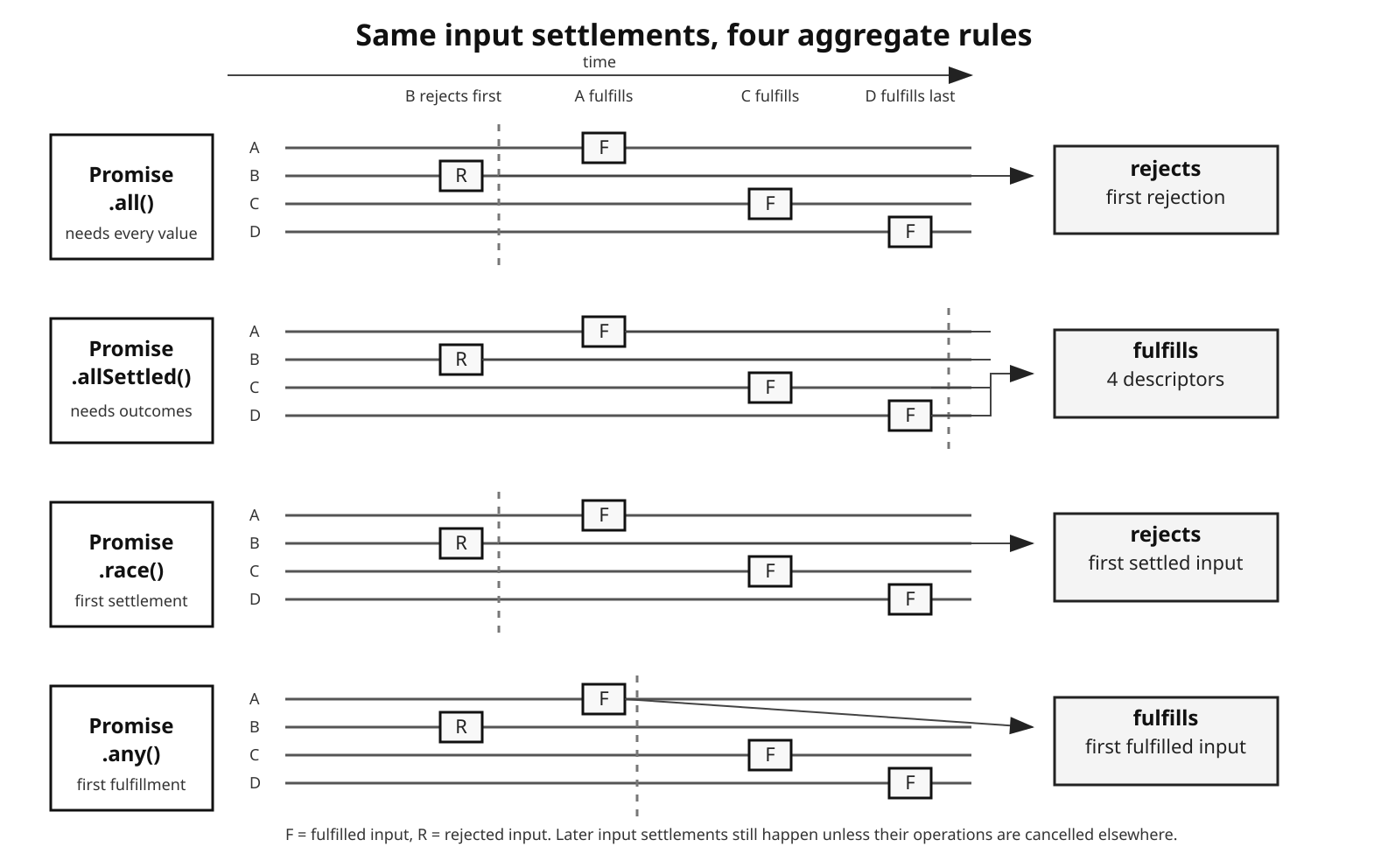

Promise combinators turn several inputs into one promise. The differences between them are small on the surface, but each one answers a different question. Promise.all() asks whether every input fulfilled. Promise.allSettled() waits until every input has either fulfilled or rejected. Promise.race() follows the first settlement of any kind. Promise.any() follows the first fulfillment and reports the rejection set only when no input fulfills.

Promise Combinators

The combinators coordinate results after work has already been represented as promises. They do not make the work lazy, cap concurrency, retry failures, or cancel operations that are no longer useful. Those responsibilities stay with the code that creates and manages the promises around them.

That split affects resource ownership because many promise-returning operations in this book are wrappers around resources below JavaScript: sockets, file descriptors, timers, stream buffers, database connections, child processes, and libuv requests. The aggregate promise can settle while those resources keep doing work. Good combinator code therefore keeps three questions separate:

- When does each operation start?

- Which result or failure should settle the aggregate promise?

- What should happen to work that is no longer needed?

The API names are compact, but the consequences are not. Most production bugs around these APIs come from mixing those three questions together.

Figure 1 — Read the rows as all(), allSettled(), race(), and any(): the same inputs produce different aggregate timing depending on whether the rule waits for all inputs, every settlement, the first settlement, or the first fulfillment.

Promise.all()

Promise.all() takes an iterable and returns a promise for an array of fulfillment values. It fulfills only when every input fulfills. If any input rejects first, the aggregate promise rejects with that rejection reason.

const [user, posts, settings] = await Promise.all([

fetchUser(id),

fetchPosts(id),

fetchSettings(id)

]);

The three function calls happen before Promise.all() receives the array. If they start HTTP requests, the requests are already in flight by the time the combinator attaches its handlers. From that point on, completion order affects only timing. The result array still follows input order, so if fetchPosts(id) finishes first, its value lands in the posts slot because that promise was at index 1.

The same attachment step gives Promise.all() its fail-fast behavior: the aggregate promise rejects as soon as the first input rejects.

That fast rejection does not cancel the other inputs. If fetchPosts(id) rejects after 50ms and fetchUser(id) fulfills after 200ms, the aggregate promise has already rejected by the time fetchUser(id) finishes. The later fulfillment cannot change the aggregate result, but the request still ran, its promise still settled, and the handler attached by Promise.all() still observed it.

Later rejections from other inputs therefore do not usually become unhandledRejection events; the combinator attached rejection handlers to every input during the initial iteration. The diagnostic loss is different. The aggregate exposes only the first rejection reason. If three of five database calls fail, the caller sees one failure unless the code collects the others separately.
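The fail-fast behavior and the lost diagnostics can be observed with timers standing in for real requests. This is a minimal sketch: settleAfter() and demoFailFast() are hypothetical names, and the delays are arbitrary.

```javascript
// Hypothetical helper: settles after `ms`, fulfilling with `label` or rejecting.
function settleAfter(ms, label, shouldReject = false) {
  return new Promise((resolve, reject) => {
    setTimeout(() => {
      if (shouldReject) reject(new Error(`${label} failed`));
      else resolve(label);
    }, ms);
  });
}

async function demoFailFast() {
  const log = [];
  const slow = settleAfter(40, "user").then(value => {
    log.push(`late fulfillment: ${value}`); // still runs after the aggregate rejected
    return value;
  });
  const fast = settleAfter(10, "posts", true);
  const alsoFails = settleAfter(20, "settings", true); // observed, but never surfaced

  try {
    await Promise.all([slow, fast, alsoFails]);
  } catch (err) {
    log.push(`aggregate rejected: ${err.message}`); // only the first reason
  }

  await slow; // wait so the late fulfillment shows up in the log
  return log;
}
```

The second rejection produces no unhandledRejection event because Promise.all() attached a handler to it, but its reason never reaches the caller.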

An empty iterable returns an already-fulfilled promise:

const empty = Promise.all([]);

Its state is fulfilled with [] immediately, but observers still run asynchronously. A .then() handler attached to empty, or an await empty, resumes through the promise job queue after the current synchronous stack finishes. "Already fulfilled" describes the promise state. "Asynchronous" describes observer execution.
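A short sketch makes the distinction visible by recording the order in which code runs. The function name demoEmptyAll() is illustrative.

```javascript
function demoEmptyAll() {
  const order = [];
  const empty = Promise.all([]); // already fulfilled with []

  const done = empty.then(values => {
    order.push(`then: ${JSON.stringify(values)}`);
    return order;
  });

  // This line runs first even though the promise was fulfilled before then() was called.
  order.push("sync code after then()");
  return done;
}
```

The returned promise fulfills with the synchronous entry first and the handler entry second.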

Non-promise values are accepted. Each value goes through promise resolution, so this works:

const [count, response, label] = await Promise.all([

3,

fetch("https://api.example.com/user"),

"primary"

]);

The plain values go through the same ordering path as promises. They do not make handlers run inline.

File fan-out is the common Node version:

import { readFile } from "node:fs/promises";

const contents = await Promise.all(

files.map(file => readFile(file, "utf8"))

);

This starts all reads before waiting for the aggregate result. If any read rejects, the aggregate rejects, but the other reads keep running because Promise.all() does not own the filesystem requests.

That behavior is the right fit when every result is required and one failure invalidates the whole operation: loading required configuration files, running independent queries that all feed one response, or fetching resources where partial output would be wrong.

The internal ordering model is simple enough to rely on. The iterable is consumed synchronously. For each element, the combinator records an index and attaches fulfillment and rejection reactions. Each fulfillment stores its value at that index. The aggregate fulfills only after all input fulfillments have arrived, so completion order changes timing, not array positions.

Promise.allSettled()

Where Promise.all() stops at the first rejection, Promise.allSettled() waits until every input promise has settled and then fulfills with settlement descriptors.

const results = await Promise.allSettled([

fetchUser(id),

fetchPosts(id),

fetchSettings(id)

]);

Each descriptor has one of two shapes, so the first thing consuming code does is branch on status:

for (const result of results) {

if (result.status === "fulfilled") handleData(result.value);

else logError(result.reason);

}

An input rejection is recorded as data instead of becoming the aggregate rejection. Assuming the iterable itself is consumed successfully, allSettled() fulfills with one descriptor per input. That narrower wording is important because iterator failures are still failures of the combinator call. If the input iterable throws while being read, or the iterator protocol itself fails, Promise.allSettled() rejects just like the other combinators.
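The difference between an input rejection and an iterator failure can be sketched with a generator that throws partway through. brokenInputs() and demoIteratorFailure() are hypothetical names used only for this illustration.

```javascript
// Hypothetical iterable: yields one rejected promise, then the iterator itself throws.
function* brokenInputs() {
  yield Promise.reject(new Error("input failed"));
  throw new Error("iterator failed");
}

async function demoIteratorFailure() {
  // An input rejection becomes a descriptor; the aggregate fulfills.
  const descriptors = await Promise.allSettled([
    Promise.reject(new Error("input failed"))
  ]);

  // An iterator failure rejects the allSettled() call itself.
  let aggregateReason;
  try {
    await Promise.allSettled(brokenInputs());
  } catch (err) {
    aggregateReason = err.message;
  }

  return { status: descriptors[0].status, aggregateReason };
}
```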

That makes it the partial-success combinator. Health checks, cache warming, batch cleanup, notification fan-out, and best-effort indexing jobs often need the complete outcome set. One failed input should not hide the state of the other inputs.

The descriptor format is also a guardrail. Destructuring the result array as plain values is a bug because the values are wrapped in outcome objects.

const fulfilled = results

.filter(result => result.status === "fulfilled")

.map(result => result.value);

That extracts the successes. Rejections need the same explicit handling:

const rejected = results.filter(result => result.status === "rejected");

if (rejected.length > 0) {

logger.warn(

`${rejected.length} operations failed`,

rejected.map(result => result.reason)

);

}

allSettled() moves failure policy from the runtime into your code. That is useful only when the descriptors are actually read. await Promise.allSettled(...) without checking the result is a deliberate decision to ignore every failure.

The word "settled" is precise promise vocabulary. A promise starts pending. It settles once, either fulfilled or rejected. allSettled() waits for every input to leave the pending state and records which transition happened.

Before allSettled() landed in ES2020, code used a reflect helper:

function reflect(promise) {

return promise.then(

value => ({ status: "fulfilled", value }),

reason => ({ status: "rejected", reason })

);

}

Then Promise.all(promises.map(reflect)) produced the descriptor array. That workaround expresses the same policy, but it is harder to audit than the built-in API and easier to get wrong under mixed fulfillment and rejection. The built-in also gives engines a specific path to optimize.

Promise.race()

Promise.race() moves from "all inputs" to "first settlement." A fulfillment wins if it arrives first. A rejection wins if it arrives first.

The usual timeout helper rejects after a delay:

function timeout(ms) {

return new Promise((_, reject) => {

setTimeout(() => reject(new Error("Timeout")), ms);

});

}

With that helper, a timeout race is just two promises:

const response = await Promise.race([

fetch("https://api.example.com/data"),

timeout(5000)

]);

If the fetch fulfills first, the race fulfills with the Response. If the timer rejects first, the race rejects with Timeout. In both cases, the losing promise keeps running. A Promise.race() timeout is only a timeout for the value being awaited; it is not cancellation for the underlying operation.

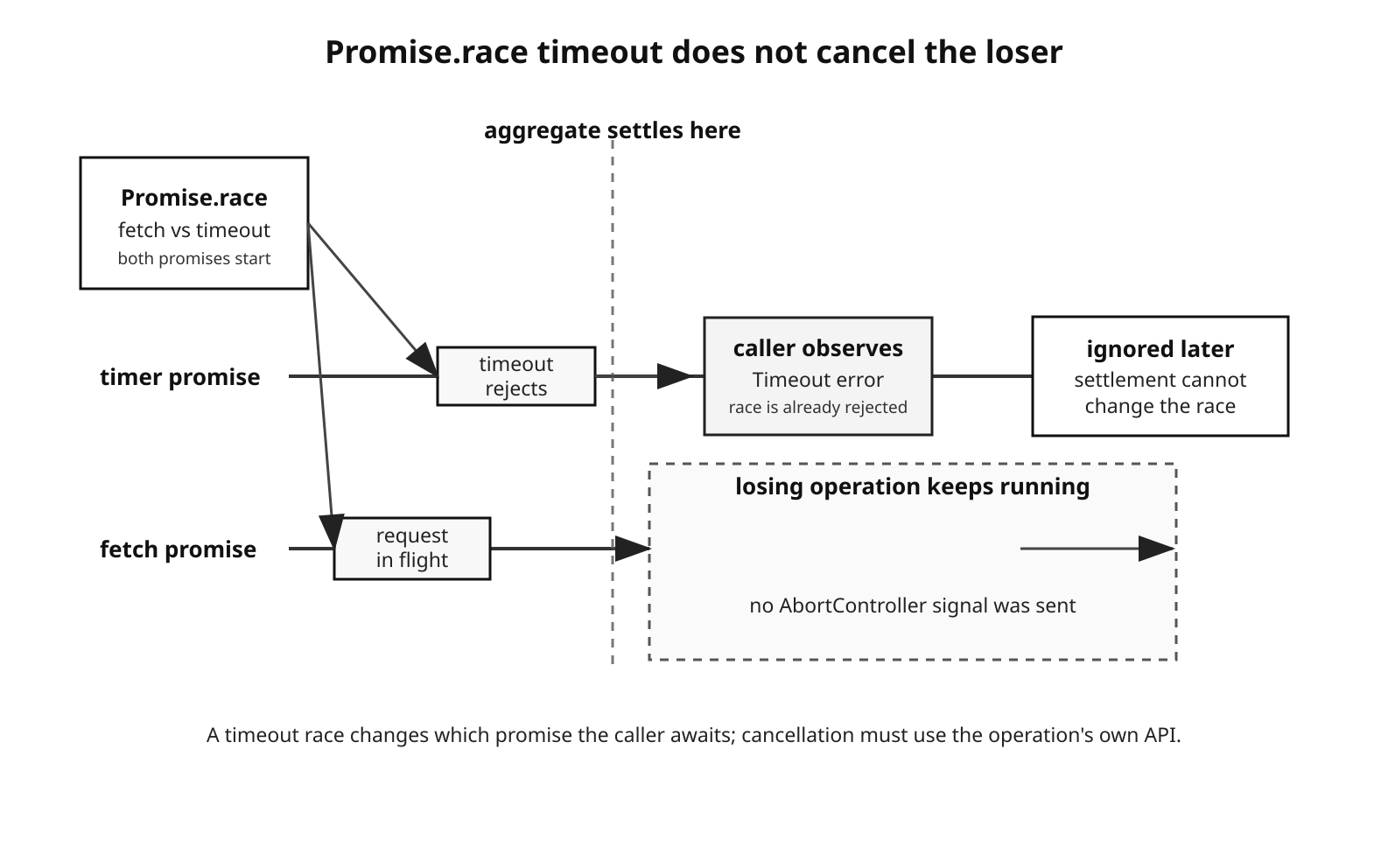

Figure 2 — A timeout race changes which promise the caller observes; it does not stop the losing operation unless that operation is cancelled through its own API.

That difference is where resource bugs start. If the timer wins, the HTTP request may still hold a socket, receive bytes, parse headers, and fulfill later. The returned response is ignored because the race is already settled. For short one-off calls that may be acceptable. In high-concurrency servers, repeated timeout races without cancellation can leave a lot of abandoned work in flight.
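The timer is a loser too: when the operation wins, the setTimeout() from the helper above keeps running until it fires. One possible shape, sketched under the assumption that the caller only needs the timer cleaned up, clears it once the race settles. withTimeout() is a hypothetical name, and this still does nothing to stop the losing operation.

```javascript
// Sketch: race `promise` against a timer, then clear the timer once the race settles.
function withTimeout(promise, ms) {
  let timer;
  const timedOut = new Promise((_, reject) => {
    timer = setTimeout(() => reject(new Error("Timeout")), ms);
  });

  // finally() runs whichever side wins; clearing an already-fired timer is a no-op.
  return Promise.race([promise, timedOut]).finally(() => clearTimeout(timer));
}
```

With this shape, a fast operation no longer leaves a pending timer holding the event loop open for the rest of the timeout window.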

The empty case is another consequence of first-settlement semantics. Promise.race([]) returns a promise that never settles. This is a real edge case in dynamically built arrays. If filtering removes every candidate, the await hangs forever unless the code handles the empty case before calling race().
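A small guard turns the silent hang into an explicit failure. This is a sketch; raceNonEmpty() is a hypothetical name, and rejecting with a RangeError is one policy among several (returning a default value would be another).

```javascript
// Sketch: refuse to race an empty candidate list instead of hanging forever.
function raceNonEmpty(candidates) {
  const list = Array.from(candidates); // materialize so the length can be checked
  if (list.length === 0) {
    return Promise.reject(new RangeError("raceNonEmpty: no candidates to race"));
  }
  return Promise.race(list);
}
```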

Race is also the wrong fallback primitive when fast failure should not win. If you race a mirror that rejects in 5ms against a mirror that fulfills in 100ms, race() rejects at 5ms. The successful mirror does not count. Fallback reads usually want Promise.any(), which ignores early rejections while a later fulfillment is still possible.
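The mirror scenario can be reproduced with timers. In this sketch mirror() and compareRaceAndAny() are hypothetical, and the delays are scaled down from the ones in the text.

```javascript
// Hypothetical mirror: settles after `ms`, rejecting when `outcome` is an Error.
function mirror(ms, outcome) {
  return new Promise((resolve, reject) => {
    setTimeout(() => {
      if (outcome instanceof Error) reject(outcome);
      else resolve(outcome);
    }, ms);
  });
}

async function compareRaceAndAny() {
  // Fresh inputs for each combinator: a promise represents one already-started attempt.
  const makeInputs = () => [
    mirror(5, new Error("mirror A down")),
    mirror(30, "payload from mirror B")
  ];

  const raced = await Promise.race(makeInputs())
    .catch(err => `rejected: ${err.message}`);
  const anyResult = await Promise.any(makeInputs());

  return { raced, anyResult };
}
```

race() reports the early failure from mirror A, while any() waits for mirror B's payload.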

Repeated races in loops deserve extra care. This code starts a new check whenever the timer wins:

async function pollWithRefresh(checkFn, intervalMs) {

while (true) {

const result = await Promise.race([

checkFn(),

timeout(intervalMs).catch(() => "timeout")

]);

if (result !== "timeout") return result;

}

}

The old checkFn() promise is not included in the next iteration's race. If it completes after losing its own iteration, its result is discarded. The loop can also accumulate outstanding checks if checkFn() regularly takes longer than intervalMs. For polling, either make each check cancellable, keep a tracked set of outstanding checks, or run one check at a time and sleep between attempts. An old race loser cannot settle a later race.
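The last of those options, one check at a time with a sleep between attempts, might look like the following sketch. pollSequentially(), its isDone predicate, and the maxAttempts bound are illustrative additions, not part of the loop above.

```javascript
const sleep = ms => new Promise(resolve => setTimeout(resolve, ms));

// Sketch: at most one check is in flight; `isDone` and `maxAttempts` are assumptions.
async function pollSequentially(checkFn, { intervalMs, maxAttempts, isDone = Boolean }) {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    // The next check cannot start until this one settles.
    const result = await checkFn(attempt);
    if (isDone(result)) return result;
    if (attempt < maxAttempts) await sleep(intervalMs);
  }
  throw new Error(`not ready after ${maxAttempts} attempts`);
}
```

The trade-off is that a slow check delays the next attempt instead of overlapping with it, so outstanding work stays bounded at one.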

Promise.any()

Promise.any() is the success-oriented counterpart to race(). It fulfills with the first input to fulfill. Rejections are ignored while any input could still fulfill. If none fulfill, the aggregate rejects with an AggregateError.

Native fetch() needs one extra rule before it belongs in Promise.any() examples: HTTP status codes do not reject the fetch promise. A 503 response fulfills with response.ok === false; network failures and aborts reject. If HTTP 4xx/5xx should count as failure, wrap the response check.

async function fetchOk(url, options) {

const response = await fetch(url, options);

if (!response.ok) {

const err = new Error(`HTTP ${response.status}`);

err.status = response.status;

err.response = response;

throw err;

}

return response;

}

With that wrapper, CDN fallback has the semantics the code claims:

const response = await Promise.any([

fetchOk("https://cdn-a.example.com/data"),

fetchOk("https://cdn-b.example.com/data"),

fetchOk("https://cdn-c.example.com/data")

]);

The first HTTP-success response wins. A 503 or 429 response becomes a rejection reason because fetchOk() throws. Without that wrapper, the first HTTP response would fulfill the fetch() promise even if the server returned an error response.

If all inputs reject, inspect err.errors:

try {

await Promise.any(mirrors.map(url => fetchOk(url)));

} catch (err) {

console.log(err instanceof AggregateError);

console.log(err.errors.length);

console.log(err.errors.map(reason => String(reason)));

}

AggregateError.errors contains rejection reasons, not necessarily Error objects. Code can reject with strings, numbers, objects, or errors. Real code should reject with Error instances, but diagnostic code cannot assume every reason has .message.
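Diagnostic code can normalize reasons before logging them. The helper below is a sketch; describeReason() is a hypothetical name and the output format is arbitrary.

```javascript
// Sketch: turn any rejection reason into a printable string.
function describeReason(reason) {
  if (reason instanceof Error) return `${reason.name}: ${reason.message}`;
  if (typeof reason === "string") return reason;
  try {
    // JSON.stringify returns undefined for values such as undefined or functions.
    return JSON.stringify(reason) ?? String(reason);
  } catch {
    return String(reason); // circular structures, BigInt, and similar
  }
}

async function demoDescribe() {
  try {
    await Promise.any([
      Promise.reject(new TypeError("bad mirror")),
      Promise.reject("plain string"),
      Promise.reject({ code: 503 })
    ]);
  } catch (err) {
    return err.errors.map(describeReason); // errors follow input order
  }
}
```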

The AggregateError object itself is not iterable. Its .errors property is the useful array. In Node v24's V8, the rejection created by Promise.any() uses the message "All promises were rejected". A manually constructed new AggregateError(errors) can have an empty message unless you pass one:

throw new AggregateError(

[new Error("CDN-A down"), new Error("CDN-B timeout")],

"All CDNs failed"

);

Promise.any() is for redundancy where success means "at least one source worked." Like the other combinators, it is not a cancellation primitive. The losing requests keep running unless you cancel them explicitly.

| Combinator | Fulfills when | Rejects when | Short-circuits | Empty input |
|---|---|---|---|---|
| Promise.all() | All inputs fulfill | First input rejects, or iterable fails | On first rejection | Already fulfilled with [] |
| Promise.allSettled() | All inputs settle | Iterable/protocol fails | No, for input settlement | Already fulfilled with [] |
| Promise.race() | First settlement is fulfillment | First settlement is rejection, or iterable fails | On first settlement | Pending forever |
| Promise.any() | First input fulfills | All inputs reject, or iterable fails | On first fulfillment | Rejected with AggregateError |

Concurrency Limiting

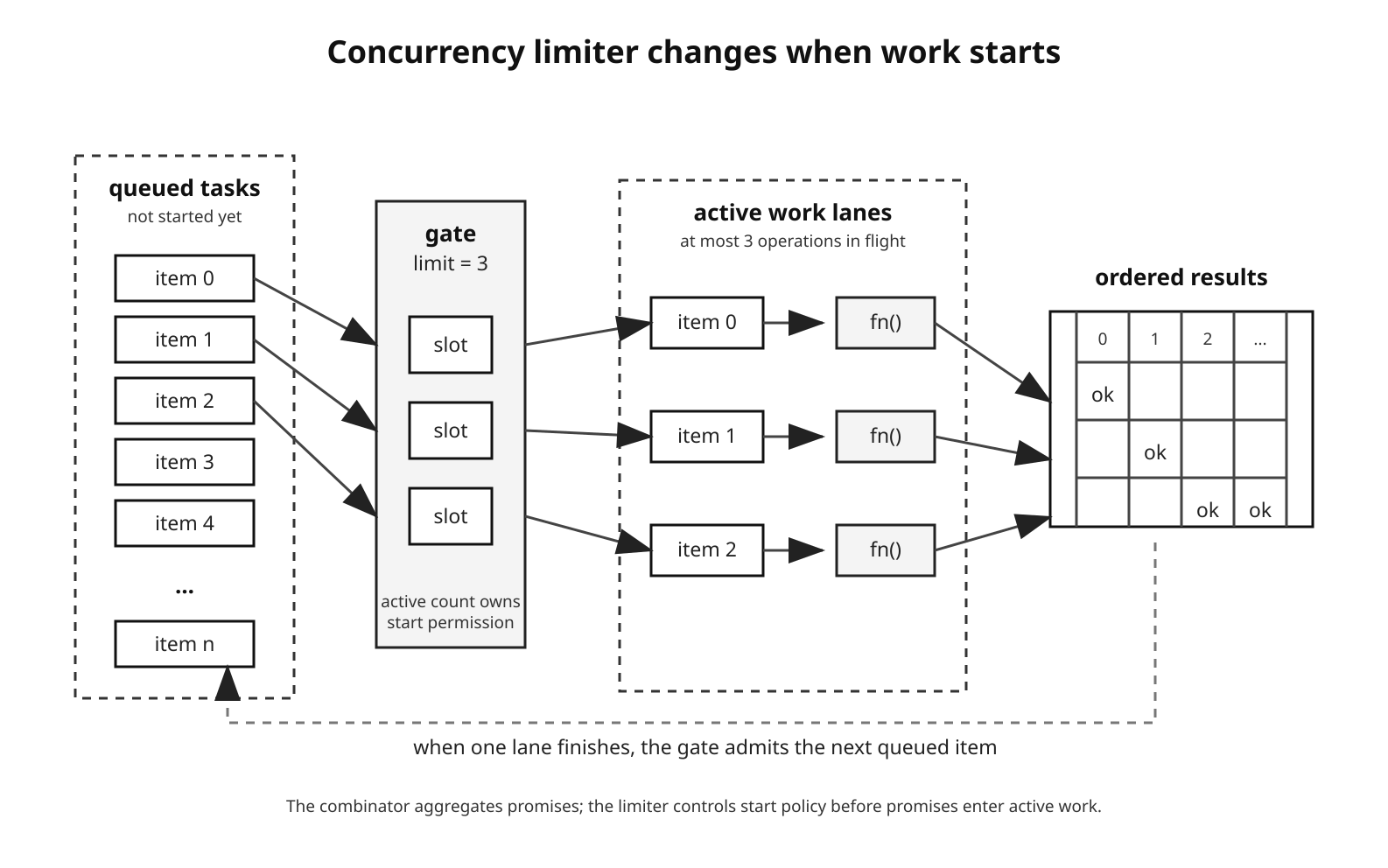

The same separation between aggregation and ownership shows up in concurrency. The combinators aggregate promises; they do not limit how many operations the surrounding code starts.

const results = await Promise.all(

urls.map(url => fetchOk(url))

);

This starts one request per URL. For 500 URLs, the JavaScript fan-out is still 500, even though the actual socket count depends on the HTTP client, target origins, dispatcher configuration, protocol, pooling, and operating-system limits.

A worker-pool mapper changes the start policy by allowing at most concurrency operations to be active at a time:

Figure 3 — A concurrency limiter changes start policy: only a bounded number of operations are active while queued tasks wait for capacity.

async function pMap(items, fn, concurrency) {

if (!Number.isInteger(concurrency) || concurrency < 1) {

throw new RangeError("concurrency must be a positive integer");

}

const results = new Array(items.length);

let nextIndex = 0;

async function worker() {

while (nextIndex < items.length) {

const index = nextIndex++;

results[index] = await fn(items[index], index);

}

}

const workers = Array.from(

{ length: Math.min(concurrency, items.length) },

worker

);

await Promise.all(workers);

return results;

}

Each worker takes the next index synchronously, then awaits the operation. The nextIndex++ step is safe here because JavaScript runs one piece of synchronous code at a time on this event loop. Another worker can resume only after the current worker reaches an await or returns to the runtime.

The caller still receives an ordered result array:

const responses = await pMap(

urls,

url => fetchOk(url),

10

);

At most ten fetches are active. The result array preserves input order because each worker writes to its captured index. If fn() rejects, the internal Promise.all(workers) rejects. Already-started work continues, and later errors may be hidden behind the first rejection. Use allSettled()-style wrapping inside fn() when the batch needs complete failure reporting.
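One way to get complete failure reporting is to wrap fn() so that every item yields a descriptor. This is a sketch under that assumption: pool() repeats the worker-pool shape of pMap() above in compact form so the example is self-contained, and poolSettled() is a hypothetical name.

```javascript
// Compact worker pool in the same shape as pMap() above.
async function pool(items, fn, concurrency) {
  const results = new Array(items.length);
  let nextIndex = 0;
  async function worker() {
    while (nextIndex < items.length) {
      const index = nextIndex++;
      results[index] = await fn(items[index], index);
    }
  }
  const count = Math.min(concurrency, items.length);
  await Promise.all(Array.from({ length: count }, worker));
  return results;
}

// Hypothetical variant: failures become descriptors, so one rejection never stops a worker.
function poolSettled(items, fn, concurrency) {
  return pool(items, async (item, index) => {
    try {
      return { status: "fulfilled", value: await fn(item, index) };
    } catch (reason) {
      return { status: "rejected", reason };
    }
  }, concurrency);
}
```

Because the wrapper never rejects, every worker drains the queue and the caller receives one descriptor per input, in input order.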

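The limit also changes runtime shape. For roughly uniform latencies, total runtime is close to the number of waves (item count divided by concurrency, rounded up) multiplied by average latency. Real batches follow tail latency, though a worker pool softens it: a slow item holds only its own slot while the other workers keep pulling from the queue, whereas fixed-size chunking would make every chunk wait for its slowest item.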

A limiter uses the same idea, but exposes it as a reusable gate that any part of the application can call:

function pLimit(concurrency) {

if (!Number.isInteger(concurrency) || concurrency < 1) {

throw new RangeError("concurrency must be a positive integer");

}

let active = 0;

const queue = [];

function runNext() {

if (active >= concurrency || queue.length === 0) return;

active++;

queue.shift()();

}

return function limit(fn) {

return new Promise((resolve, reject) => {

queue.push(() => {

Promise.resolve()

.then(fn)

.then(resolve, reject)

.finally(() => {

active--;

runNext();

});

});

runNext();

});

};

}

The line to notice is Promise.resolve().then(fn): it handles promise-returning functions, synchronous return values, and synchronous throws through one path. The finally() block releases the slot even when fn() fails. A version that calls fn().then(...) directly breaks when fn() returns a plain value and can leak active when fn() throws before returning.

Use a pool when you have one fixed collection:

const bodies = await pMap(urls, url => fetchJsonOk(url), 20);

Use a limiter when many call sites share one resource budget:

const dbLimit = pLimit(20);

const user = await dbLimit(() => loadUser(id));

This is a counting-semaphore pattern in JavaScript form: active counts occupied slots, queue holds waiters, and finally() releases a slot. The limiter does not require locks because the shared counter is mutated only during synchronous JavaScript sections. The work behind the function may still use sockets, the kernel, or libuv's thread pool.

Production code often uses packages such as p-limit and p-map instead of local helpers. They add queue introspection, abort support, async-iterable variants, skip controls, and well-tested edge-case behavior. Check the specific package version before assuming an option exists.

The right concurrency limit usually comes from a constraint outside the combinator:

- API rate limits return 429 responses or explicit rate-limit headers.

- Database pools have a fixed connection count.

- File descriptor limits are set by the shell, service manager, container, and host policy. Check ulimit -n and service limits in the target environment.

- Memory rises with every in-flight request, response buffer, parser state, closure, and retained request object.

Retry with Exponential Backoff

Retry builds on the same start-time rule. It belongs around a promise-returning function, not around a promise value. A promise represents one already-started attempt; a function can start a fresh attempt each time.

async function retry(fn, {

maxRetries = 3,

baseMs = 1000,

shouldRetry = () => true

} = {}) {

for (let attempt = 0; attempt <= maxRetries; attempt++) {

try {

return await fn();

} catch (err) {

if (attempt === maxRetries || !shouldRetry(err)) throw err;

const backoff = baseMs * 2 ** attempt;

const delay = backoff * (0.5 + Math.random() * 0.5);

await new Promise(resolve => setTimeout(resolve, delay));

}

}

}

The delay grows after each failed attempt. With baseMs = 1000, retry delays start around 500-1000ms, then 1000-2000ms, then 2000-4000ms with the jitter formula shown above. AWS calls this family "equal jitter": part of the delay is retained and part is randomized. Full jitter, which randomizes from zero to the current backoff cap, spreads retry timing even more under heavy contention.
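The two jitter styles differ only in how the random factor is applied. The sketch below isolates the delay calculation; both function names are hypothetical, capMs is an added parameter that retry() above does not have, and random is injectable only so the bounds can be checked.

```javascript
// Equal jitter, matching the formula in retry(): half retained, half randomized.
function equalJitterDelay(attempt, baseMs, random = Math.random) {
  const backoff = baseMs * 2 ** attempt;
  return backoff * (0.5 + random() * 0.5); // range [backoff / 2, backoff)
}

// Full jitter: anywhere from zero up to the (optionally capped) backoff.
function fullJitterDelay(attempt, baseMs, capMs = Infinity, random = Math.random) {
  const backoff = Math.min(capMs, baseMs * 2 ** attempt);
  return random() * backoff; // range [0, backoff)
}
```

Full jitter can produce very short delays, so it trades a guaranteed minimum wait for a wider spread between clients.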

The shouldRetry predicate keeps permanent failures out of the retry loop:

const data = await retry(

() => fetchJsonOk("https://api.example.com/data"),

{

maxRetries: 3,

baseMs: 500,

shouldRetry: err => err.status === 429 || err.status >= 500

}

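);

That predicate depends on the earlier HTTP wrapper. Errors that never reached an HTTP response, such as network failures or aborts, carry no .status, so this predicate does not retry them; widen it if they should be retried. fetchJsonOk() turns HTTP status into thrown errors with a .status property: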

async function fetchJsonOk(url, options) {

const response = await fetchOk(url, options);

return response.json();

}

The retry policy should follow the kind of failure. Retry transient failures: connection resets, temporary DNS failures, socket timeouts, 503 responses, and 429 responses where retrying is allowed. Respect Retry-After when the server sends it. Do not retry bad input, authorization failures, missing resources, or validation errors unless the application has a specific reason.
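Retry-After carries either a number of seconds or an HTTP date. A sketch of a parser follows; retryAfterMs() is a hypothetical helper, and returning null for a missing or unparseable header is an assumption about what the caller wants.

```javascript
// Sketch: convert a Retry-After header value into a delay in milliseconds.
// Returns null when the header is absent or cannot be parsed.
function retryAfterMs(headerValue, now = Date.now()) {
  if (headerValue == null) return null;
  const text = String(headerValue).trim();

  if (/^\d+$/.test(text)) return Number(text) * 1000; // delay-seconds form

  const date = Date.parse(text); // HTTP-date form
  if (Number.isNaN(date)) return null;
  return Math.max(0, date - now); // a date in the past means "retry now"
}
```

With the fetchOk() wrapper shown earlier, the header would be read from err.response.headers.get("retry-after"), and a retry loop could use the larger of this value and its own backoff delay.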

Mutating operations add another constraint. If a POST request creates a resource and the response is lost, retrying the same POST may create a duplicate. Use idempotency keys, client-generated operation IDs, or restrict automatic retry to safe reads and explicitly idempotent writes.

Immediate retries can amplify load exactly when the dependency has the least spare capacity. Backoff reduces the retry rate, and jitter prevents clients with the same retry policy from lining up on the same millisecond.

Timeout and Cancellation

The timeout examples so far have only changed what the caller observes. They have not stopped the losing operation.

AbortController is the standard cancellation mechanism for web-compatible APIs in Node. You create a controller, pass its signal to operations that accept it, and call abort() when the operation should stop. The operation must observe the signal. The promise combinator does not enforce that for you.

For fetch(), deadline cancellation can be compact:

async function fetchWithTimeout(url, ms, options = {}) {

const signal = options.signal

? AbortSignal.any([options.signal, AbortSignal.timeout(ms)])

: AbortSignal.timeout(ms);

return fetchOk(url, { ...options, signal });

}

AbortSignal.timeout(ms) creates a signal that aborts after ms. In Node, a timeout signal used with fetch() rejects with a TimeoutError DOMException. A manual AbortController.abort() usually produces an AbortError unless you pass a custom reason.

Below JavaScript, the operation decides what "abort" means. For fetch(), aborting rejects the promise and aborts the request/body work. Transport cleanup, connection reuse, and protocol details are implementation concerns. Do not write code that depends on "abort always closes this TCP connection" as a portable guarantee.

node:timers/promises accepts a signal directly:

import { setTimeout as sleep } from "node:timers/promises";

await sleep(5000, null, { signal });

If the signal aborts first, the returned promise rejects with AbortError. The promisified timer API has accepted AbortSignal since Node 15.

Custom async utilities should follow the same shape:

function delay(ms, { signal } = {}) {

return new Promise((resolve, reject) => {

if (signal?.aborted) return reject(signal.reason);

const timer = setTimeout(done, ms);

function done() {

signal?.removeEventListener("abort", onAbort);

resolve();

}

function onAbort() {

clearTimeout(timer);

reject(signal.reason ?? new DOMException("Aborted", "AbortError"));

}

signal?.addEventListener("abort", onAbort, { once: true });

});

}

The listener uses { once: true }, and the timer-success path removes it. Without that cleanup, abort listeners can keep closures alive longer than intended.

A single signal can be passed through several operations:

const controller = new AbortController();

await Promise.all([

fetchData({ signal: controller.signal }),

processRecords({ signal: controller.signal }),

delay(1000, { signal: controller.signal })

]);

Calling controller.abort() requests cancellation for all three. Only operations that accept and honor the signal will stop. If processRecords() ignores the option, its promise keeps running.
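The difference between honoring and ignoring a signal can be shown with two timer-based stand-ins. In this sketch abortableSleep() repeats the shape of delay() above so the example is self-contained, and stubborn() is a hypothetical operation that never looks at the signal.

```javascript
// Abortable sleep in the same shape as delay() above.
function abortableSleep(ms, signal) {
  return new Promise((resolve, reject) => {
    if (signal.aborted) return reject(signal.reason);
    const timer = setTimeout(() => {
      signal.removeEventListener("abort", onAbort);
      resolve("finished");
    }, ms);
    function onAbort() {
      clearTimeout(timer);
      reject(signal.reason);
    }
    signal.addEventListener("abort", onAbort, { once: true });
  });
}

// Hypothetical operation that ignores cancellation entirely.
const stubborn = ms => new Promise(resolve => setTimeout(resolve, ms, "finished anyway"));

async function demoSharedSignal() {
  const controller = new AbortController();
  // allSettled() is used here so both outcomes can be observed.
  const work = Promise.allSettled([
    abortableSleep(200, controller.signal),
    stubborn(30)
  ]);

  controller.abort(new Error("caller gave up"));

  const [honoring, ignoring] = await work;
  return { honoring: honoring.reason.message, ignoring: ignoring.value };
}
```

The abortable operation rejects immediately with the abort reason, while the one that ignores the signal runs to completion.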

AbortSignal.timeout() is available in Node v17.3+ and v16.14+. AbortSignal.any() is available in Node v20.3+ and v18.17+. In Node v24, both are available globally:

const combined = AbortSignal.any([

userController.signal,

AbortSignal.timeout(10_000)

]);

The combined signal aborts when any input signal aborts. This is cancellation composition, not promise-value composition.

AbortSignal support has spread through Node's API surface. Useful examples include node:fs/promises read/write methods, callback node:fs read/write methods that accept options, node:stream/promises.pipeline, events.once(), events.on(), child_process.exec(), child_process.spawn(), fetch(), and node:timers/promises. Filesystem aborts are best-effort around Node's internal buffering; an abort may stop further work but not necessarily undo bytes already read or written.

Composing Patterns

In real programs these pieces usually layer together: concurrency limit outside, retry around each attempt, deadline inside each attempt, and a combinator to aggregate the batch.

const results = await pMap(urls, url => {

return retry(

() => fetchWithTimeout(url, 5000).then(r => r.json()),

{

maxRetries: 3,

baseMs: 500,

shouldRetry: err => err.status === 429 || err.status >= 500

}

);

}, 10);

The outer pMap() starts at most ten URLs at a time. retry() controls attempt policy for one URL. fetchWithTimeout() gives each attempt a cancellable deadline. The Promise.all() inside pMap() waits for the workers, not for all URLs at once.

Partial health checks often combine race and allSettled():

const health = await Promise.allSettled(

services.map(service =>

Promise.race([

checkService(service),

timeout(2000)

])

)

);

That collects every service outcome while bounding how long each individual wait can affect the aggregate. It still does not cancel checkService() after timeout. Use an abortable check function when the underlying operation supports cancellation.
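An abortable version might replace the race with a per-check AbortSignal.timeout(). The following is a sketch: this checkService() is a timer-based stand-in with an assumed { signal } option and an assumed service shape of { name, latencyMs }; a real check would pass the signal on to fetch() or a client library.

```javascript
// Hypothetical check that honors a signal.
function checkService(service, { signal }) {
  return new Promise((resolve, reject) => {
    const onAbort = () => {
      clearTimeout(timer); // the check really stops
      reject(signal.reason);
    };
    const timer = setTimeout(() => {
      signal.removeEventListener("abort", onAbort);
      resolve(`${service.name} ok`);
    }, service.latencyMs);
    signal.addEventListener("abort", onAbort, { once: true });
  });
}

async function checkAll(services, perCheckMs) {
  const results = await Promise.allSettled(
    services.map(service =>
      checkService(service, { signal: AbortSignal.timeout(perCheckMs) })
    )
  );
  return results.map((result, i) => ({
    name: services[i].name,
    healthy: result.status === "fulfilled",
    detail: result.status === "fulfilled" ? result.value : result.reason.name
  }));
}
```

Here a slow check is stopped when its deadline fires, and its descriptor carries the TimeoutError reason instead of a generic race loss.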

CDN fallback brings the layers together because it needs success semantics and loser cleanup:

async function firstJson(urls, timeoutMs) {

const deadline = AbortSignal.timeout(timeoutMs);

const controllers = urls.map(() => new AbortController());

const attempts = urls.map((url, index) => {

const signal = AbortSignal.any([deadline, controllers[index].signal]);

return fetchJsonOk(url, { signal }).then(data => ({ data, index }));

});

try {

const { data, index } = await Promise.any(attempts);

controllers.forEach((controller, i) => {

if (i !== index) controller.abort();

});

return data;

} catch (err) {

controllers.forEach(controller => controller.abort());

throw err;

}

}

Each request gets its own controller, combined with the overall deadline. The winning request resolves only after fetchJsonOk() has consumed the response body, so aborting the losers cannot corrupt the returned body. If every CDN fails, Promise.any() rejects with an AggregateError that contains the individual rejection reasons. If the deadline fires first, the requests that honor the signal reject through abort.

The point is ownership. Promise.any() owns success selection. AbortController owns cancellation request. fetchJsonOk() owns HTTP status policy. No single primitive does all three.

How V8 Implements the Combinators

The observable behavior above comes from ECMAScript algorithms. V8 implements the main combinator paths as Torque builtins that mirror those algorithms while adding engine-specific fast paths. The relevant source lives in files such as src/builtins/promise-all.tq, promise-race.tq, and promise-any.tq. Torque is V8's internal language for defining builtins; it is not ordinary JavaScript, and the exact implementation can change across V8 versions.

For Promise.all(), the core bookkeeping is a remaining-elements count, a result array, and per-element reactions that know their input index. When an input fulfills, its reaction stores the value at the captured index and decrements the shared count. When the count reaches zero, the aggregate resolves with the result array.

The reject path points at the aggregate promise's reject capability. The first input rejection settles the aggregate. Later fulfillment or rejection handlers may still run, but attempts to settle the already-rejected aggregate are ignored by the promise capability's already-settled checks.
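The bookkeeping can be approximated in ordinary JavaScript. The sketch below is illustrative only: it is not V8's Torque code, it skips subclassing and species handling, and allSketch() is a hypothetical name.

```javascript
// Illustrative only: a userland approximation of the Promise.all() bookkeeping.
function allSketch(iterable) {
  return new Promise((resolve, reject) => {
    const results = [];
    let remaining = 1; // starts at 1 so the aggregate cannot resolve mid-iteration
    let index = 0;

    for (const item of iterable) {
      const slot = index++; // each reaction captures its own input index
      results[slot] = undefined;
      remaining++;
      Promise.resolve(item).then(value => {
        results[slot] = value; // completion order never changes the slot
        if (--remaining === 0) resolve(results);
      }, reject); // calls after the first settlement are ignored
    }

    // Drop the initial count; this also resolves the empty-iterable case.
    if (--remaining === 0) resolve(results);
  });
}
```

Because the loop runs inside the promise executor, an iterator that throws rejects the aggregate, which mirrors the iterable-failure behavior described earlier.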

Promise.allSettled() uses the same broad shape, except both fulfillment and rejection reactions store descriptors and decrement the remaining count. Rejection from an input is data, not aggregate failure. Iterator/protocol failures are different; they reject the aggregate before normal descriptor collection can complete.

Promise.race() needs less bookkeeping because it does not need a result array, error array, or remaining count. It still has to resolve each input through the promise machinery and attach reactions. On V8 fast paths, native promises can avoid some general .then() overhead, but slow paths still exist for thenables, subclassing/species behavior, hooks, debugging, and other observable cases.

Promise.any() tracks rejection reasons by input index and a remaining count. Fulfillment points at the aggregate resolve capability. Each rejection stores its reason. If the rejection count reaches the input count, V8 creates an AggregateError from the reasons and rejects the aggregate.

For ordinary batches of tens of promises, this bookkeeping is not the performance issue. For huge batches, the real cost is up-front attachment: the combinator must iterate the input and attach handlers before it can observe results. A Promise.all() over 100,000 started operations creates 100,000 pieces of promise-observation work before the first useful aggregate result can be delivered.

Production Edge Cases

The edge cases below are different symptoms of the same ownership handoff: the combinator settles one aggregate promise, and the surrounding code owns everything else.

Lost diagnostics in Promise.all(). The aggregate rejects with the first rejection reason. Later rejection reasons are observed internally but not surfaced by the aggregate. Use allSettled() when the caller needs the whole failure set.

Manual all-settled wrapping. Sometimes you want Promise.all() to fulfill with mixed values and errors:

const results = await Promise.all(

urls.map(url =>

fetchJsonOk(url).catch(error => ({ error }))

)

);

That is a local policy choice. It turns failures into values, so the caller must inspect the array.

Sequential work by accident.

const a = await fetchA();

const b = await fetchB();

const c = await fetchC();

fetchB() starts after fetchA() fulfills. fetchC() starts after fetchB() fulfills. If the work is independent, start the promises first:

const [a, b, c] = await Promise.all([

fetchA(),

fetchB(),

fetchC()

]);

The difference is start time. The function call starts work; await observes completion.

Ignored race losers. If a timeout wins a Promise.race(), the losing operation may still fulfill later. That is an ignored fulfillment, not an unhandled rejection. The resource cost can still count.

AggregateError.errors is the iterable. for (const err of aggregateError) throws because AggregateError itself is not iterable. Use aggregateError.errors.

Microtask ordering. Already-fulfilled inputs do not make combinator observers run inline. Promise.all([Promise.resolve(1)]) still delivers .then() handlers and await continuations asynchronously. Avoid teaching exact microtask counts. The count can depend on native-promise fast paths, thenables, and surrounding scheduling. The stable rule is current stack first, promise observers later.

Sparse arrays. Array holes are treated as undefined values during iteration:

const result = await Promise.all([

Promise.resolve("a"),

,

Promise.resolve("c")

]);

// ["a", undefined, "c"]

No warning is emitted. If a dynamically built promise array has holes, the combinator faithfully preserves them as undefined results.

allSettled() in loops. This is a bug unless failures are inspected:

for (const batch of batches) {

await Promise.allSettled(batch.map(processItem));

}

The loop processes every batch and drops every rejection descriptor. If failure should affect control flow, count, log, aggregate, or throw based on the descriptors before advancing.
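One possible policy is to stop at the first batch that reports failures and surface all of them. This is a sketch: processBatches() is a hypothetical name, and throwing an AggregateError is one choice among the options listed above.

```javascript
// Sketch: process batches in order, but stop when a batch reports failures.
async function processBatches(batches, processItem) {
  let processed = 0;

  for (const [batchIndex, batch] of batches.entries()) {
    const results = await Promise.allSettled(
      batch.map(item => processItem(item))
    );

    const failures = results
      .filter(result => result.status === "rejected")
      .map(result => result.reason);
    processed += results.length - failures.length;

    if (failures.length > 0) {
      // Every failure in the batch is preserved, not just the first.
      throw new AggregateError(
        failures,
        `batch ${batchIndex}: ${failures.length} of ${results.length} items failed`
      );
    }
  }

  return processed;
}
```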

The recurring rule is the same one the chapter started with. Combinators own settlement. Your code owns start time, cancellation, retry policy, result inspection, and the size of the fan-out.