Node.js Microtasks: Promise Jobs, process.nextTick, and Timer Order

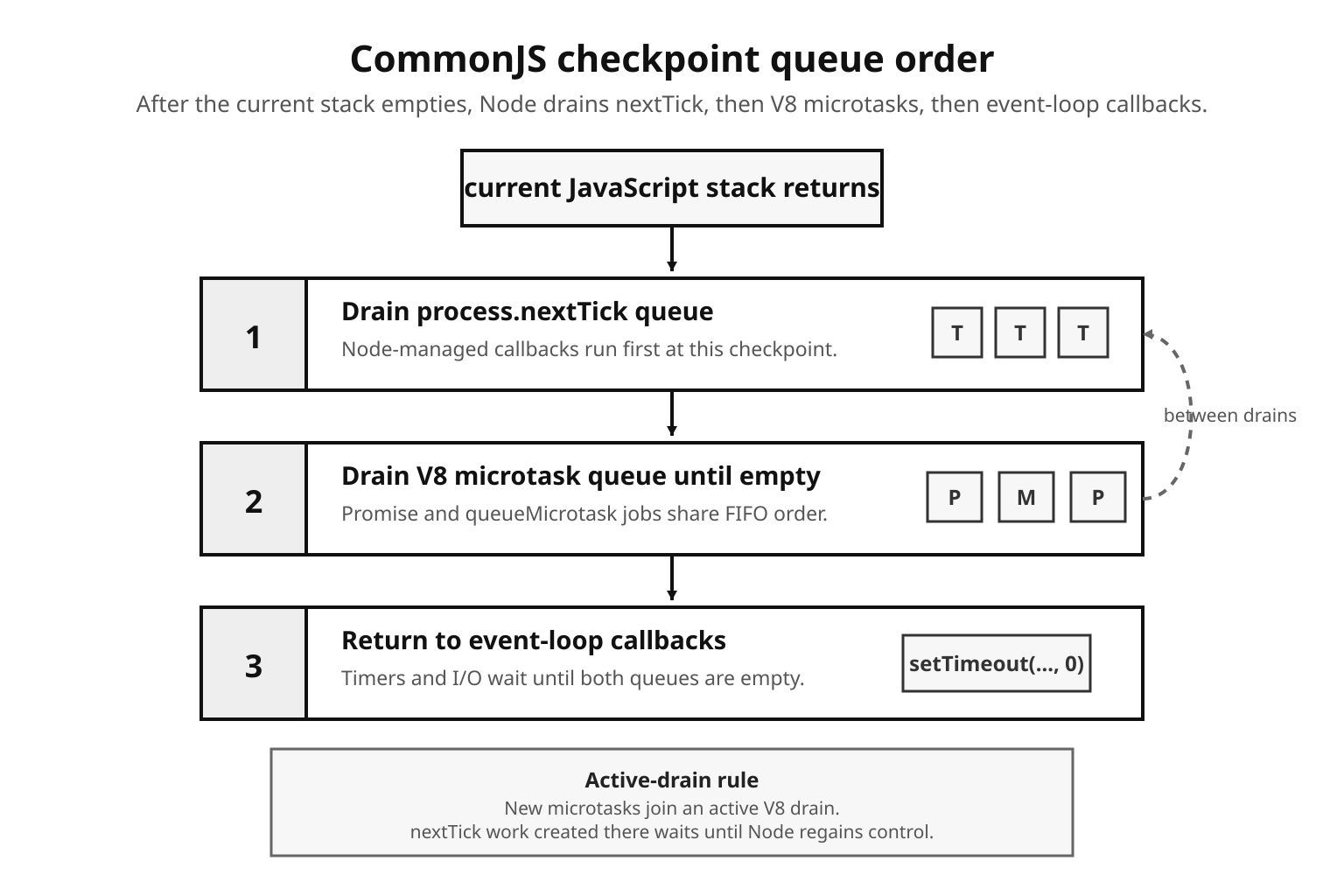

Promise syntax can make asynchronous code look linear, but the runtime behavior still comes from queues, checkpoints, and promise state. A promise reaction runs after the current JavaScript stack has emptied. In many cases it runs before timers, I/O callbacks, and setImmediate() callbacks, but the exact ordering depends on the place where the work was scheduled.

That context matters because Node coordinates more than one queue. At a CommonJS main-script checkpoint, it drains process.nextTick() callbacks before V8 microtasks. ES module top-level code and code that is already running inside a microtask use a different active context, so the same small example can print in a different order. The rest of this chapter keeps those contexts named instead of treating "microtask ordering" as one universal rule.

Microtask Order at a Glance

The compact table keeps those scheduling contexts separate.

| Scheduling context | Node v24 order after the current stack |
|---|---|
| CommonJS main script | process.nextTick() callbacks, V8 promise jobs and queueMicrotask() callbacks, then event-loop callbacks such as timers, most I/O, and setImmediate() |
| ES module top level | V8 promise jobs and queueMicrotask() callbacks already in the module evaluation drain, then process.nextTick() callbacks, then event-loop callbacks |
| Inside an existing microtask | V8 microtasks queued during the active drain, then process.nextTick() callbacks created during that drain, then later event-loop callbacks |

Promise Microtasks

A promise reaction is the work scheduled by .then(), .catch(), .finally(), or an await continuation. Chaining adds more reactions to the same microtask system, and rejection handling uses it too. That is why unhandled rejection reporting waits briefly: the runtime needs to see whether a rejection handler appears before it decides that the promise was left unhandled.

The usual timer comparison follows from that queue placement. Promise.resolve().then() often runs before setTimeout(fn, 0) because the promise reaction is ready at the next microtask checkpoint. The timer callback goes through libuv's timer system, and zero delay still means "after the current turn and timer scheduling rules," not "right now."

Those two callbacks live in the same process, but they do not enter the same queue. The .then() handler becomes a V8 microtask. The timer callback waits in libuv's timer machinery. Node decides when to drain each side as it moves between JavaScript execution and event-loop work.

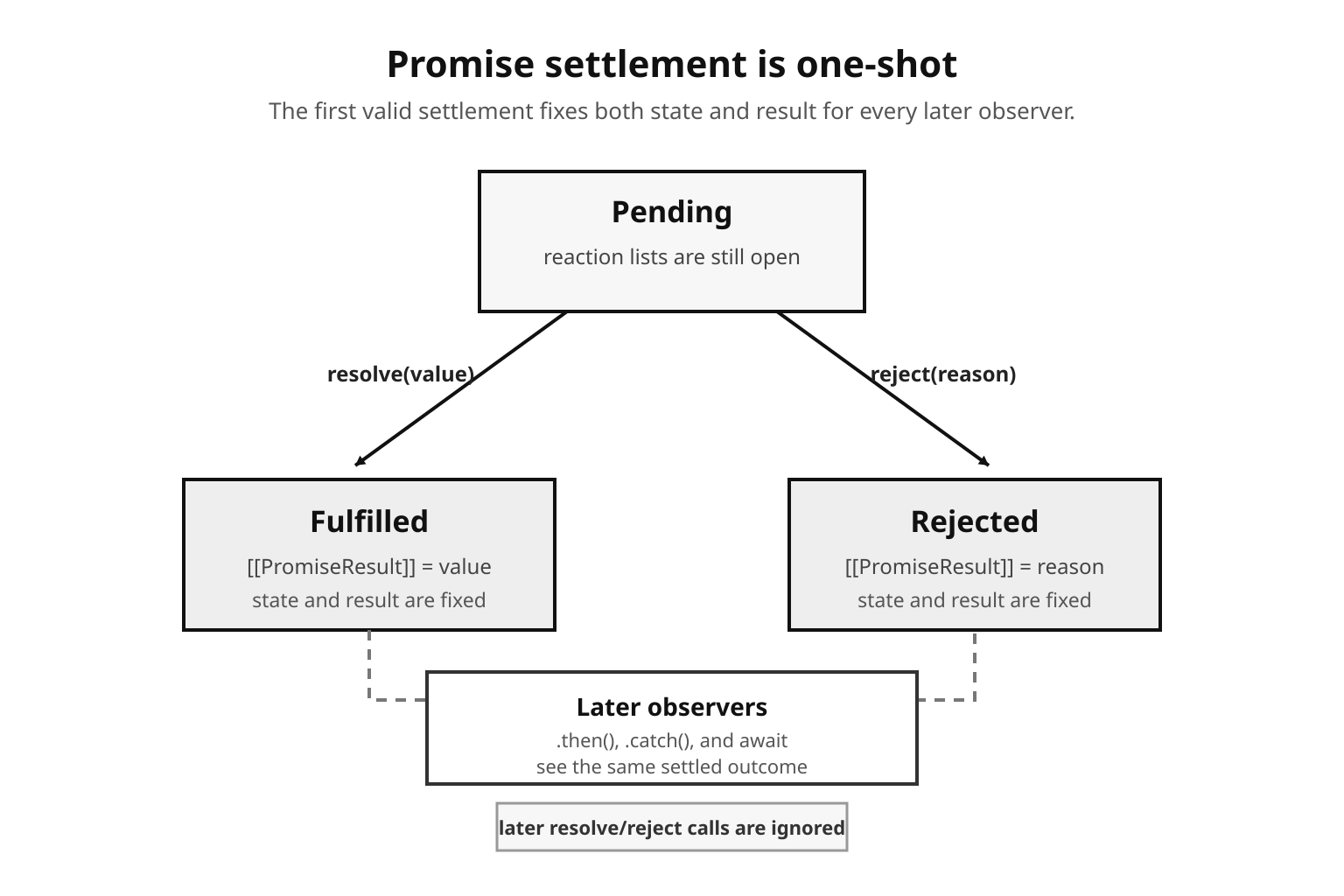

Before looking at those checkpoints, it helps to pin down what the promise is carrying. A promise is a one-settlement state machine. It starts pending, can become fulfilled, or can become rejected. The only valid transitions are from pending to fulfilled and from pending to rejected. After settlement, both the state and the result stay fixed.

Figure 1 — A promise can settle only once: pending becomes fulfilled or rejected, and the selected state and result remain fixed for every later observer.

The ECMAScript model describes that state with internal slots. [[PromiseState]] is pending, fulfilled, or rejected. [[PromiseResult]] holds the fulfillment value or rejection reason. A pending promise also keeps fulfillment and rejection reaction lists for handlers registered through .then(), .catch(), and .finally(). Engines can store those pieces however they like, but the observable rule is one-shot dispatch in registration order.

JavaScript code cannot read those slots directly. console.log(promise) in Node may show <pending> or a fulfilled value through inspector formatting, but there is no property access for [[PromiseState]]. Observation happens through reactions. That restriction keeps all observers on the same scheduling path: state changes through settlement, and observation goes through jobs.
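The difference between inspector formatting and property access can be seen in a small sketch. It assumes a CommonJS script and uses util.inspect() from node:util, which is the formatting console.log() relies on; the variable names are illustrative.

```javascript
const { inspect } = require("node:util");

const pending = new Promise(() => {}); // never settles
const fulfilled = Promise.resolve(42);

// Inspector-style formatting can display the internal state...
console.log(inspect(pending));   // Promise { <pending> }
console.log(inspect(fulfilled)); // Promise { 42 }

// ...but ordinary property access finds nothing to read.
console.log(Object.keys(fulfilled)); // []
console.log(fulfilled.state);        // undefined

// Observation goes through a reaction job instead.
fulfilled.then(value => console.log("observed:", value));
```

The formatted string is a debugging aid only; program logic still has to attach a reaction to learn the outcome.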

The constructor's resolve and reject functions are part of a promise capability. The capability binds the promise object, the function that fulfills it, and the function that rejects it. Promise reaction jobs use that capability so each .then() link can settle the promise it returned. A handler return value therefore affects the next promise in the chain, not the one that triggered the handler.

```js
const p = new Promise((resolve, reject) => {
  resolve(42);
  resolve(99);
  reject(new Error("too late"));
});

p.then(v => console.log(v)); // 42
```

The first resolve(42) fulfills the promise. The later resolve(99) and reject() calls return without changing the result. The resolving functions share an already-resolved flag, so only the first settlement attempt can affect the promise.

That single-settlement rule removes one of the callback-era failure modes from the previous subchapter. A callback can fire twice if an API author makes that mistake. A promise capability can be called twice as well, but only the first call changes observable state.

The fulfillment value can be any JavaScript value: a primitive, an object, undefined, or another promise. Rejection can also use any value, although real code should reject with Error instances. A string rejection gives downstream code only a bare string reason; it has no name, message, or stack.
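A short comparison shows what a handler actually receives in each case. This is a minimal sketch; the reason text and the results array are made up for the demonstration.

```javascript
const results = [];

Promise.reject("disk full").catch(reason => {
  // A bare string carries no diagnostic fields.
  results.push({ type: typeof reason, hasStack: reason.stack !== undefined });
});

Promise.reject(new Error("disk full")).catch(reason => {
  // An Error carries a name, a message, and a captured stack trace.
  results.push({
    type: typeof reason,
    name: reason.name,
    hasStack: typeof reason.stack === "string"
  });
});

// Print once both reaction jobs have run.
setImmediate(() => console.log(results));
```

The first entry reports type "string" with no stack; the second reports an object named "Error" with a stack, which is what logging and error-classification code downstream usually expects.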

Promise resolution also guards against self-resolution. If code tries to resolve a promise with itself, the promise rejects with a TypeError. Without that guard, adoption would create a cycle where the promise waits for its own settlement forever. The ECMAScript algorithm checks for that case before thenable assimilation begins.

```js
let resolve;

const p = new Promise(r => { resolve = r; });

resolve(p);

p.catch(e => console.log(e.name)); // TypeError
```

You mostly see that edge case in hand-written adapters. It exists because promise resolution is recursive by design, so the runtime needs a specific stop condition for the promise that points back to itself.

The Executor Runs Inline

The function passed to new Promise() is the executor, and the constructor calls it immediately on the current stack.

```js
console.log("before");

const p = new Promise((resolve, reject) => {
  console.log("executor");
  resolve("done");
});

console.log("after");
```

The output is before, executor, after. Construction is synchronous. If the executor resolves immediately, the promise is already fulfilled by the time the constructor returns. Its handler still runs later, because .then() schedules a reaction job instead of calling the handler inline.

That split is the first scheduling rule to keep in mind. Construction can validate inputs, capture state, and even settle the promise before returning. Observation remains asynchronous, so a handler runs on a clean stack after the current JavaScript turn finishes.

The constructor creates the resolve and reject capabilities and passes them to the executor. After construction, outside code can attach reactions with .then(), .catch(), and .finally(), but it cannot settle the promise unless the executor leaked one of those functions.

Leaking the capability is possible:

```js
let finish;

const p = new Promise(resolve => {
  finish = resolve;
});

finish("done");
```

That pattern is sometimes called a deferred promise. It can be useful at integration points, such as waiting for a one-time event, bridging a callback API, or wiring a test harness. It also moves settlement authority away from the code that created the promise. Keep resolve and reject scoped tightly; a leaked capability is mutable shared state, and any holder can settle the operation.
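One way to keep the capability scoped while still waiting for a one-time event is to hand resolve to the event source and nothing else. The sketch below assumes an EventEmitter from node:events; firstEvent is a hypothetical helper written for this illustration (Node also ships events.once() for the same job).

```javascript
const { EventEmitter } = require("node:events");

// Hypothetical helper: resolves with the first payload emitted for `name`.
function firstEvent(emitter, name) {
  return new Promise(resolve => {
    // `resolve` never leaves this closure; only the emitter can settle the promise.
    emitter.once(name, resolve);
  });
}

const emitter = new EventEmitter();
const ready = firstEvent(emitter, "ready");

emitter.emit("ready", "first");
emitter.emit("ready", "second"); // the once() listener is already gone

ready.then(value => console.log(value)); // first
```

Callers get a promise they can observe, and no module-level variable holds a function that could settle it from elsewhere.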

If the executor throws, the constructor turns the throw into a rejection:

```js
const p = new Promise(() => {
  throw new Error("executor exploded");
});

p.catch(e => console.log(e.message)); // "executor exploded"
```

The constructor wraps the executor call. A synchronous throw becomes the rejection reason, while the handler that observes that reason still follows the normal asynchronous scheduling rule.

Promise.resolve(value) and Promise.reject(reason) create settled promises without the constructor form. Promise.resolve(42) returns a fulfilled promise. Promise.reject(new Error("no")) returns a rejected promise. Tests, adapters, and small library branches use these helpers constantly.

Native promises get an identity fast path:

```js
const original = Promise.resolve(1);

const wrapped = Promise.resolve(original);

console.log(original === wrapped); // true
```

When the input is already a promise created by the same constructor, Promise.resolve() returns that same object. That identity rule is part of the standard promise algorithm, and engines can avoid wrapper work on that path. Thenables follow a different path.

Subclassing changes the identity check. Promise.resolve() looks at constructor identity, so a promise created by a different promise constructor may be wrapped in a new object with the requested constructor. Application code rarely depends on that allocation detail, but Promise subclass authors do.
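The constructor check is easy to observe with a trivial subclass. In this sketch, TracedPromise is a hypothetical subclass that adds no behavior; it exists only to give Promise.resolve() a different constructor to compare against.

```javascript
// Hypothetical subclass with no extra behavior.
class TracedPromise extends Promise {}

const native = Promise.resolve(1);
const sameCtor = Promise.resolve(native);        // same constructor: identity path
const crossCtor = TracedPromise.resolve(native); // different constructor: new wrapper

console.log(sameCtor === native);                // true
console.log(crossCtor === native);               // false
console.log(crossCtor instanceof TracedPromise); // true

// The wrapper adopts the native promise's state.
crossCtor.then(v => console.log(v)); // 1
```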

Resolving With a Promise

Resolving a promise with another promise does not fulfill the outer promise with the inner promise object. It makes the outer promise adopt the inner promise's eventual state.

```js
const inner = new Promise(resolve => {
  setTimeout(() => resolve("delayed"), 100);
});

const outer = new Promise(resolve => {
  resolve(inner);
});

outer.then(v => console.log(v)); // "delayed" (after 100ms)
```

outer remains pending while inner is pending. When inner fulfills with "delayed", outer fulfills with "delayed". If inner rejects, outer rejects with the same reason. The result is state adoption, not nesting.

The same resolution procedure works for thenables: objects with a callable .then property. Promise resolution reads the property, checks whether it is callable, and schedules work that calls it with the outer promise's resolve and reject capabilities. Native promises, old promise libraries, and custom promise-like objects interoperate through that protocol.

The .then property is read once. That is important for unusual objects. If a getter for .then throws, the promise rejects with that thrown error. If the getter returns a non-callable value, the object becomes the fulfillment value. If the getter returns a function, the promise algorithm schedules the thenable job using that function reference, and later mutations to obj.then do not affect the already-scheduled job.
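A getter that counts its own reads makes the single lookup visible. The object below is contrived for the demonstration; the counter and the returned function are assumptions of this sketch, not part of any real API.

```javascript
let reads = 0;

const odd = {
  get then() {
    reads += 1;
    // The function returned here is the reference the thenable job will call.
    return resolve => resolve("via getter");
  }
};

const p = Promise.resolve(odd);
console.log(reads); // 1: the getter ran during resolution, before any job

p.then(value => {
  console.log(value, reads); // via getter 1
});
```

The count stays at one because the scheduled job calls the function reference it captured, without going back to the property.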

```js
const thenable = {
  then(onFulfill) {
    onFulfill("from thenable");
  }
};

Promise.resolve(thenable).then(v => console.log(v));
```

The output is "from thenable". The object is treated as promise-like, and the outer promise follows the result produced by its .then() method.

Thenable assimilation adds scheduling work. A plain value can fulfill the promise immediately, with attached handlers still deferred as reaction jobs. A thenable goes through PromiseResolveThenableJob, which calls the .then() method later from the microtask queue. When that thenable calls the supplied fulfillment capability, the usual PromiseReactionJob work for handlers is scheduled. Precise ordering tests can observe that extra microtask turn.
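The extra turn shows up when a thenable and a plain value are resolved side by side. In this sketch the thenable is registered first, yet its handler runs second, because its .then() method has to be called from a job before the handler's own reaction job can be queued.

```javascript
const log = [];

const thenable = {
  then(resolve) { resolve("thenable"); }
};

// Registered first, but needs a thenable job before its reaction job.
Promise.resolve(thenable).then(v => log.push(v));

// Registered second, but its reaction job is queued right away.
Promise.resolve("plain").then(v => log.push(v));

setImmediate(() => console.log(log)); // [ 'plain', 'thenable' ]
```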

The check is deliberately duck-typed: object plus callable .then is enough. That makes interop possible, but it also affects data objects with an accidental then method. A database document, API response, or mock object containing a callable then property gets treated as a thenable when passed to resolve(). The resolution procedure calls it. If the method throws, the outer promise rejects. If it never calls either callback, the outer promise stays pending.

Common fixes are to wrap the value in another object or to rename the property before the data reaches resolve(). Returning the object from a handler does not avoid the problem, because a handler's return value goes through the same resolution procedure. This mostly appears in integration code, where untyped external data crosses a promise handoff.
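Wrapping is the smallest of those fixes to demonstrate. The record below is a hypothetical piece of external data with an accidental then field; the wrapper object has no then property, so resolution treats it as a plain value.

```javascript
let thenCalls = 0;

// Hypothetical record from an external source with an accidental `then` field.
const record = {
  id: 7,
  then() { thenCalls += 1; } // never calls its callbacks
};

// The wrapper has no `then`, so the record travels as plain data.
const wrapped = Promise.resolve({ record });

wrapped.then(({ record: r }) => {
  console.log(r.id, thenCalls); // 7 0
});
```

Passing record directly to Promise.resolve() would instead call its then method and leave the resulting promise pending, since that method never invokes either callback.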

Thenables can also be malformed or adversarial. The .then() method can call fulfillment twice, call rejection after fulfillment, throw after calling fulfillment, or call both callbacks from different turns. The promise resolution procedure creates resolving functions with an internal already-resolved flag. The first call wins, and later calls return without effect. A throw after the first successful callback is ignored because the flag is already set.

```js
const messy = {
  then(resolve, reject) {
    resolve("ok");
    reject(new Error("late"));
  }
};

Promise.resolve(messy).then(v => console.log(v));
```

The output is "ok". The rejection attempt arrives after fulfillment and changes nothing. That already-resolved flag is separate from [[PromiseState]]; it protects the capability during adoption, before the outer promise necessarily reaches its final state.

This adoption path is one reason promise code can look synchronous and still run later. A thenable may call resolve() inline from its .then() method, but the outer promise still schedules reactions through microtasks. Handler execution waits for the checkpoint even when the foreign object behaves oddly.

Chaining

.then() takes two optional handlers, onFulfilled and onRejected, and it returns a new promise every time.

```js
const result = Promise.resolve(5)
  .then(v => v * 2)
  .then(v => v + 1)
  .then(v => console.log(v)); // 11
```

Those three .then() calls allocate three promises. Each handler receives the previous settled value. A normal return fulfills the next promise with that return value. A throw rejects it. Returning a promise makes the next promise adopt that returned promise's state.

The chain's control flow comes from those returned promises. Each link owns the promise to its right. Each handler transforms success or failure into the next state. The indentation stays flat, but the runtime is still building a sequence of exactly-once settlements.

Missing returns still cause quiet bugs:

```js
Promise.resolve("user")
  .then(name => {
    name.toUpperCase();
  })
  .then(v => console.log(v)); // undefined
```

The first handler returns undefined, so the next promise fulfills with undefined. The runtime does not know that the missing return was accidental. Linters catch many of these; the runtime behavior stays silent.

Throws become rejections:

```js
Promise.resolve("ok")
  .then(v => { throw new Error("oops"); })
  .then(v => console.log("skipped"))
  .catch(e => console.log(e.message)); // "oops"
```

The second .then() has only a fulfillment handler, so the rejection passes through it. .catch(fn) is .then(undefined, fn), which means it attaches a rejection handler to the promise produced by the previous link.

Placement changes what that handler can see. A .catch() at the end handles failures from every previous link. A .catch() in the middle can recover and send a normal value downstream.

```js
Promise.reject(new Error("fail"))
  .catch(e => "recovered")
  .then(v => console.log(v)); // "recovered"
```

The catch handler returns a string, so the promise it creates fulfills with that string and the following .then() receives it. Re-throwing from the catch handler would keep the chain on the rejection path.

.finally(fn) runs for both outcomes. It receives no value, and it passes the original value or reason through unless it throws or returns a rejected promise.

```js
Promise.resolve(42)
  .finally(() => console.log("cleanup"))
  .then(v => console.log(v)); // "cleanup" then 42
```

Use it for cleanup that should observe completion without changing the result: close a handle, clear a timer, release a lock, or decrement an in-flight counter.

The two-argument form of .then() has a narrower attachment point. In .then(onFulfilled, onRejected), the rejection handler handles rejection from the previous promise. It does not handle a throw from onFulfilled in the same call. A chained .catch() handles the promise returned by .then(), so it catches throws from the fulfillment handler. End-of-chain .catch() is usually the clearer shape.

Empty .then() calls create pass-through promises. promise.then() follows the original promise and adds allocation. These calls often appear after refactors; delete them when they carry no handler.

One more chaining detail pays off during debugging: handlers attach to the promise on their left. In a.then(f).catch(g), g handles rejections from a and throws from f, because it attaches to the promise returned by then. In a.then(f, g), g handles only rejection from a. The returned promise from that call receives whatever f or g produces. Same API. Different control-flow graph.
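The two attachment points can be compared directly. In this sketch, fail is a stand-in fulfillment handler that always throws; the log strings are labels for the demonstration.

```javascript
const log = [];
const fail = () => { throw new Error("from f"); };

// Two-argument form: g is attached to the original promise only,
// so the throw from f skips it and reaches the outer catch.
Promise.resolve("a")
  .then(fail, e => log.push("two-arg g: " + e.message))
  .catch(e => log.push("outer catch: " + e.message));

// Chained form: g is attached to the promise returned by then(f).
Promise.resolve("a")
  .then(fail)
  .catch(e => log.push("chained g: " + e.message));

setImmediate(() => console.log(log));
// [ 'outer catch: from f', 'chained g: from f' ]
```

The "two-arg g" label never appears, because the original promise fulfilled and only its rejection would have reached that handler.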

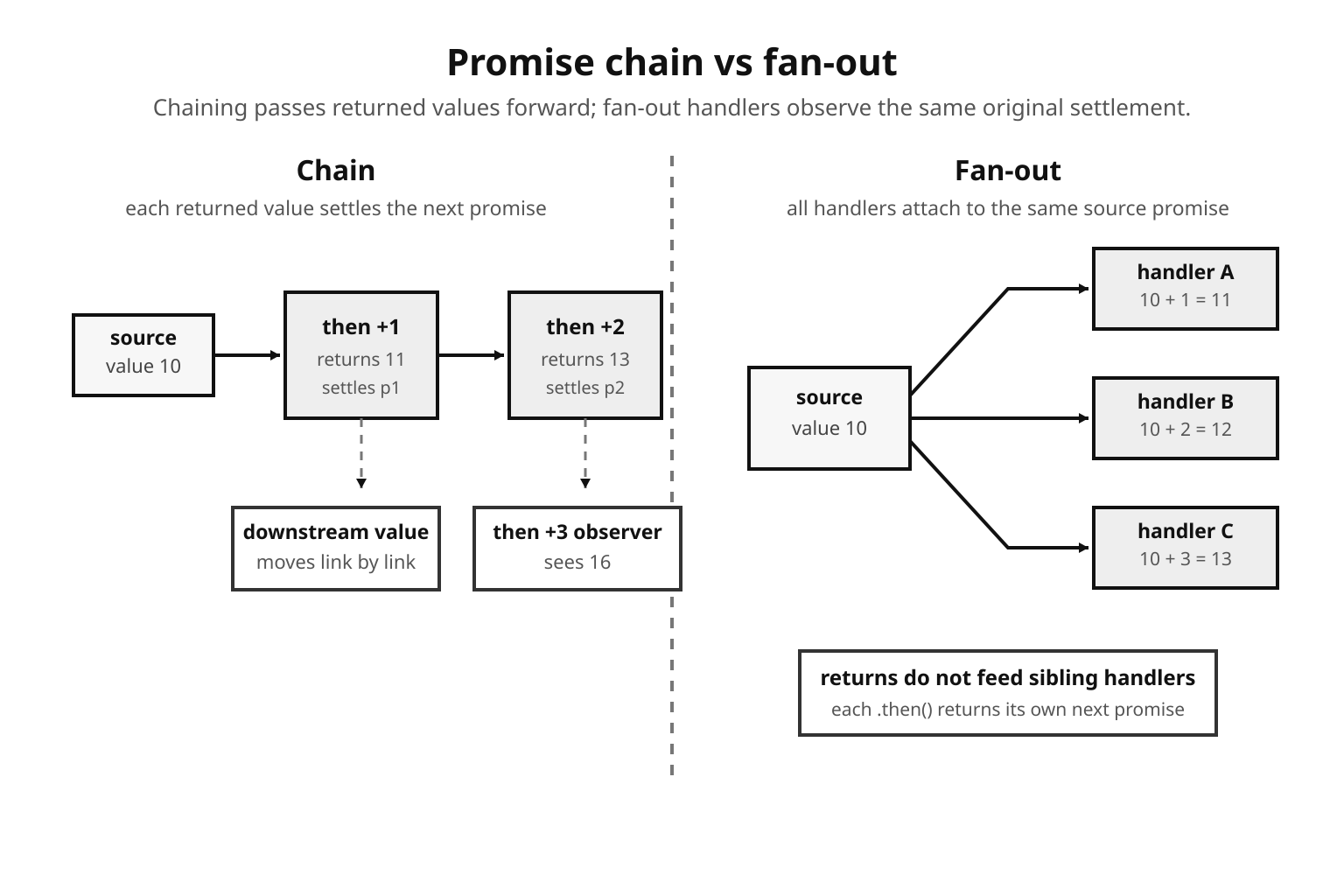

Multiple handlers on the same promise are not a chain. They all observe the same settlement value, and each .then() call returns its own next promise.

```js
const p = Promise.resolve(10);

p.then(v => console.log(v + 1));
p.then(v => console.log(v + 2));
p.then(v => console.log(v + 3));
```

The output is 11, 12, 13. All three reactions attach to p, so they run in registration order when p settles. The return value from the first handler feeds only the promise returned by the first .then() call. It has no effect on the second or third handler because those handlers also attach to p.

Now compare a chain:

```js
Promise.resolve(10)
  .then(v => v + 1)
  .then(v => v + 2)
  .then(v => console.log(v + 3));
```

The output is 16. Each handler attaches to the promise returned by the previous .then(), so the value moves link by link. That difference explains many confusing refactors. Splitting a chain into separate .then() calls on the original promise changes data flow while the visible method calls still look similar.

Figure 2 — A chain passes each returned value to the next link, while multiple handlers attached to the same promise observe the same original settlement independently.

The same rule applies to errors. Three .catch() calls attached to one rejected promise all see the same rejection. Three catch handlers chained together form a recovery sequence, where each handler's return or throw decides the next link. Handler placement is the control-flow graph.
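The error-side version of that comparison might look like the sketch below. The handler labels A through E are arbitrary; the point is which handlers see the original reason and which are bypassed after recovery.

```javascript
const log = [];
const failed = Promise.reject(new Error("boom"));

// Fan-out: each handler is attached to `failed` and sees the same reason.
failed.catch(e => log.push("A saw " + e.message));
failed.catch(e => log.push("B saw " + e.message));

// Chain: the first handler recovers, so the next catch is bypassed.
failed
  .catch(e => { log.push("C saw " + e.message); return "recovered"; })
  .catch(() => log.push("D never runs"))
  .then(v => log.push("E got " + v));

setImmediate(() => console.log(log));
// [ 'A saw boom', 'B saw boom', 'C saw boom', 'E got recovered' ]
```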

How Promise Handlers Run

The handler never runs from inside .then(). If the promise is pending, .then() appends fulfillment and rejection reactions to the promise. If the promise is already settled, .then() creates the matching reaction job immediately. In both cases, the handler runs later from V8's microtask queue.

V8's microtask queue sits beside the event-loop machinery. It holds promise reactions and queueMicrotask() callbacks. Libuv timers, I/O callbacks, and setImmediate() callbacks live outside it. process.nextTick() is separate again: it is a Node-managed queue, rather than a V8 microtask queue.

The ECMAScript job for a promise reaction is PromiseReactionJob. Its observable work is small: call the correct handler with the settled value, inspect the handler result or thrown exception, and settle the promise returned by the .then() call. The reaction carries the handler, the reaction type, and the promise capability for the next promise in the chain.

Missing handlers have defined pass-through behavior. For fulfilled promises, a missing fulfillment handler behaves as identity and passes the same value to the next promise. For rejected promises, a missing rejection handler behaves as a thrower and passes the same reason to the next promise's reject capability. Engines do not have to allocate user-visible functions for those paths; only the downstream behavior is observable.

The specification models pending promises as holding fulfillment and rejection reactions. Engines can store those reactions however they choose, but the stable behavior is registration order and one-shot dispatch. Once a promise has settled, later .then() calls still work by enqueueing new reaction jobs against the already-settled result.

When V8 begins a microtask checkpoint, it runs microtasks until the queue is empty. Promise jobs created during that drain join the same queue. queueMicrotask() jobs created during that drain do the same. Native callbacks, timers, and I/O wait outside the active drain.

Figure 3 — At a CommonJS checkpoint, Node drains nextTick work and V8 microtasks before event-loop callbacks such as timers get their turn; work queued during an active drain stays with that drain.

```js
console.log("1");

setTimeout(() => console.log("2"), 0);

Promise.resolve().then(() => console.log("3"));

process.nextTick(() => console.log("4"));

console.log("5");
```

In a CommonJS main script, the output is 1, 5, 4, 3, 2.

The synchronous lines run first. setTimeout() registers a libuv timer. Promise.resolve().then() enqueues a PromiseReactionJob in V8. process.nextTick() appends to Node's nextTick queue. The last console.log() runs before any queued callback.

When the stack empties, Node enters a checkpoint. In this CommonJS context, it drains nextTick first, printing 4. Then it drains V8 microtasks, printing 3. Only after those queues are empty does the event loop advance to expired timers and print 2.

Different active contexts produce different output. ES module top-level evaluation already runs through the microtask machinery, so the same scheduling shape prints the promise line before the nextTick line. Code resumed after await has the same active-microtask caveat. The table near the start of the chapter is the short version; the nested examples below show where the split lands.

The native callback return point still controls ordering. When a libuv completion enters JavaScript and the callback returns, Node coordinates its nextTick queue, V8 microtask checkpoint, and rejection bookkeeping before moving back to more event-loop work. Exact internal function names are version-specific; the portable claim is the observable checkpoint behavior.
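A timer callback is a convenient native entry point for observing that checkpoint. This is a minimal sketch; the second timer exists only to print the log after the first callback's queues have drained.

```javascript
const log = [];

setTimeout(() => {
  // Both callbacks are queued while the timer callback is still on the stack.
  Promise.resolve().then(() => log.push("promise"));
  process.nextTick(() => log.push("tick"));
  log.push("timer body");
}, 0);

// A later timer prints the result once the first callback's checkpoint is done.
setTimeout(() => console.log(log), 20);
// [ 'timer body', 'tick', 'promise' ]
```

When the timer callback returns, Node drains nextTick before the V8 microtask queue, matching the CommonJS main-script order.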

The key nuance is that nextTick has priority at CommonJS and native-callback checkpoints, but it does not interrupt an active V8 microtask drain. If a .then() handler calls process.nextTick(), that nextTick callback waits until V8 finishes the current microtask queue. Then Node gets control again and can drain nextTick. The priority rule applies between drains.

That produces a slightly non-obvious order when queues create each other:

```js
Promise.resolve().then(() => {
  process.nextTick(() => console.log("tick"));
  queueMicrotask(() => console.log("micro"));
});
```

The queueMicrotask() callback runs before the process.nextTick() callback created inside the promise handler. V8 is already draining microtasks, so the new microtask joins the active drain. The nextTick callback waits for control to return to Node's checkpoint loop. After V8 finishes, Node sees the nextTick queue and drains it.

Reverse the creation site and the order changes:

```js
process.nextTick(() => {
  Promise.resolve().then(() => console.log("promise"));
  process.nextTick(() => console.log("tick"));
});
```

The nested nextTick runs before the promise handler. Node is already draining the nextTick queue, and new nextTick entries stay in that queue until it empties. After that, Node runs V8 microtasks. Same checkpoint, different active queue.

Use that ordering knowledge sparingly. Code that depends on nested nextTick-versus-promise behavior is hard to review. Its main value is diagnostic: when a log line appears one turn earlier than expected, active-queue rules usually explain it.

queueMicrotask(fn) schedules directly into V8's microtask queue without allocating a promise for the scheduling operation. In Node v24, process.nextTick() is documented as Legacy, and queueMicrotask() is the better default for most userland deferral that does not need Node-specific nextTick priority.

```js
process.nextTick(() => console.log("nextTick"));

queueMicrotask(() => console.log("microtask"));

Promise.resolve().then(() => console.log("promise"));
```

In a CommonJS main script, the output is nextTick, microtask, promise. queueMicrotask() and promise reactions share FIFO ordering inside V8's queue. The nextTick queue runs ahead of both at that checkpoint.

Starvation

Exhaustive draining has a cost. A self-replenishing microtask queue prevents the event loop from reaching timers, poll, check, and close callbacks.

```js
let count = 0;

function flood() {
  if (++count === 100_000) return;
  Promise.resolve().then(flood);
}

flood();
```

Every handler queues another handler until the guard stops it. While that chain is active, the checkpoint keeps running JavaScript. Timers stay pending. I/O completions sit behind the checkpoint. The process consumes CPU and makes no event-loop progress. Remove the guard, and the loop can starve the process indefinitely.

process.nextTick() can create the same failure mode, and historically caused more production pain because people used it as a yield primitive. It yields from the current stack, but it stays ahead of event-loop phases.

Do not rely on a runtime queue-depth guard to save recursive promise or nextTick scheduling. Bounded recursion is fine. Unbounded recursion starves the loop.

Use setImmediate() when a large CPU-light batch needs to let the event loop return to phase work between chunks. setImmediate() runs in the check phase, and an immediate queued from inside an immediate runs on a later event-loop iteration. Ready timers and I/O still depend on phase timing, but microtask recursion gives them no opportunity until the queue empties.

The tradeoff is overhead versus latency. Microtasks are cheap and ordered tightly. setImmediate() costs more and gives other work a scheduling opportunity. For request-serving code, bounded latency for unrelated sockets is usually more important than finishing one batch through a microtask chain.

Batch size is the control value. Process a few hundred items, schedule the next chunk with setImmediate(), and keep per-request latency bounded. Process the entire batch through promise recursion, and every socket that became readable during the batch waits behind your microtask chain. The right number depends on item cost and latency budget, so measure it under load rather than copying a magic constant.
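A chunked loop along those lines could be sketched as follows. processInChunks is a hypothetical helper, and the default chunk size of 250 is a placeholder to tune under load, not a recommendation.

```javascript
// Hypothetical helper: applies `handle` to every item and yields to the
// event loop between chunks. The chunk size is a placeholder value.
function processInChunks(items, handle, chunkSize = 250) {
  return new Promise((resolve, reject) => {
    let index = 0;

    function runChunk() {
      try {
        const end = Math.min(index + chunkSize, items.length);
        for (; index < end; index++) handle(items[index]);
      } catch (err) {
        reject(err); // a throwing item rejects the whole batch
        return;
      }
      if (index < items.length) {
        setImmediate(runChunk); // timers and I/O get a turn before the next chunk
      } else {
        resolve(index); // number of items processed
      }
    }

    runChunk();
  });
}

let sum = 0;
const items = Array.from({ length: 1000 }, (_, i) => i);

processInChunks(items, n => { sum += n; })
  .then(count => console.log(count, sum)); // 1000 499500
```

Each chunk still runs to completion on the stack, so the per-chunk cost is the latency other callbacks can see; shrinking the chunk trades throughput for responsiveness.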

Starting Work vs Observing Work

API design gets cleaner when "start work" and "observe result" stay separate. Promise construction or function entry starts work. .then() observes completion. Those are different acts, and they can happen at different times.

```js
const p = readConfig();

doSomethingSync();

p.then(config => applyConfig(config));
```

readConfig() decides when the file read, cache lookup, or network call begins. The later .then() only registers a reaction. If readConfig() has already fulfilled by then, the reaction still goes through the microtask queue. If it is pending, the reaction waits in the promise's internal list until settlement.

That difference shapes lazy APIs. A promise usually represents work that has already started. A function returning a promise can be lazy because work starts when the function is called. Passing a promise around passes an in-flight or already-settled operation. Passing a function around passes the ability to start it later.

```js
const eager = fetchUser(id);

const lazy = () => fetchUser(id);
```

eager begins immediately. lazy begins when called. The scheduling rules after settlement stay the same, but resource timing changes: sockets open earlier, timers start earlier, and unhandled rejection tracking can begin before the consumer has attached handlers. Library APIs often accept functions for retry loops, concurrency limiters, and deferred batches because they need to control when each promise-producing operation starts.

Promise handlers also run after synchronous cleanup in the current turn. That can be useful, and it can be surprising.

```js
const p = Promise.resolve();

let closed = false;

p.then(() => console.log(closed));

closed = true;
```

The handler prints true. The assignment runs before the microtask checkpoint. If the handler needed the earlier value, capture it in a local before scheduling the handler. Microtasks preserve ordering; they do not freeze variables.

One small naming convention helps during reviews: name promise-returning functions with verbs, and name promise values as values in progress. loadUser() starts work. userPromise is an observable result from work already started. The names cannot enforce timing, but they expose the difference where mistakes happen. A retry helper should receive () => loadUser() because it needs a fresh attempt each time. A renderer can receive userPromise because it only needs to observe the result.
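A retry helper makes the reason for passing a function concrete. Both retry and this version of loadUser are hypothetical stand-ins written for the sketch; the loader fails twice and then succeeds so the attempt count is visible.

```javascript
// Hypothetical retry helper: `start` is a function, so every attempt
// begins fresh work instead of re-observing one settled promise.
async function retry(start, attempts = 3) {
  let lastError;
  for (let i = 0; i < attempts; i++) {
    try {
      return await start();
    } catch (err) {
      lastError = err; // remember the failure and try again
    }
  }
  throw lastError;
}

// Stand-in for a real loader: fails twice, then succeeds.
let calls = 0;
function loadUser() {
  calls += 1;
  return calls < 3
    ? Promise.reject(new Error("attempt " + calls + " failed"))
    : Promise.resolve({ name: "ada" });
}

retry(() => loadUser()).then(user => console.log(user.name, calls)); // ada 3
```

Passing an already-created promise instead would give the helper nothing to restart: awaiting the same rejected promise three times yields the same rejection three times.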

The same start-versus-observe difference is important during startup, retries, cache warmups, and request fan-out, where calling a promise-returning function can open sockets, start timers, or begin rejection tracking before a consumer is ready.

Errors and Rejections

Rejections propagate until a rejection handler handles them.

```js
Promise.reject(new Error("bad"))
  .then(v => console.log("skipped"))
  .then(v => console.log("also skipped"))
  .catch(e => console.log(e.message)); // "bad"
```

Fulfillment-only handlers are bypassed. The rejection value moves through pass-through reactions until .catch() receives it. Inside .catch(), returning a normal value recovers, throwing keeps the chain rejected, and returning a rejected promise keeps the chain rejected.

Logging and re-throwing is the usual shape when one layer wants observability and another layer owns the response:

```js
someAsyncOp()
  .catch(e => { console.error("logged:", e); throw e; })
  .then(v => processResult(v))
  .catch(e => sendErrorResponse(e));
```

The first catch logs and throws. The second catch sees the same failure. If the first catch returned normally, the chain would continue with that returned value, often undefined.

throw inside a handler and return Promise.reject(error) both reject the promise returned by that handler's .then() call. Prefer throw inside synchronous handler bodies because the intent is direct and the stack is cleaner. Promise.reject() fits expression-heavy code or helper functions that already return promises.

```js
fetchUser(id).then(user => {
  if (!user.active) throw new Error("inactive");
  return user;
});
```

That has the same behavior as returning Promise.reject(new Error("inactive")), with shorter syntax.

Unhandled Rejections

Node reports rejected promises that lack a rejection handler by the time its rejection check runs. In Node v24, the default --unhandled-rejections mode is throw: Node emits unhandledRejection, and if no listener is registered for that event, the rejection is raised as an uncaught exception. The flag also accepts strict, warn, warn-with-error-code, and none, but production code should treat unhandled rejections as process-level bugs.

The detection path starts in V8. When a promise rejects without a handler, V8 calls Node's promise rejection hook. Node records the promise and delays reporting until after the current turn has had a chance to attach a handler. If a handler appears in time, Node records the handled transition instead of reporting the promise as unhandled. If the promise still lacks a handler, unhandledRejection fires on process, and in the default mode a rejection with no unhandledRejection listener becomes a thrown exception.

The delay is small, but it prevents valid patterns from being reported too early. A promise can reject, then receive a .catch() later in the same synchronous turn. A chain can also attach rejection handling through a later .then() call before Node finishes the checkpoint. Node gives that code a chance to connect the handler before it reports the promise.

Two process events describe the lifecycle. unhandledRejection fires when Node decides the promise lacked a handler in time. rejectionHandled fires later if a handler appears after reporting. The second event is diagnostic cleanup, not a rewind of the first event. In throw mode, the process may already be headed through uncaught-exception handling.

That delay is observable with process-level diagnostic handlers:

```js
process.on("unhandledRejection", e => {
  console.log("unhandled:", e.message);
});

process.on("rejectionHandled", () => console.log("handled later"));

const p = Promise.reject(new Error("oops"));

setTimeout(() => {
  p.catch(e => console.log("caught:", e.message));
}, 0);
```

The setTimeout() callback runs after the microtask checkpoint and timer scheduling point. Node can report the rejection before the delayed catch attaches. Later, the catch causes a rejectionHandled event, but the original report already happened. Without those process handlers, the same delayed-catch shape exits under Node v24's default throw mode before the timer-attached catch runs.

Attach rejection handlers in the same chain you create. End a floating promise with .catch() when it is intentionally detached. With async functions, use try/catch around awaited work or return the promise to a caller that will handle it.
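The two async-function options can be sketched as follows. fetchSettings is a hypothetical stand-in for any promise-returning operation, and the fallback value is an assumption made for the example.

```javascript
// Hypothetical helper standing in for any promise-returning operation.
async function fetchSettings(shouldFail) {
  if (shouldFail) throw new Error("settings unavailable");
  return { theme: "dark" };
}

// Option 1: handle the rejection where the work is awaited.
async function settingsOrDefault(shouldFail) {
  try {
    return await fetchSettings(shouldFail);
  } catch (err) {
    console.warn("using defaults:", err.message);
    return { theme: "light" };
  }
}

// Option 2: return the promise so the caller owns the rejection.
function settingsForCaller(shouldFail) {
  return fetchSettings(shouldFail);
}

settingsOrDefault(true).then((s) => console.log("theme:", s.theme));
settingsForCaller(true).catch((err) => console.log("caller saw:", err.message));
```

Either way, every promise created here ends up with a rejection handler in the chain that created it or in the caller's chain.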

promise.catch(() => {}) counts as handling. It also hides the failure. Sometimes that is the intended behavior for best-effort telemetry or cache writes. Put that decision in code. Log at least enough context for later debugging unless the failure is intentionally silent.
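One way to put that decision in code is sketched below. writeCache and the dropped array are hypothetical; a real application would call its cache client and its logger instead.

```javascript
// Hypothetical best-effort operation: a cache write that always fails here.
function writeCache(key, value) {
  return Promise.reject(new Error(`cache offline while writing ${key}`));
}

// Stand-in for a real logger; records what was dropped and why.
const dropped = [];

function rememberInCache(key, value) {
  // Detached on purpose: the caller does not wait for the cache write.
  writeCache(key, value).catch((err) => {
    // Swallow the failure, but keep enough context to debug it later.
    dropped.push({ op: "cache-write", key, message: err.message });
  });
}

rememberInCache("user:42", { name: "Ada" });
```

The catch still hides the failure from callers, but the reason and the key survive for later debugging.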

Library code should avoid installing global process.on("unhandledRejection") policy. Applications own that decision. A library can return promises, document rejection reasons, and attach internal catches for detached background work it starts itself. Process-level rejection policy belongs at the entry point, next to process-level signal and exit handling.
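As a rough illustration of entry-point ownership, an application might install its policy in one place. The function name installRejectionPolicy and the choice to set a non-zero exit code are assumptions made for this sketch, not a recommendation from Node.

```javascript
// Sketch for an application entry point. Libraries should not call this;
// the application decides what an unhandled rejection means.
function installRejectionPolicy(log = console.error) {
  process.on("unhandledRejection", (reason) => {
    log("unhandled rejection:", reason);
    // One possible policy: keep running, but make the eventual exit non-zero.
    process.exitCode = 1;
  });
}

installRejectionPolicy();
```

Because a listener is now registered, the default throw mode no longer raises an uncaught exception for these rejections, so the listener itself has to carry the policy.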

util.promisify() and Callback APIs

Node still has many callback-shaped APIs, and many packages expose only error-first callbacks. util.promisify(fn) wraps one of those functions and returns a promise-returning function.

const { readFile } = require("node:fs");
const { promisify } = require("node:util");

// Wrap the callback-style readFile in a promise-returning function.
const readFileAsync = promisify(readFile);

readFileAsync(__filename, "utf8").then(text => {
  console.log(text.includes("readFileAsync"));
});

The wrapper calls the original function with your arguments and appends a generated callback. If that callback receives a truthy err, it rejects the promise. Otherwise, it resolves with the success value.
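The mechanism can be approximated in a few lines. This is a simplified model, not Node's implementation: naivePromisify and addLater are hypothetical names, and the sketch ignores util.promisify.custom, multi-value callbacks, and argument validation.

```javascript
// Simplified model of a promisify-style wrapper (not Node's actual code).
function naivePromisify(original) {
  return function wrapper(...args) {
    return new Promise((resolve, reject) => {
      // Append the generated error-first callback after the caller's arguments.
      original.call(this, ...args, (err, value) => {
        if (err) reject(err);
        else resolve(value);
      });
    });
  };
}

// Hypothetical callback API used only for the demonstration.
function addLater(a, b, cb) {
  setImmediate(() => cb(null, a + b));
}

const addAsync = naivePromisify(addLater);
addAsync(2, 3).then((sum) => console.log(sum)); // prints 5
```

Each call to addAsync allocates one promise and one callback closure, which is the per-call cost of any wrapper of this shape.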

Each wrapper call allocates a promise and a callback closure. For one file read, nobody cares. In busy library paths, those allocations appear in heap profiles.

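Binding is also important. The wrapper that promisify() returns forwards its own this to the original function, so calling the wrapper as a plain function loses the receiver. Methods that depend on this need binding before wrapping, or the wrapper must be called with the right receiver.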

const { promisify } = require("node:util");

const obj = {
  value: 7,
  read(cb) { cb(null, this.value); }
};

// Bind first so the method keeps its receiver after wrapping.
const readAsync = promisify(obj.read.bind(obj));

Core functions usually avoid that problem because they take all state through arguments or internal bindings. User-space classes often rely on this. A promisified unbound method can fail before it reaches async work.
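To make the unbound failure concrete, the sketch below uses a hypothetical Counter class. Class bodies always run in strict mode, so a lost receiver leaves this undefined instead of silently falling back to the global object.

```javascript
const { promisify } = require("node:util");

// Hypothetical user-space class whose method depends on `this`.
class Counter {
  constructor() { this.value = 7; }
  read(cb) { cb(null, this.value); }
}

const counter = new Counter();

// Unbound: the wrapper is called with no receiver, so read() throws
// and the returned promise rejects with a TypeError.
const unbound = promisify(counter.read);
unbound().catch((err) => console.log("unbound failed:", err.name));

// The wrapper forwards its own `this`, so supplying a receiver works.
unbound.call(counter).then((v) => console.log("call:", v));

// Binding before wrapping also works.
const bound = promisify(counter.read.bind(counter));
bound().then((v) => console.log("bound:", v));
```

The failure surfaces as a rejection, not a synchronous throw, so it is easy to miss when the returned promise has no handler.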

Some Node APIs pass multiple success values to their callbacks. The fs.read() callback receives (err, bytesRead, buffer). Node core uses internal metadata so promisified versions can resolve with an object such as { bytesRead, buffer } instead of dropping everything after the first success value. Your own APIs can define util.promisify.custom for the same reason.

const { promisify } = require("node:util");

function myFn(cb) { cb(null, "a", "b"); }

// promisify() returns this function instead of generating a wrapper.
myFn[promisify.custom] = () => {
  return Promise.resolve({ first: "a", second: "b" });
};

promisify(myFn)().then(console.log);

Prefer promise-native Node APIs when they exist. require("node:fs/promises") avoids a userland util.promisify() wrapper and exposes promise semantics directly. The public contract is cleaner, and the runtime does not need to route your code through a generated callback closure.

util.promisify() remains useful for third-party callback APIs and old internal modules. It is an adapter, so keep it at the boundary between callback code and promise code. Once data is in promise form, keep the rest of the code in one async style.

The reverse direction deserves the same treatment. If a callback-based public API must remain for compatibility, keep the callback adapter thin and route into a promise-native implementation. Mixed internal styles duplicate error handling and make ordering harder to reason about.

util.callbackify() adapts from promises back to callbacks. It calls a promise-returning function, attaches handlers, and routes fulfillment or rejection to an error-first callback. That adds the same promise reaction scheduling point. Callback consumers that expect exact timing may observe the extra microtask turn.

callbackify() has one odd case: rejected falsy values get wrapped, because an error-first callback uses a truthy first argument to signal failure. Rejecting with null or undefined gives the callback a generated Error with code ERR_FALSY_VALUE_REJECTION, and the original value is stored on its reason property. That is another reason to reject with real errors.
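The thin-adapter shape and the falsy-rejection case can be seen together in a small sketch. findUser is a hypothetical promise-native function, and throwing null is deliberately bad practice used only to trigger the wrapping.

```javascript
const { callbackify } = require("node:util");

// Promise-native implementation (hypothetical lookup for illustration).
async function findUser(id) {
  if (id === 0) throw null; // deliberately bad: a falsy rejection reason
  return { id, name: "Ada" };
}

// Thin compatibility adapter for callers that expect error-first callbacks.
const findUserCb = callbackify(findUser);

findUserCb(1, (err, user) => {
  console.log(err, user.name); // null Ada
});

findUserCb(0, (err) => {
  // The falsy reason arrives wrapped in an Error.
  console.log(err instanceof Error, err.code, err.reason);
});
```

All logic stays in findUser; the callback version only translates the result.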

Cost Model

Every .then() allocates a promise. Every settled promise with a dependent handler schedules a PromiseReactionJob. Every handler closure can retain outer variables until the chain releases them.

Those costs only matter when they show up in a profile, but they are concrete: allocations, queued jobs, retained closures, and async-stack metadata.

Promise handlers always run asynchronously. Even Promise.resolve(42).then(fn) schedules fn in a microtask. That always-async behavior comes from the promise contract and removes the mixed sync/async callback behavior covered in the previous subchapter. Cached results and I/O results use the same observation timing: current stack first, microtasks next.
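A small sketch makes the ordering visible. The order array is only a recording device for the example.

```javascript
const order = [];

// The promise is already fulfilled, but the handler is still deferred.
Promise.resolve(42).then((value) => order.push(`then:${value}`));

// This runs first because the current stack finishes before microtasks.
order.push("sync");

setTimeout(() => console.log(order), 0); // [ 'sync', 'then:42' ]
```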

Long chains create short-lived objects. Ten .then() calls produce ten intermediate promises and ten reaction jobs. Modern V8 optimizes common promise paths, but heap churn can still become visible in code that builds huge numbers of tiny chains per second.
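The object count is easy to check directly. This sketch builds a ten-link chain and counts distinct promise objects; the links array exists only so the example can inspect them.

```javascript
const start = Promise.resolve(0);
const links = [start];

for (let i = 0; i < 10; i++) {
  // Each .then() returns a fresh promise; it never returns the receiver.
  links.push(links[links.length - 1].then((n) => n + 1));
}

// The starting promise plus ten intermediates.
const distinct = new Set(links).size;

links[10].then((n) => console.log(distinct, n)); // 11 10
```

Holding the links in an array, as this example does, is also what keeps them alive; a normal chain lets each intermediate become collectable once it is no longer referenced.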

A callback-only internal path can still allocate less in measured hot loops. Database drivers, parsers, schedulers, and transport libraries sometimes expose promises at the public API and use callbacks or internal request objects below that line. That split is an implementation choice, not a reason to leak callback control flow into application code.

Most application code should keep promises. The readability and error-channel consistency usually matter more than the allocation delta. Profile before replacing a promise chain with callback code. If promise allocation shows up in the profile, it may appear as young-generation churn, frequent minor GC, or promise-reaction frames in CPU profiles.

Memory retention is easier to miss. A chain like a.then(f1).then(f2).then(f3) creates intermediate promises that can die quickly when the next link settles. A handler closure, though, can retain whatever it captures from the outer scope. A .finally() closure that closes over a large buffer keeps that buffer reachable until the finally handler runs and the returned promise settles. A stored reference to a mid-chain promise can keep related state alive longer than expected.

The common retention bug is accidental capture:

function handle(req, big) {
  return doWork(req.id).finally(() => {
    // The closure captures `big`, not just its length.
    metrics.observe(big.length);
  });
}

The finally closure keeps big alive until the chain settles. If big is a request body buffer and the operation waits on remote I/O, memory pressure climbs with concurrency. Extract the small value you need before creating the closure.

function handle(req, big) {
  // Copy the number out first so the closure does not reference `big`.
  const bytes = big.length;
  return doWork(req.id).finally(() => {
    metrics.observe(bytes);
  });
}

The behavior is the same, but less memory stays reachable.

The debugging tradeoff is real too. Async stack traces improve rejected-chain debugging, but they can retain metadata. Keep diagnostics useful by default, then change diagnostic settings only when a measured workload points at them.

Performance work starts with shape, not syntax. A chain that serializes independent operations costs more than the promise mechanism itself. A detached promise with no handler creates failure ambiguity. A busy loop that builds millions of promises creates allocator pressure. Fix those first. Then look at whether a lower-level callback path earns its complexity.
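The serialization point is the one most often worth fixing first. In this sketch, delay is a hypothetical stand-in for independent I/O operations, and the 50 ms figures are arbitrary.

```javascript
// Hypothetical I/O stand-in: resolves with `value` after `ms` milliseconds.
const delay = (ms, value) =>
  new Promise((resolve) => setTimeout(resolve, ms, value));

// Serialized: the second operation does not start until the first finishes.
async function loadSerial() {
  const user = await delay(50, "user");
  const prefs = await delay(50, "prefs");
  return [user, prefs];
}

// Concurrent: both operations start immediately, then both are awaited.
function loadConcurrent() {
  return Promise.all([delay(50, "user"), delay(50, "prefs")]);
}

// Measure wall-clock time for one run of `fn`.
async function time(label, fn) {
  const started = performance.now();
  const result = await fn();
  console.log(label, Math.round(performance.now() - started), "ms");
  return result;
}

time("serial", loadSerial).then(() => time("concurrent", loadConcurrent));
```

Both versions allocate a similar handful of promises; the difference in elapsed time comes from the shape of the work, not from the promise machinery.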

In production code, the defaults stay simple:

- Use promise-native Node APIs instead of promisifying core APIs.

- Attach .catch() in the chain that creates detached work.

- Prefer end-of-chain .catch() over two-argument .then() for most code.

- Use throw inside handlers unless a helper already returns a promise.

- Use setImmediate() between large independent batches that must let I/O proceed.

- Watch heap profiles for retained closures around large buffers or request objects.

- Treat PromiseReactionJob hotspots as profiling data, not as a reason to preemptively rewrite clean code.
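The setImmediate() item in the list above can be sketched as a small batching helper. mapInBatches and yieldToEventLoop are hypothetical names, and the batch size is something to tune against a real workload.

```javascript
// Yield so pending timers and I/O callbacks can run before the next batch.
const yieldToEventLoop = () =>
  new Promise((resolve) => setImmediate(resolve));

// Hypothetical helper: applies `fn` to `items` in slices of `batchSize`.
async function mapInBatches(items, batchSize, fn) {
  const results = [];
  for (let i = 0; i < items.length; i += batchSize) {
    for (const item of items.slice(i, i + batchSize)) {
      results.push(fn(item));
    }
    // Between batches, let the event loop make progress.
    if (i + batchSize < items.length) await yieldToEventLoop();
  }
  return results;
}

mapInBatches([1, 2, 3, 4, 5], 2, (n) => n * n).then(console.log);
// [ 1, 4, 9, 16, 25 ]
```

Yielding with a resolved promise or queueMicrotask() would not help here, because microtasks run before the event loop gets a turn.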

Promise code is usually the right surface. The sharp parts are scheduling context, rejection ownership, and retained state; those are runtime facts, not style preferences.