Node.js Async Iterators: for await...of, Streams, and Backpressure

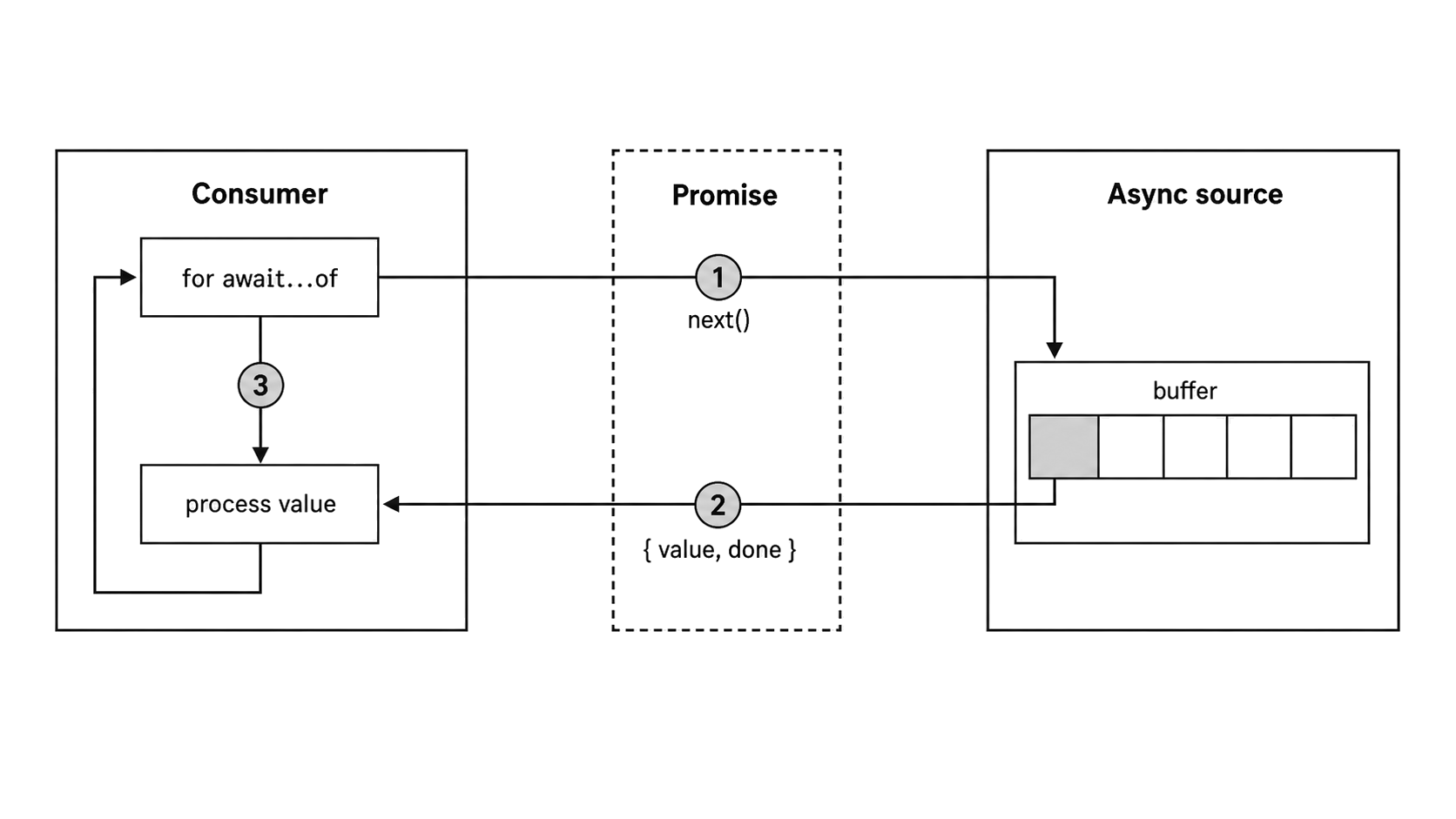

Async iteration gives Node a pull-based contract for data that arrives over time. The consumer asks for one item with next(), receives a promise for the next { value, done } result, and asks again only after it is ready. That small change in pacing is what lets the same loop shape consume file lines, stream chunks, event adapters, database cursors, and async generator transforms.

Baseline and Conventions

This chapter assumes Node v24 LTS. The APIs used here are available in that baseline: Readable[Symbol.asyncIterator]() has been non-experimental since Node 11.14, readable.iterator() exists since Node 16.3 and is stable in current LTS lines, events.on() exists since Node 12.16 and 13.6 with watermarks added across Node 20.0 and Node 20.13/22.0, and FileHandle.readLines() exists since Node 18.11. The fetch() and stream/promises.pipeline() examples use the Node v24 global and built-in module.

Code blocks are complete CommonJS examples unless the text calls them fragments. When a block is marked as a fragment, the named source, stream, emitter, or cursor already exists in the surrounding function.

The Sync Protocol You Already Know

The easiest way to understand async iteration is to start with the synchronous protocol that for...of already uses. A sync iterable exposes Symbol.iterator; calling that method returns an iterator object with a next() method. Each call to next() returns { value, done }. When done is true, the loop stops. Arrays, Maps, Sets, strings, and typed arrays all follow that same contract.

    const arr = [10, 20, 30];
    const iter = arr[Symbol.iterator]();

    console.log(iter.next()); // { value: 10, done: false }
    console.log(iter.next()); // { value: 20, done: false }
    console.log(iter.next()); // { value: 30, done: false }
    console.log(iter.next()); // { value: undefined, done: true }

The for...of loop is syntactic sugar over that sequence. It gets the iterator, calls next(), checks done, runs the body with value when there is one, and repeats until the iterator reports completion.
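As a rough sketch, the sequence that for...of performs can be written by hand. The function name sumManually is illustrative only, and this version leaves out the iterator.return() call that a real for...of makes on early exit.

```javascript
// Hand-written equivalent of:
//   let total = 0;
//   for (const value of iterable) total += value;
function sumManually(iterable) {
  const iterator = iterable[Symbol.iterator]();
  let total = 0;
  let result = iterator.next();
  while (!result.done) {
    total += result.value; // the loop body
    result = iterator.next();
  }
  return total;
}

console.log(sumManually([10, 20, 30])); // 60
console.log(sumManually(new Set([1, 2, 3]))); // 6
```

Any object that exposes Symbol.iterator works here, which is why the same helper accepts both an array and a Set.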

That synchronous shape works when the data is already available. Array elements are in memory. Map entries are in memory. As soon as the loop asks, the iterator can answer. Async sources have the same conceptual shape, but not the same timing. A database cursor returns rows one by one across a network. An HTTP response streams chunks. A file read waits on the filesystem. The value is not available when the consumer calls next(); it becomes available later, after some I/O completes. The sync protocol has nowhere to put that delay because next() must return { value, done } immediately.

The Async Iteration Protocol

The async protocol keeps the same result shape and moves only the timing edge. Symbol.asyncIterator replaces Symbol.iterator. Instead of returning { value, done } directly, next() returns a promise that resolves to { value, done }.

    const asyncIterable = {
      [Symbol.asyncIterator]() {
        let i = 0;
        return {
          next() {
            if (i < 3) {
              return Promise.resolve({ value: i++, done: false });
            }
            return Promise.resolve({ value: undefined, done: true });
          }
        };
      }
    };

That object is a complete async iterable. Its Symbol.asyncIterator method returns an iterator, and the iterator's next() method returns promises. Each promise resolves to the familiar { value, done } shape, so the consumer still sees values and completion in the same form. The difference is that the consumer must await the result before it knows whether there is another value.
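To make the consumer side concrete, here is a small sketch that wraps the same shape in a factory and consumes it two ways: by stepping next() manually and with for await...of. The names createAsyncRange, collect, and stepManually are hypothetical helpers for this illustration.

```javascript
// Same protocol shape as the object above, parameterized by a limit.
function createAsyncRange(limit) {
  return {
    [Symbol.asyncIterator]() {
      let i = 0;
      return {
        next() {
          return Promise.resolve(
            i < limit
              ? { value: i++, done: false }
              : { value: undefined, done: true }
          );
        }
      };
    }
  };
}

// Consume with the loop syntax.
async function collect(source) {
  const seen = [];
  for await (const value of source) seen.push(value);
  return seen;
}

// Consume by hand: next() hands back a promise, not a result.
async function stepManually() {
  const iterator = createAsyncRange(1)[Symbol.asyncIterator]();
  const pending = iterator.next();
  const isPromise = pending instanceof Promise;
  const first = await pending;
  const second = await iterator.next();
  return { isPromise, first, second };
}

collect(createAsyncRange(3)).then(console.log); // [ 0, 1, 2 ]
```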

Figure 7.1 — Async iteration is pull-based: the consumer asks for one promised result, processes that value, and only then requests the next one.

The earlier streams chapter used this protocol through Readable streams. Every for await...of loop over a stream calls the stream's async iterator. The stream chapter introduced the syntax; this chapter follows the protocol itself, then uses that protocol to explain cleanup paths, custom async iterables, and the Node-specific stream and event adapters.

How for await...of Desugars

for await...of is the loop form built around that protocol. It hides the calls that fetch each value, and it also owns the cleanup path when the loop exits early.

This is the minimal happy-path desugaring:

    const iterator = source[Symbol.asyncIterator]();
    let result = await iterator.next();

    while (!result.done) {
      const item = result.value;
      // ... loop body ...
      result = await iterator.next();
    }

The loop gets the async iterator, calls next(), awaits the returned promise, and checks done. When done is false, it binds value to the loop variable, runs the body, and then asks for the next result. When done is true, the loop stops.

The real loop has more machinery than this fragment. It handles early cleanup through return(), accepts sync iterables through a fallback path, and turns rejected next() promises into throws. The await keyword behaves as it did in the previous subchapter on async/await: it suspends the enclosing async function, schedules the continuation as a microtask when the promise settles, and resumes from the suspension point. The result is sequential by design. The loop asks for one item, waits for it, runs the body, and only then asks for the next one. If the body takes 500ms of async work per item and there are 100 items, the loop takes at least 50 seconds.
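A slightly fuller approximation adds the cleanup path. This is a sketch under stated assumptions, not the specification algorithm: forAwaitSketch is a hypothetical helper, the body callback returns true to simulate break, and edge cases such as the sync-iterable fallback or an error thrown by return() itself are ignored.

```javascript
// Approximates: for await (const item of source) { await body(item); }
async function forAwaitSketch(source, body) {
  const iterator = source[Symbol.asyncIterator]();
  while (true) {
    // A rejection here propagates as-is; return() is not called for it.
    const result = await iterator.next();
    if (result.done) return;

    let stop;
    try {
      stop = await body(result.value);
    } catch (err) {
      // The body threw: let the iterator clean up, then rethrow.
      if (typeof iterator.return === 'function') await iterator.return();
      throw err;
    }
    if (stop === true) {
      // Simulated `break`: same cleanup path, no error.
      if (typeof iterator.return === 'function') await iterator.return();
      return;
    }
  }
}

async function demo() {
  const log = [];
  async function* letters() {
    try {
      yield 'a';
      yield 'b';
      yield 'c';
    } finally {
      log.push('cleanup');
    }
  }
  await forAwaitSketch(letters(), (item) => {
    log.push(item);
    return item === 'b'; // stop after 'b'
  });
  return log;
}

demo().then(console.log); // [ 'a', 'b', 'cleanup' ]
```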

When the Loop Body Throws

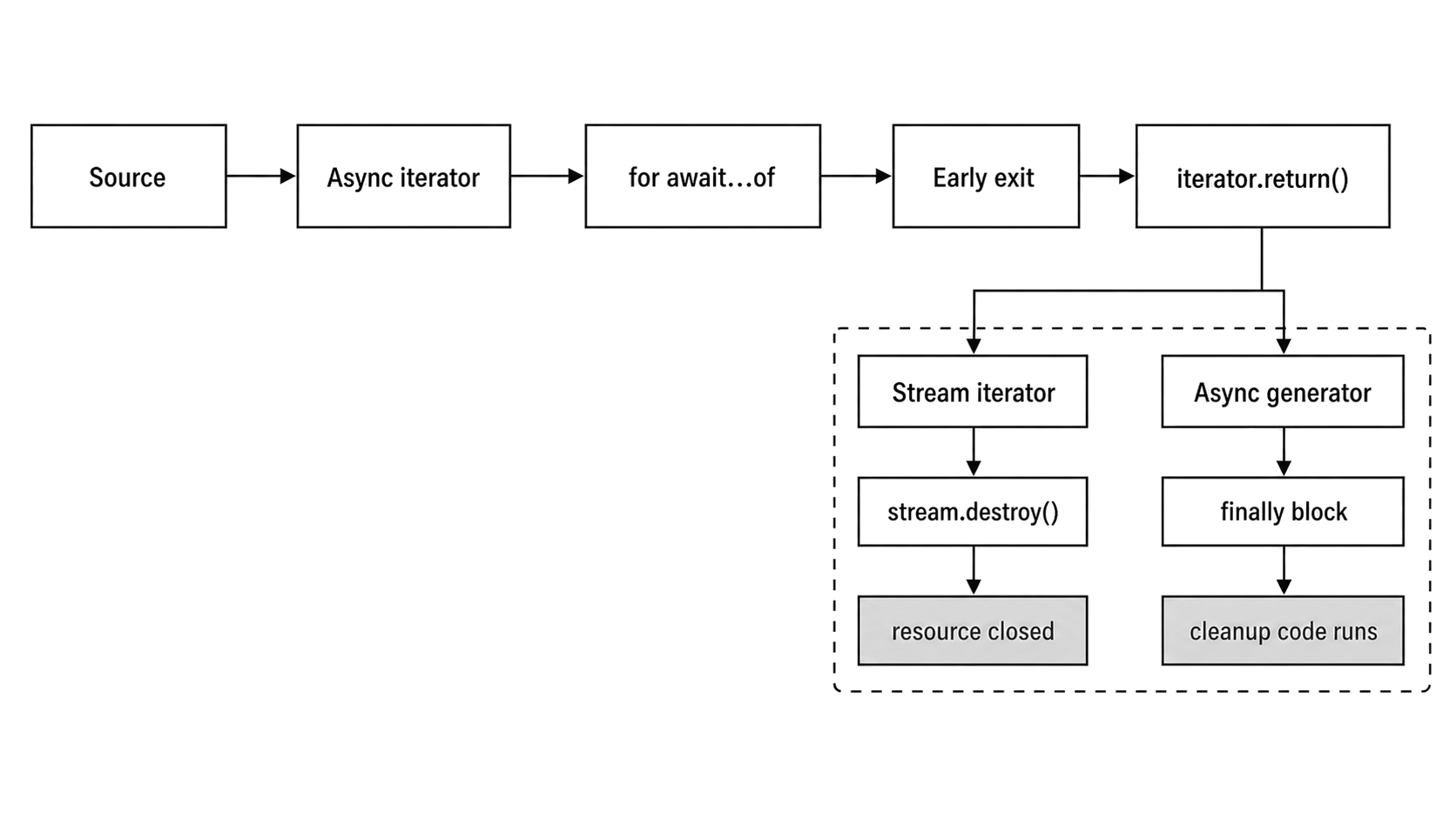

Once the loop body has a value, failures in your code are handled by the loop's cleanup machinery. If the body throws, the loop calls iterator.return() first when that method exists.

    // Fragment: `readable` is an existing Readable stream.
    for await (const chunk of readable) {
      if (chunk.length > 1024) {
        throw new Error('chunk too large');
      }
    }

That return() call gives the iterator a chance to release resources before the original error continues outward. For a readable stream, return() calls stream.destroy(). For an async generator, return() triggers any finally block in the generator body. After cleanup, the error propagates to wherever the for await...of lives, usually a surrounding try/catch.

Early break and return

Explicit early exits use the same path. A break statement, or a return from the enclosing function, causes the runtime to call iterator.return() when the iterator provides it. The iterator gets a chance to release resources before control leaves the loop.

    async function findFirst(stream) {
      for await (const chunk of stream) {
        if (chunk.includes('target')) return chunk;
      }
      return null;
    }

When return chunk executes, the loop calls return() on the iterator it obtained when the loop started. For the default Readable stream iterator, that destroys the stream, which closes the underlying file descriptor or socket through the stream's destroy path. The cleanup is protocol-driven, so the loop body does not need its own finally just to stop the stream on early exit.

Figure 7.2 — Early exit follows the iterator cleanup path. A stream iterator destroys the stream, while an async generator enters its finally block.

Errors from next()

The other failure path begins before the body receives a value. When the promise returned by next() rejects, the rejection becomes a throw from the for await...of statement. You handle it with the same try/catch you would use around any awaited operation:

    // Fragment: `asyncSource` is an existing async iterable.
    try {
      for await (const event of asyncSource) {
        console.log(event);
      }
    } catch (err) {
      console.error('Source failed:', err.message);
    }

For a readable stream, an error event is converted by the stream's async iterator implementation into a rejected next() promise. The loop throws, and the surrounding try/catch receives the error. Source failures and loop-body failures can therefore share one local error path, even though they originate from different sides of the protocol.

The cleanup guarantee has a split. In Node v24.15, a custom async iterator whose own next() promise rejects does not receive a follow-up return() call from for await...of. Cleanup for source-side failures therefore belongs in the iterator or source implementation itself, not only in return().
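The split can be observed with a small probe iterator that counts return() calls. This is an illustrative sketch; createProbe and run are hypothetical names, and the probe exists only to make the two failure paths visible.

```javascript
// An async iterator that records how often return() is invoked.
function createProbe({ failOnNext }) {
  const calls = { next: 0, return: 0 };
  const iterator = {
    [Symbol.asyncIterator]() { return this; },
    async next() {
      calls.next++;
      if (failOnNext) throw new Error('source failed');
      return { value: calls.next, done: false };
    },
    async return() {
      calls.return++;
      return { value: undefined, done: true };
    }
  };
  return { iterator, calls };
}

async function run(failOnNext) {
  const { iterator, calls } = createProbe({ failOnNext });
  try {
    for await (const value of iterator) {
      throw new Error(`body failed on ${value}`);
    }
  } catch (err) {
    return { message: err.message, returnCalls: calls.return };
  }
}

// Source-side failure: next() rejects, return() is never called.
run(true).then(console.log); // { message: 'source failed', returnCalls: 0 }
// Consumer-side failure: the body throws, return() is called once.
run(false).then(console.log); // { message: 'body failed on 1', returnCalls: 1 }
```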

Timing Implications

Because every iteration awaits a next() result, the loop still crosses an async continuation point even when the promise is already resolved. If the iterator returns Promise.resolve(value), the continuation resumes later instead of staying in the same synchronous turn. That cost is usually lost in the noise for I/O-bound work, but it can matter for CPU-bound iteration over data that was already available.

You can observe this directly:

    async function* syncData() {
      yield 1;
      yield 2;
      yield 3;
    }

    // Wrapped in an async function because CommonJS has no top-level await.
    async function main() {
      console.log('before');
      for await (const n of syncData()) {
        console.log(n);
      }
      console.log('after');
    }

    main().catch(console.error);

The output is still before, 1, 2, 3, after. The visible order is sequential, but each awaited iterator result resumes through the async function continuation machinery described in the async/await subchapter.

Add this before the loop and the extra microtasks become visible:

    let ticks = 0;
    queueMicrotask(function tick() {
      console.log('microtask');
      if (++ticks < 4) queueMicrotask(tick);
    });

On Node v24.15, this setup can log microtask, microtask, 1, microtask, microtask, 2, 3, after. The queued microtasks can run at await points; the exact interleaving depends on how many microtasks are already queued and how the iterator produces its promises. For tight loops over synchronous data, use for...of with a regular iterator when the source is not actually async. Use for await...of when the source needs asynchronous delivery, cancellation, or promise-based errors.

Async Generators

Writing an object with Symbol.asyncIterator and a manual next() method works, but it is more ceremony than most code needs. Async generators, written with async function*, package that protocol work for you. Calling an async generator function returns an AsyncGenerator object that already implements [Symbol.asyncIterator](), next(), return(), and throw(). Inside the generator body, you yield values; outside, the consumer receives them through promise-returning next() calls.

    async function* fetchPages(url) {
      for (let page = 1; ; page++) {
        const res = await fetch(`${url}?page=${page}`);
        if (!res.ok) {
          throw new Error(`HTTP ${res.status}`);
        }
        const data = await res.json();
        if (data.items.length === 0) return;
        yield data.items;
      }
    }

This generator fetches paginated API results one page at a time. It awaits the HTTP response, checks the HTTP status, parses JSON, checks whether there are more items, and yields the items array. When there are no more items, return terminates the generator. The consumer still drives the pacing: no later page is requested until the consumer asks for the next value. This is a minimal example; production code usually adds request cancellation, retry policy, and response validation around the same protocol shape.

    // Fragment: consumes the generator above.
    for await (const items of fetchPages('https://api.example.com/things')) {
      for (const item of items) {
        console.log(item.name);
      }
    }

Each iteration of the outer for await...of triggers one next() call on the generator. The generator runs until it hits a yield, produces the value, and suspends. After the consumer processes that value, the next next() call resumes the generator from the same point. It then moves forward to the next fetch, the next JSON parse, and the next yielded page.

Combining yield and await

Inside an async generator, await and yield are both suspension points, but they wait for different parties. await suspends the generator until a promise settles. yield suspends the generator until the consumer calls next() again. When you write yield await somePromise, the await resolves first, and the resolved value is then yielded to the consumer.

The difference is useful when you reason about where the generator is parked. At a yield point, it is waiting for the consumer. At an await point, it is waiting for I/O or another async operation. In a real generator, both kinds of waiting often appear in the same function.

Here's a generator that reads a file and yields each line after a transformation:

    const { open } = require('node:fs/promises');

    async function* transformLines(filePath, transform) {
      const handle = await open(filePath, 'r');
      try {
        for await (const line of handle.readLines()) {
          yield await transform(line);
        }
      } finally {
        await handle.close();
      }
    }

This generator has four suspension points. await open() pauses while the file opens. The inner for await...of awaits each line from the file handle. await transform(line) pauses while the transform function runs. Finally, yield pauses until the outer consumer asks for the next value. The finally block closes the handle if the transform throws or the consumer stops early.

yield* Delegation

Because async generators are async iterables themselves, one generator can delegate to another. yield* iterates through the delegated iterable and yields each value to the outer consumer:

    async function* allPages(urls) {
      for (const url of urls) {
        yield* fetchPages(url);
      }
    }

The consumer sees a flat sequence of yielded values, even though the outer generator is pulling from multiple sources. yield* works with any async iterable, including readable streams.
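The flattening is easier to see without network calls. The following sketch uses two hypothetical generators, countFrom and chain, in place of fetchPages and allPages.

```javascript
// Yields `count` consecutive integers starting at `start`.
async function* countFrom(start, count) {
  for (let i = 0; i < count; i++) yield start + i;
}

// Delegates to each source in turn; the consumer sees one flat sequence.
async function* chain(...sources) {
  for (const source of sources) {
    yield* source;
  }
}

async function collect(source) {
  const seen = [];
  for await (const value of source) seen.push(value);
  return seen;
}

collect(chain(countFrom(1, 2), countFrom(10, 3))).then(console.log);
// [ 1, 2, 10, 11, 12 ]
```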

Controlling Generators from Outside

The control surface behind for await...of is small. An AsyncGenerator object exposes next(value), return(value), and throw(error), along with [Symbol.asyncIterator]() so it can be consumed by the loop syntax.

next(value) resumes the generator from the last yield point. The value you pass becomes the result of the yield expression inside the generator. Most code never uses that input channel because for await...of always calls next() with no argument, but manual stepping can send values back in.

return(value) asks the generator to finish. The generator's code jumps into any finally block. If that block only performs cleanup, the returned promise resolves to { value, done: true }. If the finally block itself yields values, those values are produced before the generator reaches its final { done: true } result. This is the method for await...of calls when you break or when the loop body throws.

throw(error) resumes the generator by throwing the error at the yield point. If the generator catches it internally, execution continues. If it doesn't catch it, the generator terminates and the returned promise rejects.
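A short sketch shows all three methods driven by hand. The generator runningTotal and the log array are illustrative names; the point is only to show what each call resolves or rejects with.

```javascript
async function demo() {
  const log = [];

  async function* runningTotal() {
    let total = 0;
    try {
      while (true) {
        // The argument of the next(value) call that resumes this yield
        // becomes the value of the yield expression.
        const input = yield total;
        total += input;
      }
    } finally {
      log.push('cleanup'); // runs on return() and on an uncaught throw()
    }
  }

  const gen = runningTotal();
  log.push(await gen.next());            // runs to the first yield
  log.push(await gen.next(5));           // resumes with input = 5
  log.push(await gen.next(10));          // resumes with input = 10
  log.push(await gen.return('stopped')); // jumps into finally, then finishes

  const second = runningTotal();
  await second.next();
  try {
    await second.throw(new Error('boom')); // not caught inside the generator
  } catch (err) {
    log.push(err.message);
  }
  return log;
}

demo().then(console.log);
// [
//   { value: 0, done: false },
//   { value: 5, done: false },
//   { value: 15, done: false },
//   'cleanup',
//   { value: 'stopped', done: true },
//   'cleanup',
//   'boom'
// ]
```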

Most application code uses for await...of and never calls these methods directly. The loop handles next() and return() automatically. Understanding the methods still helps when you debug generator behavior or build higher-level abstractions. If a generator stops producing values earlier than expected, something may have called return() through a forgotten break or through an error that triggered the loop's implicit cleanup.

Cleanup with try/finally

That implicit cleanup is why resource-owning generators usually put acquisition and release in the same function. When the consumer calls return() through break, early return, or a loop-body throw, the generator's code jumps to the nearest finally block:

    const { open } = require('node:fs/promises');

    async function* readLines(filePath) {
      const handle = await open(filePath, 'r');
      try {
        for await (const line of handle.readLines()) {
          yield line;
        }
      } finally {
        await handle.close();
      }
    }

If the consumer breaks out of a for await...of loop over this generator, the return() call triggers the finally block and the file handle closes at the point of interruption. Without try/finally, breaking out of the loop would leave the file handle open until garbage collection, if it were collected at all.

The pattern is simple: open the resource, yield values inside try, and close the resource in finally. It keeps cleanup logic next to acquisition, and the async iteration protocol runs that cleanup when the consumer exits early through return().

How Readable Streams Implement Symbol.asyncIterator

Readable streams are one of the most common async iterables in Node. They gained Symbol.asyncIterator in Node 10, and support became non-experimental in Node 11.14. As of Node 24.x, the implementation lives in lib/internal/streams/readable.js. Readable.prototype[Symbol.asyncIterator]() delegates to an internal stream-to-async-iterator path, and readable.iterator(options) uses the same core path with options. Those internal names are useful when reading Node source, not for application code.

In Node 24.x, the actual iterator is built by an async generator named createAsyncIterator(). It registers a 'readable' listener, sets up eos(stream, { writable: false }, callback) to observe end, error, and premature close, and then runs a loop around stream.read().

That core loop repeatedly calls stream.read() and branches on three states: chunk available, terminal error, or normal completion. If a chunk comes back, the iterator yields it. If eos() recorded an error, the iterator throws it. If eos() recorded normal completion, it returns. Otherwise, it awaits a promise that will be resolved by the next 'readable' event or by the eos() callback.
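The loop described above can be approximated in user code. This is a simplified sketch, not Node's internal implementation: iterateReadable is a hypothetical name, it uses the public stream.finished() helper in place of the internal eos(), and it always destroys the stream on exit.

```javascript
const { Readable, finished } = require('node:stream');

async function* iterateReadable(stream) {
  let wake = () => {}; // resolves the promise the loop is parked on
  let error;           // set when the stream fails or closes prematurely
  let ended = false;   // set when the stream completes normally

  const onReadable = () => wake();
  stream.on('readable', onReadable);
  const stopWatching = finished(stream, { writable: false }, (err) => {
    if (err) error = err;
    else ended = true;
    wake();
  });

  try {
    while (true) {
      const chunk = stream.destroyed ? null : stream.read();
      if (chunk !== null) yield chunk;   // chunk available
      else if (error) throw error;       // terminal error
      else if (ended) return;            // normal completion
      else await new Promise((resolve) => { wake = resolve; });
    }
  } finally {
    // Runs on completion, on error, and on early exit via return().
    stream.off('readable', onReadable);
    stopWatching();
    stream.destroy();
  }
}

async function demo() {
  const stream = Readable.from(['a', 'b', 'c']);
  const chunks = [];
  for await (const chunk of iterateReadable(stream)) chunks.push(chunk);
  return { chunks, destroyed: stream.destroyed };
}

demo().then(console.log);
```

Application code should keep using the built-in iterator; the sketch is only meant to make the three branches and the wake-up promise visible.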

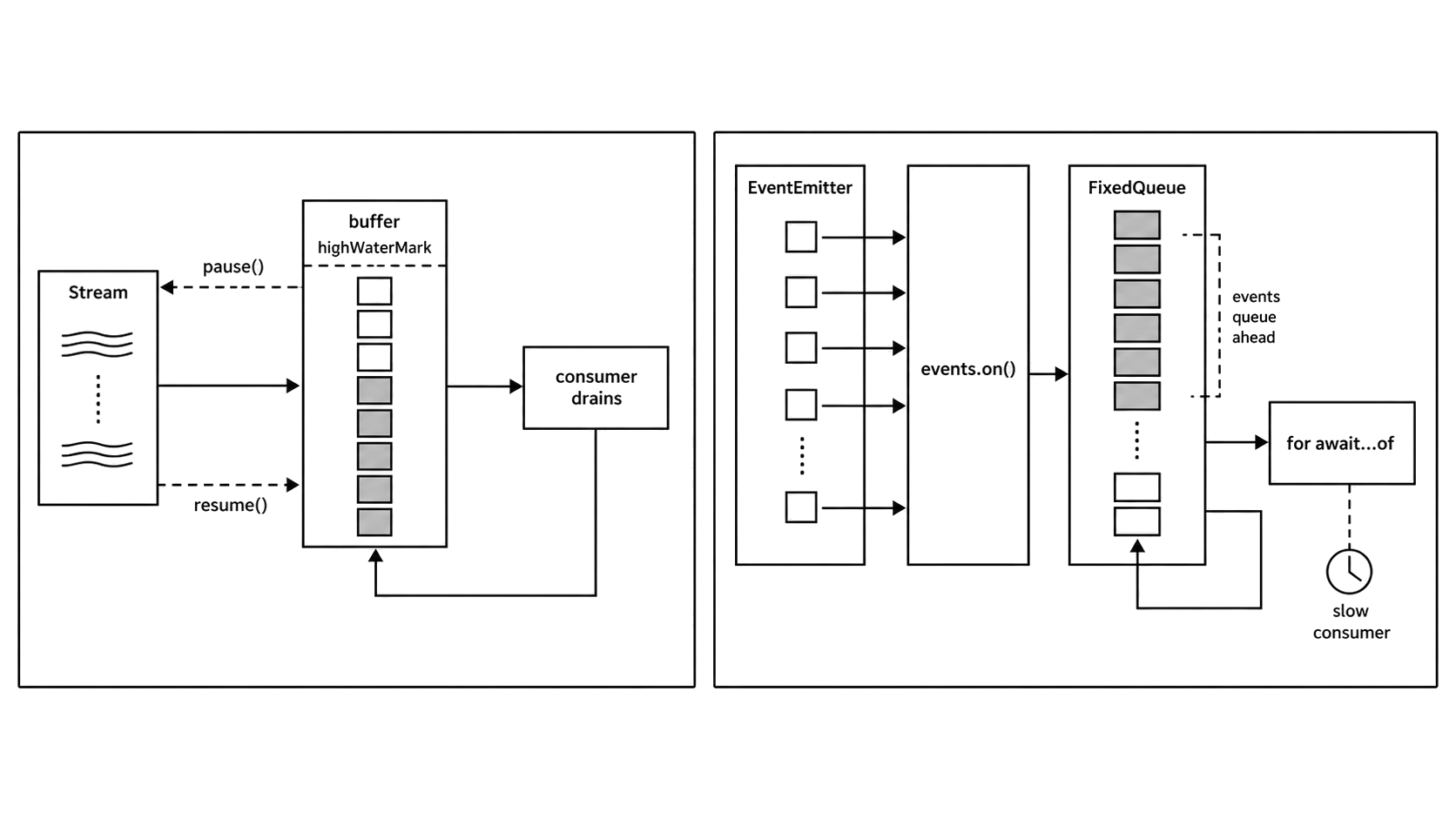

That means the stream's async iterator consumes through the readable API, not through 'data' events. The stream may buffer ahead up to its configured highWaterMark, and the iterator drains that buffer through read() as the consumer advances. A for await...of loop keeps this naturally sequential: get one chunk, run the body, then request the next chunk.

The same generator structure also explains cleanup. On normal full consumption, the loop reaches the stream's end and returns. On early exit or error after the iterator has started, the iterator's finally block destroys the stream unless readable.iterator({ destroyOnReturn: false }) was used. That option belongs to readable.iterator(), not to Symbol.asyncIterator().

Backpressure and highWaterMark

Because the iterator reads through stream.read(), it pulls one chunk at a time from the readable buffer. After the consumer processes a chunk and calls next() again, the iterator pulls another. This sequential pull pattern respects stream backpressure. The stream buffers data according to its highWaterMark threshold, and the async iterator drains that buffer one chunk per iteration. highWaterMark is a threshold, not a hard memory cap. Once the readable buffer reaches that threshold, Node stops calling the stream's internal _read() method until buffered data is consumed.

The contrast is readable.on('data', handler) in flowing mode. With 'data' events, the stream pushes as fast as it can. The handler fires synchronously for each chunk. If the handler starts async work and returns, the stream does not wait for that work; it keeps pushing. The handler must call stream.pause() and stream.resume() to control flow. With for await...of, per-chunk consumption has a defined pull point: the next chunk is requested only after the loop body finishes and the loop calls next() again.

This is why the implementation detail affects application code. The async iterator uses the 'readable' event and explicit stream.read() calls, and Node's stream docs recommend choosing one consumption style for a stream. Mixing on('data'), on('readable'), pipe(), and async iteration on the same stream can produce surprising behavior.

Resource Cleanup

The default stream iterator's return() method calls stream.destroy(). When you break out of a for await...of loop on a stream, the loop calls return(), and the stream is destroyed. File descriptors close. Network sockets terminate. Early exit has a defined cleanup path.

If you call stream[Symbol.asyncIterator]() manually and do not consume the full stream, call return() on the iterator yourself. The for await...of syntax does this automatically, but manual protocol usage requires manual cleanup. Forgetting return() on a partially consumed stream iterator can leave the stream open.

The separate readable.iterator(options) method lets you change that behavior. By default, return() destroys the stream. readable.iterator({ destroyOnReturn: false }) creates an async iterator that will not destroy the stream on early exit. Symbol.asyncIterator() itself takes no arguments, so you have to call readable.iterator() explicitly to pass options. This is useful when you want to resume reading later, but it shifts cleanup responsibility to you.
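A minimal sketch of that option, modeled on the pattern in the Node stream documentation. The function name readInTwoPasses is illustrative.

```javascript
const { Readable } = require('node:stream');

async function readInTwoPasses() {
  const readable = Readable.from((async function* () {
    yield 1;
    yield 2;
    yield 3;
  })());

  // First pass: take one chunk, then leave without destroying the stream.
  const first = [];
  for await (const chunk of readable.iterator({ destroyOnReturn: false })) {
    first.push(chunk);
    break;
  }
  const destroyedAfterBreak = readable.destroyed;

  // Second pass: the default iterator picks up where the first stopped.
  const rest = [];
  for await (const chunk of readable) {
    rest.push(chunk);
  }

  return { first, destroyedAfterBreak, rest };
}

readInTwoPasses().then(console.log);
// { first: [ 1 ], destroyedAfterBreak: false, rest: [ 2, 3 ] }
```

If the second pass never happens, nothing destroys the stream, so the caller has to call readable.destroy() explicitly.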

events.on() and events.once()

The previous subchapter on EventEmitter internals introduced events.on() and events.once() as adapters from EventEmitter dispatch to promise-based consumption. They are not streams, but they use the same async iteration contract on the consumer side.

events.on()

events.on(emitter, eventName, options) returns an AsyncIterator that yields arrays of event arguments. Every time the emitter fires the named event, the iterator produces the arguments from that event as one array:

    const { EventEmitter, on } = require('node:events');

    const ee = new EventEmitter();

    async function consumeOne() {
      for await (const [msg] of on(ee, 'message')) {
        console.log('Got:', msg);
        break;
      }
    }

    consumeOne().catch(console.error);
    ee.emit('message', 'hello');

When ee.emit('message', 'hello') fires, the loop receives ['hello']. The destructuring [msg] pulls out the first argument, leaving the loop body to handle a plain value.

Internally, the adapter registers a listener for eventName on the emitter. When the event fires, that listener pushes the arguments array into an internal FixedQueue. If a next() call is already waiting for data, the queued value immediately resolves the pending promise. If the consumer is still processing a previous event, the value stays in the queue until the consumer calls next() again.

That queue is the important difference from stream backpressure. If events fire faster than the consumer processes them, the queue grows. Node 20.0 added close, highWatermark, and lowWatermark options to events.on(). Node 20.13 and Node 22.0 added the camel-cased highWaterMark and lowWaterMark names for consistency; the older lowercase-mark names still work. The defaults are effectively unbounded: highWaterMark defaults to Number.MAX_SAFE_INTEGER, and lowWaterMark defaults to 1. In Node v24.15, lowWaterMark must be at least 1.

Those watermarks are only real source flow control for emitters that implement pause() and resume(). A plain EventEmitter has neither. Passing finite watermarks to a plain emitter does not fail when you create the iterator; it fails when buffered events cross the high watermark and Node tries to call emitter.pause(). For high-throughput event sources, use a stream, an emitter with real pause/resume methods, or an explicit bounded queue.
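The queueing behavior is easy to observe with a plain emitter and default options. In this sketch, three events fire before the consumer asks for anything, and all three are still delivered in order. The event name tick and the function name demo are arbitrary.

```javascript
const { EventEmitter, on } = require('node:events');

async function demo() {
  const ee = new EventEmitter();
  const iterator = on(ee, 'tick'); // the adapter's listener attaches here

  // The emitter runs ahead of the consumer: these are buffered.
  ee.emit('tick', 1);
  ee.emit('tick', 2);
  ee.emit('tick', 3);

  const seen = [];
  for (let i = 0; i < 3; i++) {
    const { value: [n] } = await iterator.next();
    seen.push(n);
  }

  // Manual protocol use needs manual cleanup: return() detaches listeners.
  await iterator.return();
  return { seen, listenersLeft: ee.listenerCount('tick') };
}

demo().then(console.log); // { seen: [ 1, 2, 3 ], listenersLeft: 0 }
```

Nothing in this example limits how many events can pile up between next() calls, which is the growth risk described above.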

Figure 7.3 — events.on() adapts pushed events into async iteration, but events can queue ahead when the emitter outruns the consumer; stream backpressure has a real pause path.

Error Handling in events.on()

The adapter also gives EventEmitter errors a promise-shaped path. By default, if the emitter fires an 'error' event while you are iterating some other event, the async iterator throws. The loop receives the error as a rejection from next(). Iterating the 'error' event itself treats it as the target event instead. You handle the rejection with try/catch:

    // Fragment: `stream` is an existing EventEmitter-backed stream.
    const { on } = require('node:events');

    try {
      for await (const [data] of on(stream, 'data', { close: ['end'] })) {
        console.log(data);
      }
    } catch (err) {
      console.error('Stream error:', err);
    }

You can also specify which events signal completion by passing close in the options. Without this option, the iterator runs indefinitely until the emitter errors or you break out. Passing event names to close tells the iterator when to finish:

    // Fragment: `emitter` is an existing EventEmitter.
    const { on } = require('node:events');

    const iter = on(emitter, 'data', { close: ['end', 'finish'] });

Now the iterator completes, returning { done: true }, when 'end' or 'finish' fires.

AbortSignal Support

For cancellation that does not depend on the emitter, events.on() accepts an AbortSignal:

    const { EventEmitter, on } = require('node:events');

    const ee = new EventEmitter();
    const ac = new AbortController();
    setTimeout(() => ac.abort(), 5000);

    // Wrapped in an async function because CommonJS has no top-level await.
    async function main() {
      try {
        for await (const [msg] of on(ee, 'message', { signal: ac.signal })) {
          console.log(msg);
        }
      } catch (err) {
        if (err.name !== 'AbortError') throw err;
      }
    }

    main().catch(console.error);

When the signal aborts, the iterator throws an AbortError. The loop exits, and cleanup runs. This is the standard way to add timeouts or cancellation to event-driven iteration. The iterator's internal listener is removed from the emitter on cancellation, so the emitter does not retain a dead listener.

events.once()

For a single event, async iteration is more structure than you need. events.once(emitter, eventName, options) returns a promise that resolves with an array of the event arguments the first time the event fires:

    const { once } = require('node:events');
    const { createServer } = require('node:http');

    const server = createServer();

    (async () => {
      server.listen(0);
      await once(server, 'listening');
      console.log('Server is up');
      server.close();
    })();

The promise resolves with the argument array when the target event fires. If the emitter fires an 'error' event before the target event, the promise rejects with that error. If you pass an AbortSignal and it aborts before the event fires, the promise rejects with an AbortError.
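The three outcomes can be exercised side by side. In this sketch, waitForReady and the 'ready' event name are hypothetical; the helper only maps each outcome to a plain status object.

```javascript
const { EventEmitter, once } = require('node:events');

async function waitForReady(emitter, signal) {
  try {
    const [payload] = await once(emitter, 'ready', { signal });
    return { status: 'ready', payload };
  } catch (err) {
    if (err.name === 'AbortError') return { status: 'aborted' };
    return { status: 'error', message: err.message };
  }
}

async function demo() {
  // 1. The target event fires: the promise resolves with its arguments.
  const a = new EventEmitter();
  const resolved = waitForReady(a);
  a.emit('ready', 42);

  // 2. 'error' fires first: the promise rejects with that error.
  const b = new EventEmitter();
  const failed = waitForReady(b);
  b.emit('error', new Error('boom'));

  // 3. The signal aborts first: the promise rejects with an AbortError.
  const c = new EventEmitter();
  const ac = new AbortController();
  const aborted = waitForReady(c, ac.signal);
  ac.abort();

  return Promise.all([resolved, failed, aborted]);
}

demo().then(console.log);
// [
//   { status: 'ready', payload: 42 },
//   { status: 'error', message: 'boom' },
//   { status: 'aborted' }
// ]
```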

events.once() is the promise equivalent of emitter.once(eventName, listener), but instead of a callback, you get a promise you can await. It removes the listener after the event fires or after an error, so there is no iterator cleanup path to manage.

A practical pattern is using events.once() to wait for server readiness, socket connection, or any one-time lifecycle event. It is cleaner than wrapping emitter.once() in a new Promise() manually, and it handles the 'error' event edge case automatically.

events.on() models a continuing event source; events.once() models one awaited occurrence. Use once() for one-time lifecycle events, including process exit. Use on() for ongoing events such as incoming messages or data chunks, and remember that the adapter buffers events when the consumer is slower than the emitter.

Building Custom Async Iterables

Streams, generators, and EventEmitter adapters cover many cases, but sometimes the source does not already expose the protocol. You may be wrapping a callback-based API, building a producer-consumer queue, or implementing a small data pipeline around an internal source.

Manual Implementation

The manual shape is the same protocol shown earlier: an object with a Symbol.asyncIterator method that returns an iterator with next(), and optionally return() and throw():

    function createCounter(limit, delay) {
      let count = 0;
      return {
        [Symbol.asyncIterator]() {
          return {
            async next() {
              await new Promise(r => setTimeout(r, delay));
              if (count < limit) {
                return { value: count++, done: false };
              }
              return { value: undefined, done: true };
            }
          };
        }
      };
    }

Because next() is an async function, it returns a promise automatically. Each call waits for a timeout and then returns the next count value. When the count reaches the limit, it returns done: true. A consumer can use it with for await (const n of createCounter(5, 100)).

Wrapping Callback-Based APIs

For many custom sources, an async generator is still the simpler wrapper. Suppose a database cursor reads records one at a time through a callback API:

    async function* iterateCursor(cursor) {
      try {
        while (true) {
          const rec = await new Promise((resolve, reject) => {
            cursor.next((err, row) => err ? reject(err) : resolve(row));
          });
          if (!rec) return;
          yield rec;
        }
      } finally {
        await cursor.close?.();
      }
    }

Each pass through the loop wraps one callback invocation in a promise, awaits it, and yields the row. The try/finally closes the cursor whether the consumer reads all records or breaks early. await cursor.close?.() handles cursor APIs whose close method returns a promise and does nothing if the mock or adapter has no close method.

Queue-Based Async Iterable

When the producer and consumer are not in the same function, a queue-based async iterable gives you more control. The producer pushes values in. The consumer pulls values out with for await...of. The coordination problem is concrete: the producer may push while no consumer is waiting, and the consumer may call next() while no value is available.

Here's a minimal implementation:

    function createQueue() {
      const values = [];
      const waiters = [];
      const DONE = Symbol('done');
      let ended = false;

      const result = value => value === DONE
        ? { value: undefined, done: true }
        : { value, done: false };

      function finish() {
        ended = true;
        while (waiters.length) waiters.shift()(DONE);
      }

      function close() {
        finish();
        values.length = 0;
        return Promise.resolve(result(DONE));
      }

      return {
        push(value) {
          if (ended) throw new Error('queue ended');
          if (waiters.length) waiters.shift()(value);
          else values.push(value);
        },
        end() { finish(); },
        [Symbol.asyncIterator]() {
          return {
            next() {
              if (values.length) return Promise.resolve(result(values.shift()));
              if (ended) return Promise.resolve(result(DONE));
              return new Promise(resolve => {
                waiters.push(value => resolve(result(value)));
              });
            },
            return: close
          };
        }
      };
    }

The implementation keeps two arrays. values holds items pushed by the producer that have not been consumed yet. waiters holds callbacks from promises created by next() when no data was available. DONE is a private sentinel, so null and undefined can still be valid queue values. When the producer calls push(value), it first checks for a waiting consumer. If one exists, it resolves that consumer's promise directly. Otherwise, it queues the value. When the consumer calls next(), it checks for a queued value, then for the ended state, and finally parks a callback in waiters. end() marks the producer side closed while letting already queued values drain.

The two arrays hold unmatched producer and consumer operations. When producer and consumer are matched in time, data flows directly. When they are mismatched, the faster side queues its work until the slower side catches up. The iterator also implements return(), so breaking out of for await...of clears queued values and resolves pending waiters.

This simple version still has no backpressure. If the producer calls push() 10,000 times before the consumer starts iterating, the values array holds 10,000 entries in memory. For bounded producers, such as a fixed number of database rows or a finite API response, that may be acceptable. For unbounded producers, such as a live event stream or continuous sensor feed, you need a buffering limit and a way to signal the producer to slow down.

A practical approach is to track the buffer size and return a boolean from push() indicating whether the buffer is below a threshold. The producer checks the return value and backs off if the buffer is full. writable.write() uses the same flow-control signal shape: it returns false once the internal buffer reaches or exceeds highWaterMark (covered in Chapter 3).
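One way to sketch that idea is shown below. It is an illustration under stated assumptions rather than a production queue: createBoundedQueue, drained(), and the highWaterMark parameter are hypothetical names, and the design assumes a single producer and a single consumer.

```javascript
function createBoundedQueue(highWaterMark) {
  const values = [];
  const waiters = [];
  const done = { value: undefined, done: true };
  let ended = false;
  let onDrain = null; // resolver for the one producer waiting on drained()

  function notifyDrain() {
    if (onDrain) { onDrain(); onDrain = null; }
  }
  function finish() {
    ended = true;
    while (waiters.length) waiters.shift()(done);
  }

  return {
    // Returns false once the buffer has reached the threshold.
    push(value) {
      if (ended) throw new Error('queue ended');
      if (waiters.length) {
        waiters.shift()({ value, done: false });
        return true;
      }
      values.push(value);
      return values.length < highWaterMark;
    },
    // Resolves when the consumer has emptied the buffer.
    drained() {
      if (values.length === 0) return Promise.resolve();
      return new Promise((resolve) => { onDrain = resolve; });
    },
    end() { finish(); },
    [Symbol.asyncIterator]() {
      return {
        next() {
          if (values.length) {
            const value = values.shift();
            if (values.length === 0) notifyDrain();
            return Promise.resolve({ value, done: false });
          }
          if (ended) return Promise.resolve(done);
          return new Promise((resolve) => waiters.push(resolve));
        },
        return() {
          values.length = 0;
          finish();
          notifyDrain(); // do not leave the producer parked
          return Promise.resolve(done);
        }
      };
    }
  };
}

async function demo() {
  const queue = createBoundedQueue(2);
  let pauses = 0;
  const producer = (async () => {
    for (let i = 0; i < 6; i++) {
      if (!queue.push(i)) {
        pauses++;
        await queue.drained(); // back off until the buffer empties
      }
    }
    queue.end();
  })();

  const seen = [];
  for await (const n of queue) seen.push(n);
  await producer;
  return { seen, pauses };
}

demo().then(({ seen }) => console.log(seen)); // [ 0, 1, 2, 3, 4, 5 ]
```

The producer is only advised to stop; push() still accepts values after returning false, which mirrors how writable.write() treats its return value as a signal rather than a hard limit.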

When to Use Generators vs Manual Implementation

Use async generators first when one function owns both resource acquisition and value production. They handle cleanup through try/finally, and the protocol compliance is automatic: you yield values, and the generator object handles next(), return(), and throw() for you.

Manual Symbol.asyncIterator implementations make sense when you need direct control over pending promises, cancellation, producer-consumer queues, or custom return() and throw() behavior that doesn't map cleanly to generator control flow. They are library primitives more often than application code. for await...of still awaits each next() result, so a manual iterator does not remove the async continuation cost.

The queue-based pattern above is the most common case for manual implementation. The producer and consumer are decoupled; they may live in different modules or run in different timing contexts. An async generator assumes the producer and consumer meet inside the generator body, where values are both created and yielded. A queue explicitly separates them. The producer calls push(), the consumer iterates with for await...of, and neither side needs to know how the other is scheduled.

Patterns and Practical Considerations

Pipeline with Async Generators

Once a source speaks the async iteration protocol, generators can be composed into transformation pipelines. Each generator takes an async iterable as input and yields transformed values:

async function* map(source, fn) {
  for await (const item of source) {
    yield await fn(item);
  }
}

async function* filter(source, predicate) {
  for await (const item of source) {
    if (await predicate(item)) {
      yield item;
    }
  }
}

You can chain them as filter(map(source, transform), predicate). Each stage pulls from the previous stage, transforms, and yields. Because the chain is pull-based, only one item flows through this generator chain at a time. There is no intermediate buffering between stages beyond what each async iterator implementation might buffer internally. Work starts when the final consumer asks for a value.
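The following sketch makes the pull-based behavior visible. It repeats map() and filter() so it runs on its own; countTo() and the trace array are hypothetical helpers added only to record when each value is produced and consumed.

```javascript
async function* map(source, fn) {
  for await (const item of source) {
    yield await fn(item);
  }
}

async function* filter(source, predicate) {
  for await (const item of source) {
    if (await predicate(item)) {
      yield item;
    }
  }
}

// Hypothetical source that records when each value is actually produced.
async function* countTo(limit, trace) {
  for (let i = 1; i <= limit; i++) {
    trace.push(`produce ${i}`);
    yield i;
  }
}

async function evenSquares() {
  const trace = [];
  const squares = map(countTo(4, trace), async (n) => n * n);
  const evens = filter(squares, (n) => n % 2 === 0);
  trace.push('chain built'); // no generator body has run yet

  const results = [];
  for await (const value of evens) {
    trace.push(`consume ${value}`);
    results.push(value);
  }
  return { results, trace };
}

evenSquares().then(({ results, trace }) => {
  console.log(results); // [ 4, 16 ]
  console.log(trace.join(', '));
  // chain built, produce 1, produce 2, consume 4, produce 3, produce 4, consume 16
});
```

'chain built' is recorded before any 'produce' entry, which shows that constructing the chain does no work. Each 'consume' entry appears as soon as a matching value exists, so the source is never more than one item ahead of the consumer.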

stream.pipeline() with Async Generators

The same generator stages can also participate in stream pipelines. Since Node 13.10, stream.pipeline() (covered in Chapter 3) accepts async generators as transform stages, so a pipeline can mix streams and generators:

const fs = require('node:fs');
const { pipeline } = require('node:stream/promises');

async function run() {
  await pipeline(
    fs.createReadStream('input.txt'),
    async function* (source, { signal }) {
      // Let the stream decode UTF-8 so characters split across chunks stay intact.
      source.setEncoding('utf8');
      for await (const chunk of source) {
        signal.throwIfAborted();
        yield chunk.toUpperCase();
      }
    },
    fs.createWriteStream('output.txt')
  );
}

run().catch(console.error);

input.txt and output.txt are placeholders. The pipeline connects the read stream to the async generator and then to the write stream. Calling source.setEncoding('utf8') makes the stream decode chunks itself, so a multi-byte character that straddles two chunks is not corrupted by a per-chunk toString(). Backpressure propagates through all three stages. If the write stream's buffer fills up, the pipeline waits before pulling another value from the generator, and the generator waits before pulling another chunk from the source. The generator acts as a transform stage without needing to subclass Transform or implement _transform(). For simple transformations, this is less ceremony than building a Transform stream.

The pipeline also handles errors and cleanup. If any active stage fails, the entire pipeline is torn down. The read stream gets destroyed, the write stream gets destroyed, and an async generator stage that has been entered receives return(), which triggers its finally block. A generator stage that never starts has no body or finally block to run.

The Serial Nature of for await...of

Every for await...of loop processes items one at a time. There is no built-in way to process multiple items in parallel because the protocol is sequential: call next(), await one result, process the value, then call next() again.

If you need parallel processing, add concurrency above the loop. One common pattern is to collect work into batches, then process each batch with Promise.all():

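async function processBatched(source, batchSize, fn) {
  let batch = [];
  for await (const item of source) {
    // Start the work now; a sync throw from fn becomes a rejection.
    batch.push(Promise.resolve().then(() => fn(item)));
    if (batch.length >= batchSize) {
      await Promise.all(batch);
      batch = [];
    }
  }
  // Flush the final, possibly partial batch.
  if (batch.length > 0) {
    await Promise.all(batch);
  }
}

This collects up to batchSize promises, runs them concurrently, waits for the batch to complete, and then moves to the next batch. Promise.resolve().then(() => fn(item)) gives sync throws and async rejections the same promise-shaped path. Items within a batch are concurrent, but batches are sequential, which gives you bounded concurrency instead of fully serial work or unbounded fan-out. One caveat: a task that rejects while the loop is still waiting for the next source item has no handler attached until Promise.all() runs, so Node can report it as an unhandled rejection. If fn can fail and the source is slow, attach a catch handler as each task is created, or collect items and start the work inside the Promise.all() call.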
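To check the bounded-concurrency claim, the sketch below repeats processBatched() so it runs on its own and counts how many tasks are in flight at once. countTo(), the 5 ms delay, and the inFlight counters are hypothetical instrumentation, not part of the pattern itself.

```javascript
async function processBatched(source, batchSize, fn) {
  let batch = [];
  for await (const item of source) {
    batch.push(Promise.resolve().then(() => fn(item)));
    if (batch.length >= batchSize) {
      await Promise.all(batch);
      batch = [];
    }
  }
  if (batch.length > 0) {
    await Promise.all(batch);
  }
}

// Hypothetical source: yields 1..limit without any delay.
async function* countTo(limit) {
  for (let i = 1; i <= limit; i++) {
    yield i;
  }
}

async function measureConcurrency() {
  let inFlight = 0;
  let maxInFlight = 0;
  let processed = 0;

  await processBatched(countTo(7), 3, async () => {
    inFlight += 1;
    maxInFlight = Math.max(maxInFlight, inFlight);
    await new Promise((resolve) => setTimeout(resolve, 5)); // simulated I/O
    inFlight -= 1;
    processed += 1;
  });

  return { maxInFlight, processed };
}

measureConcurrency().then(console.log);
// { maxInFlight: 3, processed: 7 }
```

Seven items with a batch size of 3 run as batches of 3, 3, and 1. The peak never exceeds the batch size, but a slow task holds up its whole batch, which is the trade-off of batching compared with a sliding window of in-flight tasks.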

Memory with events.on()

The earlier events.on() section deserves one more practical warning because it is easy to mistake the adapter for stream-like flow control. If your consumer does async work per event and the emitter fires events faster than the consumer processes them, memory grows. The default highWaterMark is Number.MAX_SAFE_INTEGER, so the queue is effectively unbounded unless you pass watermarks and the emitter supports pause() and resume().

For many use cases, such as a server emitting request events at moderate rates or a socket emitting data events with inherent I/O pacing, this is not a problem. The producer's natural rate limiting keeps the queue short. For synthetic event sources, such as timers firing at high frequency or programmatic emit() calls in a tight loop, the queue can grow sharply.
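A minimal sketch of the tight-loop case, assuming a plain EventEmitter with no pause() or resume() methods. The 'tick' event name and the bufferedBurst() helper are hypothetical.

```javascript
const { EventEmitter, on } = require('node:events');

async function bufferedBurst(count) {
  const emitter = new EventEmitter();

  // on() attaches its listener immediately, so events emitted before the
  // first next() call are stored in the adapter's internal queue.
  const events = on(emitter, 'tick');

  for (let i = 0; i < count; i++) {
    emitter.emit('tick', i); // synchronous; nothing tells this loop to slow down
  }

  const received = [];
  for await (const [value] of events) {
    received.push(value); // each item is the array of arguments passed to emit()
    if (received.length === count) break; // break calls return(), removing the listener
  }

  return {
    received: received.length,
    listenersLeft: emitter.listenerCount('tick'),
  };
}

bufferedBurst(1000).then(console.log);
// { received: 1000, listenersLeft: 0 }
```

All 1,000 events sit in memory before the consumer reads the first one. The same thing happens, less visibly, whenever the loop body awaits something slow while the emitter keeps firing.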

If that risk applies to the source you are consuming, use a readable stream with for await...of instead of events.on(). Streams have backpressure built in. Another option is a custom queue with a bounded buffer and explicit flow control, as shown in the queue section above.

One Consumer Shape

Streams, EventEmitters, database cursors, paginated APIs, file line readers, and WebSocket messages can all be consumed through for await...of once they expose Symbol.asyncIterator. Rejections become throws. Early exit calls return() when the iterator provides it. Processing is sequential unless you add explicit concurrency above the loop.

for await...of with Non-Async Iterables

There is one final edge of the protocol to account for. for await...of also works with regular sync iterables. If the object has Symbol.iterator but no Symbol.asyncIterator, the loop falls back to the sync protocol and awaits the value of each { value, done } result, as if it had been passed through Promise.resolve(). This means you can write for await (const item of [1, 2, 3]) and it works. Each value gets awaited. If any value is a promise, it resolves before the loop body runs.

There is rarely a good reason to do this for ordinary sync data. Using for await...of on a sync iterable adds a microtask hop per iteration for no benefit. It is occasionally useful when you have an array of promises and want ordered consumption:

const urls = ['https://example.com/a', 'https://example.com/b'];

async function main() {
  // Every request starts here, before the loop runs.
  const promises = urls.map(url => fetch(url));
  for await (const response of promises) {
    console.log(response.status);
  }
}

main().catch(console.error);

The loop lives inside an async function because CommonJS modules do not allow await at the top level. The fetch() calls start when urls.map() runs, before the loop begins. The loop then awaits the promises in array order. If the second request finishes first, the loop still waits for the first promise before the body sees the second response. This is ordered consumption of already-started work, with the same observation order as await promises[0]; await promises[1]. If you want to observe all results after parallel completion, use Promise.all() instead (covered in the next subchapter).
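The ordering can be reproduced without a network, as a sketch that swaps fetch() for timers. The delay() helper, the labels, and the 30 ms and 5 ms durations are hypothetical stand-ins for a slow and a fast request.

```javascript
// Resolves with `label` after `ms` milliseconds.
const delay = (ms, label) =>
  new Promise((resolve) => setTimeout(() => resolve(label), ms));

async function orderedConsumption() {
  const settled = [];  // order in which the work actually finishes
  const observed = []; // order in which the loop body sees results

  const record = (label) => {
    settled.push(label);
    return label;
  };

  // Both timers start here, before the loop begins.
  const tasks = [
    delay(30, 'slow').then(record),
    delay(5, 'fast').then(record),
  ];

  for await (const label of tasks) {
    observed.push(label);
  }
  return { settled, observed };
}

orderedConsumption().then(console.log);
// { settled: [ 'fast', 'slow' ], observed: [ 'slow', 'fast' ] }
```

The work completes in one order and is observed in another: the loop follows array order, so total time is governed by the slowest task at or before each position, not by the sum of all tasks.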

The payoff is composition. A generator can consume a stream, transform each chunk, and expose the result through the same protocol. Another generator can filter that output. stream.pipeline() can wire the stages together with backpressure and error propagation. EventEmitter still has its place when listeners need synchronous dispatch during emit(), but events.on() is only an adapter with a buffer. When the consumer needs flow control, prefer a stream, a source that can pause and resume, or a bounded async iterable that makes pressure explicit.