Error-First Callbacks

Error-first callbacks are one of Node's original single-completion async contracts. The shape is (err, value): failure in the first slot, success data after it. A filesystem request, DNS lookup, child-process completion, or older userland operation can all report completion through the same kind of JavaScript function call.

That small signature carries more than it first appears to. The callback runs on the event-loop thread for that Node.js environment when Node reaches the completion point for the operation. In the default process environment, that is the process's primary JavaScript thread. Inside a worker, it is the worker's event-loop thread. A falsy first argument means normal completion. A truthy first argument is usually an Error.

The timing is part of the contract too. Some callbacks are synchronous by design, but core async operations schedule completion after the current stack has returned. The same JavaScript shape can therefore mean either "call this function now" or "keep this function and call it when the operation finishes." Most of the complexity in callback code comes from that second meaning.

Callback APIs Under Core Async Work

Callbacks sit under much of Node's older async surface area. Callback-based filesystem work and some DNS or child-process paths cross from JavaScript into native code and back through completion functions. Timers go through libuv's timer machinery. EventEmitter listeners and many stream callbacks can be plain JavaScript dispatch. Higher-level APIs may expose promises, async iterators, or events, but many I/O paths still have callback-shaped completion points in the runtime.

Keep that split precise. Promise jobs inside V8 are promise jobs. Promise-native Node APIs often use promise request wrappers, such as FSReqPromise, instead of storing a user callback. The runtime still uses native completion callbacks for many operating-system handoffs, but the public JavaScript shape can be callback-based or promise-based.

At the JavaScript level, a callback is only a function you pass as an argument for some other code to call. There is no special syntax, keyword, or class involved. The function reference itself is ordinary; the dangerous parts are timing, argument shape, and single invocation.

const fs = require("node:fs");

fs.readFile("config.json", "utf8", (err, data) => {

if (err) throw err;

console.log(data.trim());

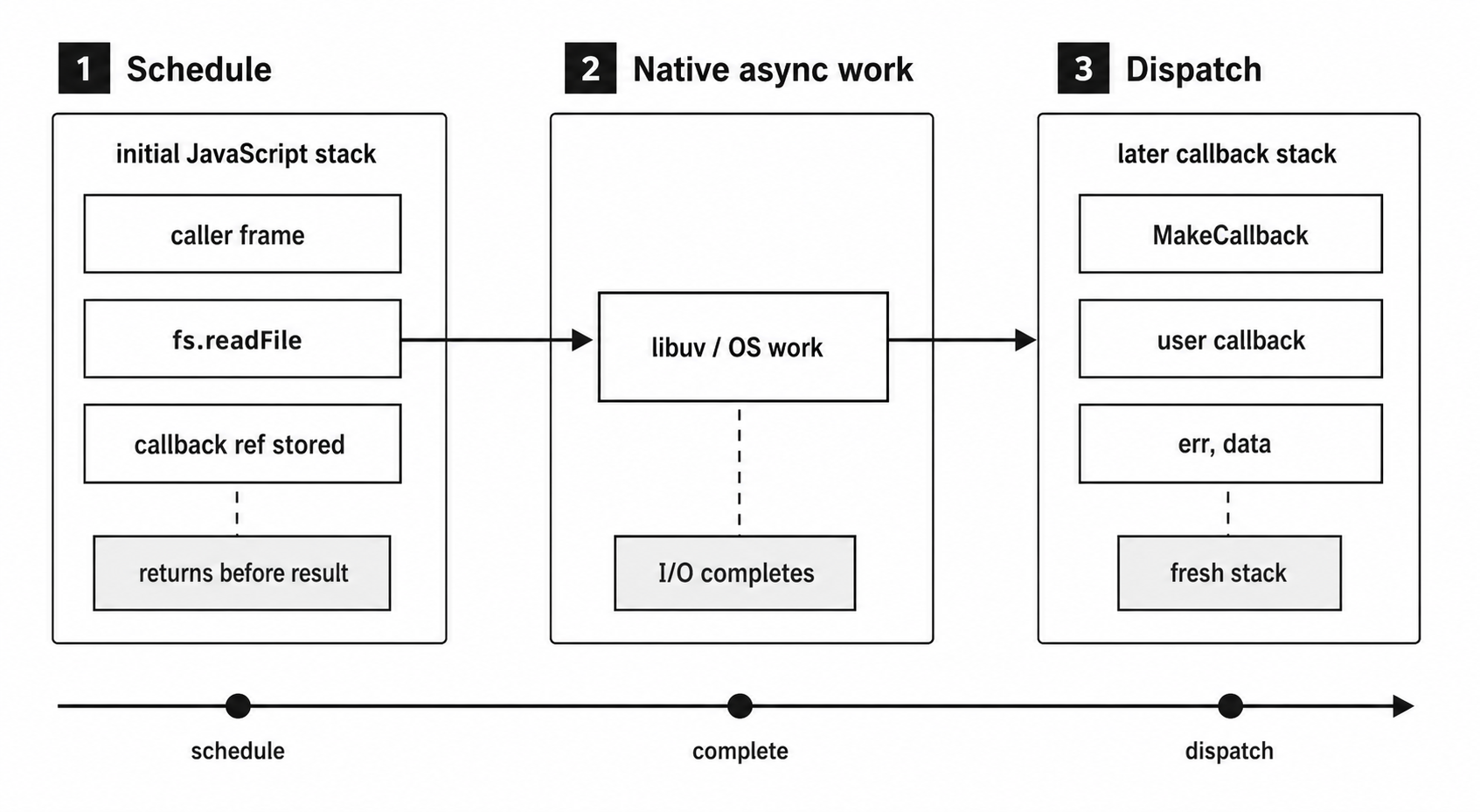

});This example assumes config.json exists. The anonymous arrow function is the third argument to fs.readFile. Node validates that argument, stores it in the read-file context, starts the native open/stat/read sequence, and returns before the I/O result is available. Your code keeps running. Later, maybe a millisecond later and maybe fifty, the event loop picks up the completed I/O result and calls your function with either an error or the file contents.

Figure 7.1 — A callback-based I/O request separates scheduling from completion. The setup stack returns while native work continues, and the user callback later runs on a new JavaScript stack.

The word "later" is doing real work there. The callback does not run synchronously inside readFile. It runs on a future turn of the event loop, after the call stack that set it up has already unwound.

Node's core APIs validate the callback argument synchronously. If you accidentally pass undefined instead of a function, readFile throws a TypeError immediately, before any I/O gets queued. That validation only checks the type. Node confirms the argument is a function and stores a reference to it. It does not inspect the function body, check for error handling, or verify that your callback does anything useful with the result. The function reference is opaque. What happens inside it is entirely your responsibility.
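A minimal sketch of that synchronous validation, assuming a current Node release. The exact error code has changed across versions, so the sketch checks only the error class:

```javascript
const fs = require("node:fs");

let validationError = null;

try {
  // The callback slot holds undefined, so validation fails on this stack,
  // before any filesystem request is created.
  fs.readFile("config.json", "utf8", undefined);
} catch (err) {
  validationError = err;
}

console.log(validationError instanceof TypeError);
```

The surrounding try/catch works here only because the failure happens during the call itself, not during the later I/O.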

Synchronous vs Asynchronous Callbacks

The term "callback" applies to both synchronous and asynchronous functions passed as arguments, so the word alone does not tell you when the function runs.

    const numbers = [3, 1, 4, 1, 5];

    numbers.forEach((n) => {
      console.log(n);
    });

    console.log("done");

The function passed to forEach is a callback, but it is synchronous. It runs immediately, inline, on the same call stack. Every invocation of your callback completes before forEach returns. By the time console.log("done") runs, all five numbers have already been logged. The callback and the calling code share the same execution context. If the callback throws, the exception propagates through forEach, through your calling code, and can be caught by a surrounding try/catch. It behaves like ordinary sequential execution with a function call in the middle.
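The exception behavior of a synchronous callback can be sketched like this. The failure condition is hypothetical and chosen only for the demonstration:

```javascript
const numbers = [3, 1, 4, 1, 5];
const seen = [];
let caught = null;

try {
  numbers.forEach((n) => {
    // Hypothetical failure condition, used only to trigger a throw.
    if (n === 4) throw new RangeError(`unexpected value ${n}`);
    seen.push(n);
  });
} catch (err) {
  // forEach is still on the stack when the callback throws, so this runs.
  caught = err;
}

console.log(seen); // [ 3, 1 ]
console.log(caught.message); // unexpected value 4
```

The throw also stops the iteration, so the remaining elements are never visited.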

fs.readFile uses the same callback shape for a different execution model. When you pass a callback to readFile, the function returns before the I/O result exists. The callback has not been called yet. It runs later, on a fresh JavaScript stack, after libuv signals that the I/O operation completed. The original call frame is gone by then, and the synchronous flow has already moved on.

That split shapes async programming in Node. Synchronous callbacks are regular function calls with extra indirection. Asynchronous callbacks are deferred execution: the function runs later, in a later turn, on a different call stack. Error handling changes at that split.

Most APIs make the difference clear. A callback passed to a Node core API that performs one-shot I/O is asynchronous: fs.readFile, http.get, dns.lookup, child_process.exec. A callback passed to an array method such as map, filter, forEach, or reduce is synchronous. EventEmitter listeners, covered later in this chapter, are synchronous too: when you call emitter.emit("data", chunk), all registered listeners for "data" run synchronously, in registration order, before emit returns. The setTimeout callback is asynchronous. The Array.sort comparator is synchronous.

User-space libraries are where the difference can become unreliable. A library function might call your callback synchronously for a cached result and asynchronously for a cache miss. That inconsistent timing is a source of subtle bugs, and the Node ecosystem has a name for it: "releasing Zalgo." The rule is strict. If a function ever calls its callback asynchronously, it should always call it asynchronously, even when the result is immediately available. Node code historically used process.nextTick() for that deferral, but it is Node-specific and runs ahead of promise jobs. For many userland APIs, queueMicrotask() is the smaller portable deferral primitive.
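One way to keep the timing consistent is sketched here, assuming a hypothetical cached lookup. The names getValue, loadFromSource, and cache are illustrative and do not come from any real library:

```javascript
const cache = new Map();

// Hypothetical slow source, simulated with a timer.
function loadFromSource(key, callback) {
  setTimeout(() => callback(null, `value-for-${key}`), 10);
}

function getValue(key, callback) {
  if (cache.has(key)) {
    const value = cache.get(key);
    // Cache hit: defer anyway so the callback never runs on the caller's stack.
    queueMicrotask(() => callback(null, value));
    return;
  }
  // Cache miss: the callback is already asynchronous through the loader.
  loadFromSource(key, (err, value) => {
    if (err) return callback(err);
    cache.set(key, value);
    callback(null, value);
  });
}

getValue("theme", () => console.log("callback ran"));
console.log("getValue returned");
```

With the deferral in place, "getValue returned" is logged before "callback ran" whether or not the key was cached.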

Continuation-Passing Style

There is a formal name for passing a callback that receives the result: continuation-passing style, or CPS. Instead of returning a value directly, a function passes the result to its continuation, the callback function that represents what should happen next.

The rest of the small filesystem examples assume fs is already in scope.

Direct style looks like this:

    const data = fs.readFileSync("config.json", "utf8");

    console.log(data.trim());

You call a function, it returns a value, and you use the value. The function returns both control and the result to the caller. The program counter moves to the next line. Everything is sequential, and everything is on the same stack.

CPS changes where the result goes. The function still returns control to its caller, but it does not return the result. It calls another function with the result instead:

    fs.readFile("config.json", "utf8", (err, data) => {
      if (err) return handleError(err);
      console.log(data.trim());
    });

The callback is the continuation. It is the rest of your program from that point onward, packaged as a function argument. Everything you want to do with the file contents goes inside that callback, because that is the only place where data exists.

That inversion takes a moment to absorb. fs.readFile does return to its caller, and in practice it returns undefined. But the useful result does not come back through the return value. It arrives through a different channel: the callback invocation. The return value is irrelevant. The continuation carries the program forward.

The effect cascades when the continuation needs to start more async work. Each step's result gets passed to another callback, and that callback's result goes to another callback. Each step's "rest of the program" nests inside the previous step:

    fs.readFile("config.json", "utf8", (err, raw) => {
      if (err) return done(err);
      let config;
      try { config = JSON.parse(raw); } catch (err) { return done(err); }
      fs.readFile(config.dataPath, "utf8", (err, data) => {
        if (err) return done(err);
        fs.writeFile("output.txt", data, done);
      });
    });

Three async operations produce two levels of nesting here, and only because the final write hands its completion straight to done; an inline callback for the write would add a third. Each callback is a continuation of the previous one. The control flow reads top-to-bottom, but also outside-to-inside. The JSON.parse guard is local because that exception happens inside the callback's own stack, not in the stack that called fs.readFile. CPS works. Readability starts degrading quickly.

The terminology is important because CPS is a concept from programming language theory going back to the 1970s. Scheme compilers use CPS as an intermediate representation. Haskell's continuation monad is the same idea in a different syntax. The principle is the same across all of these: instead of returning a value to the caller, pass it forward to the next computation. Early Node adopted this pattern because JavaScript had first-class functions and no standardized promise or async function support yet. First-class functions made CPS ergonomic enough to use at scale. The error-first convention made it predictable enough for an ecosystem.

There is still a timing difference inside CPS itself. A function that takes a callback and calls it synchronously, like Array.prototype.forEach, is using synchronous CPS. A function that takes a callback and calls it on a later turn, like fs.readFile, is using asynchronous CPS. Node's callback APIs use the async variant for I/O operations and the sync variant for iterative utilities. The async variant creates the difficult part, because the callback runs on a different call stack with a different error-handling context.
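The three styles can be compared side by side in a small sketch. The function names are hypothetical, and setImmediate stands in for real I/O:

```javascript
const trace = [];

// Direct style: the result comes back through the return value.
function square(n) {
  return n * n;
}

// Synchronous CPS: the continuation runs before squareCps returns.
function squareCps(n, k) {
  k(n * n);
}

// Asynchronous CPS: the continuation runs on a later turn, error-first.
function squareLater(n, k) {
  setImmediate(() => k(null, n * n));
}

trace.push(`direct ${square(3)}`);
squareCps(3, (value) => trace.push(`sync cps ${value}`));
squareLater(3, (err, value) => trace.push(`async cps ${value}`));
trace.push("end of script");
```

When the script body finishes, trace holds the direct and synchronous entries followed by "end of script". The asynchronous entry is appended only on a later turn.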

The try/catch Gap

The rule is simple: try/catch protects only the current call stack.

    const fs = require("node:fs");

    try {
      fs.readFile("/nonexistent", "utf8", (err, data) => {
        console.log(data.trim());
      });
    } catch (e) {
      console.log("caught:", e.message);
    }

If /nonexistent does not exist, this code does not hit the catch block. The try/catch wraps the call to fs.readFile, and that call succeeds. It queues the I/O request and returns. No exception is thrown. The try block completes normally, and the program continues past the catch.

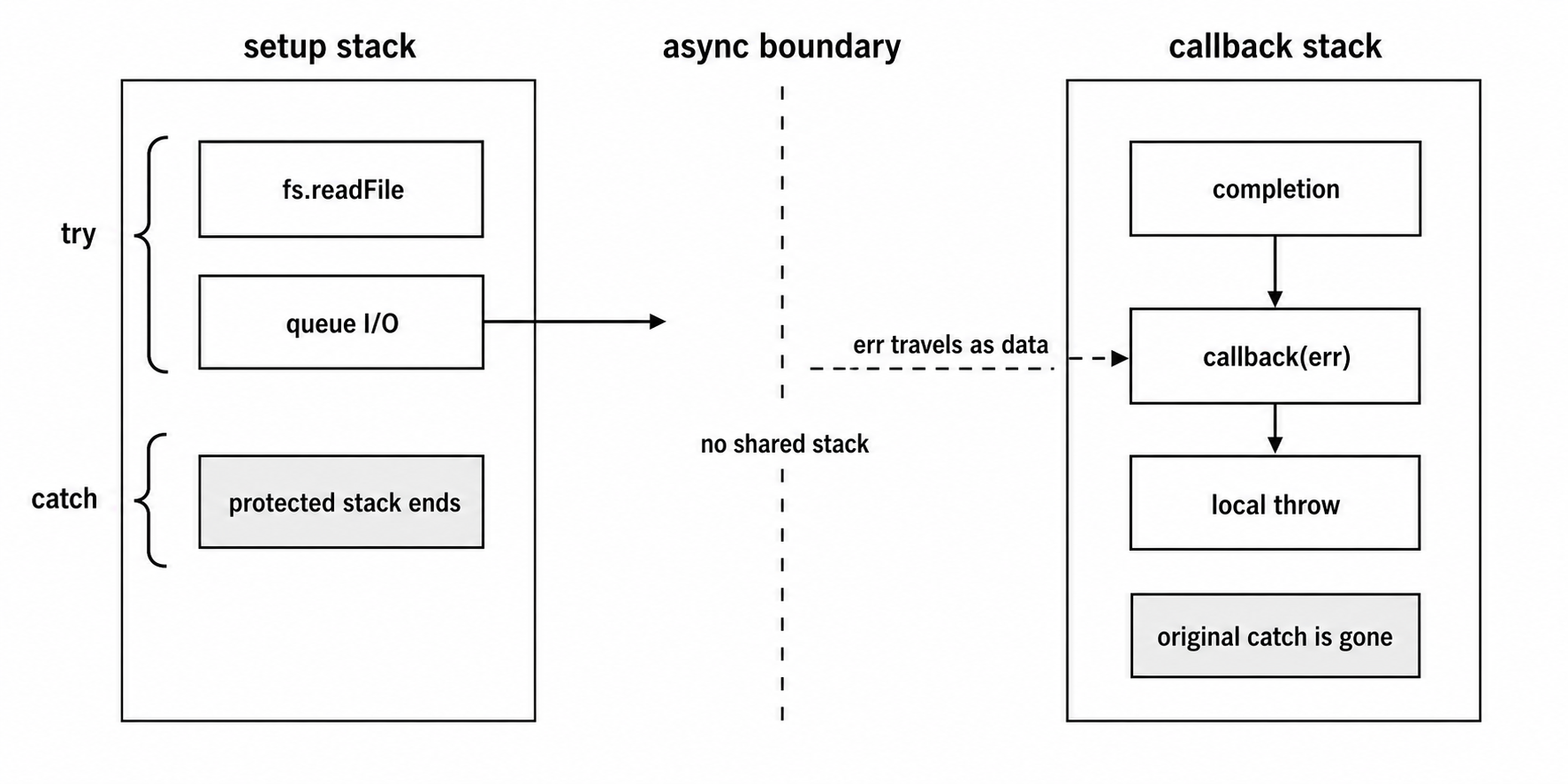

The error happens later. Libuv tries to open the file, gets an ENOENT from the kernel, and stores the error code in the request struct. A later completion dispatch reaches Node's filesystem binding. The JavaScript layer receives an Error object built from the ENOENT code and calls your callback with that error as err, leaving data undefined. By then the try/catch block is gone. The call stack has unwound, and the frame that contained the try block has already been popped. Your callback runs in a different call stack, one with no try/catch around it.

Inside that callback, err is a truthy Error object and data is undefined. Because the code ignores err, data.trim() throws a TypeError. That TypeError propagates up the callback's call stack and reaches Node's uncaught exception path. Without a process.on("uncaughtException") handler, Node prints the stack and exits.

That is the fundamental gap. Synchronous code can use try/catch because the error and the handler share the same call stack. Async callbacks run on a different call stack. The code that set them up has already returned.

When your callback executes, the stack includes native filesystem completion machinery, Node's callback dispatch, internal JavaScript read-file continuation functions, and then your callback. The original call to fs.readFile() has already returned. The try/catch you wrote existed only in the stack that ran fs.readFile(), and that stack was popped off the moment readFile returned. Two stacks, two execution contexts, no shared exception handler.

Figure 7.2 — A try/catch block protects only the stack that schedules work. An asynchronous failure crosses the gap as an err argument on the later callback stack.

The err argument exists because there is no other reliable way to communicate failure across that split. You cannot throw across it. You cannot use the original try/catch. The error has to travel as data, as a function argument, from the code that detected the failure in the C++ binding layer to the code that can handle it in your callback.

Many callback bugs look like errors disappearing. They do not disappear. They arrive as err arguments that nobody checks, or they turn into exceptions thrown inside callbacks with no local handler. Both patterns come from the same root cause: the async handoff creates a gap that synchronous error handling cannot bridge.
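A minimal sketch of the failure arriving as data. The temporary path is constructed so that it is assumed not to exist, and the events array is only a way to record the order of what happens:

```javascript
const fs = require("node:fs");
const os = require("node:os");
const path = require("node:path");

// A path assumed not to exist; the name is arbitrary.
const missingPath = path.join(
  os.tmpdir(),
  `missing-${process.pid}-${Date.now()}.txt`
);
const events = [];

try {
  fs.readFile(missingPath, "utf8", (err, data) => {
    if (err) {
      // The failure crosses the async gap as an argument, not as a throw.
      events.push(`callback got ${err.code}`);
      return;
    }
    events.push(`read ${data.length} characters`);
  });
  events.push("readFile returned");
} catch (e) {
  // Never reached for I/O failures: readFile itself did not throw.
  events.push("caught synchronously");
}
```

The catch branch stays unused. The ENOENT is visible only inside the callback, after the try block has already completed.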

Why Error-First

The error-first callback convention is the solution Node adopted for that gap. Single-completion callback APIs in Node's core usually take an error as their first argument:

    fs.readFile(path, encoding, (err, data) => { ... });

    fs.writeFile(path, content, (err) => { ... });

    fs.stat(path, (err, stats) => { ... });

If the operation succeeds, err is null and the remaining arguments contain the result. If it fails, err is usually an Error object and the result arguments are undefined or absent entirely. fs.writeFile's callback only gets err, because a successful write has no return value. A few APIs can pass more specific error shapes such as AggregateError when multiple failures are reported together.

Putting failure first makes the required branch hard to miss. The error is the first thing you see in the parameter list, and the position supports a clean early-return pattern:

    fs.readFile("data.json", "utf8", (err, raw) => {
      if (err) return handleError(err);
      let parsed;
      try { parsed = JSON.parse(raw); } catch (err) { return handleError(err); }
      processData(parsed);
    });

The callback first checks the I/O error and bails out if present. Only then does it handle local synchronous work. The return in both guards has two jobs: it hands the error to the handler and prevents execution from falling through to code that assumes valid data.

That guard appears in virtually every callback-based Node codebase. The if (err) return ... line at the top of a callback is the callback-era replacement for a local try/catch. Skip it, and the rest of the callback runs as if the operation succeeded. Eventually raw may be undefined, JSON.parse(undefined) may throw, and the stack trace will point at the parsing line rather than the I/O error that caused the bad input.

Userland did not begin with one clean style. Early modules used a mix of patterns: some put errors second, some emitted events, some threw synchronously for setup failures, and some used separate success and error callbacks. Node's documentation eventually named the dominant shape "Node style callbacks": a callback whose first argument is null on success or an error object on failure.

The convention is a contract between the API and the consumer. When you see (err, result) as a callback signature in Node's core, you know what to expect: err is either null or an Error, and result is valid only when err is null. Some community libraries broke this contract by accepting (result) only, using separate success and error callbacks, or passing error codes instead of Error objects. Those variations caused real friction. The single error-first callback became the standard because it was the simplest pattern that worked consistently.

There is one more practical detail: when err is truthy, its shape is important. Node core normally passes an instance of Error, a subclass like TypeError or RangeError, a system error with a code property such as ENOENT, or an aggregate error shape when the API can collect multiple failures. The error object carries a .message, a .stack trace, and often a .code string you can switch on. Community libraries are less consistent. Some pass plain strings, some pass objects with custom properties, and some pass error codes as numbers. If you build callback APIs, pass proper Error objects. Stack traces are useful. .code properties are useful. A string with an error message tells you what happened, but not where in the codebase it originated.
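A callback API that follows this advice might look like the sketch below. The function parsePort and the code ERR_INVALID_PORT are hypothetical, invented only for illustration:

```javascript
// Hypothetical callback API: validates a port string and reports error-first.
function parsePort(input, callback) {
  const port = Number(input);

  if (!Number.isInteger(port) || port < 1 || port > 65535) {
    // A real Error subclass carries a stack trace; .code gives callers
    // a stable string to branch on.
    const err = new RangeError(`invalid port: ${input}`);
    err.code = "ERR_INVALID_PORT"; // hypothetical code, not a Node core code
    return process.nextTick(callback, err);
  }

  // Success is deferred too, so the timing matches the failure path.
  process.nextTick(callback, null, port);
}

parsePort("8080", (err, port) => {
  if (err) return console.error(err.code, err.message);
  console.log(port);
});
```

Callers can switch on err.code without parsing the message text, and the stack trace points at the validation line that created the error.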

Filesystem Callback Dispatch

When you call fs.readFile(path, options, callback), the JavaScript function returns before the file contents exist. Behind that return, the operation has already been split into a small state machine.

The source names in this section are Node v24 implementation details, not public API. The JavaScript pieces are visible in Node's embedded fs and internal/fs/read/context sources. Native filesystem request and callback-scope names such as FSReqCallback, MakeCallback, and InternalCallbackScope sit underneath that JavaScript layer.

The public fs.readFile function validates the callback synchronously. It normalizes options. It constructs a ReadFileContext that stores the user callback, encoding, file descriptor state, accumulated buffers, current offset, abort signal, and any stored error. Then it creates a native-backed FSReqCallback, attaches that context to the request object, sets the request's oncomplete property to an internal JavaScript continuation called readFileAfterOpen, and calls the filesystem binding's open.

The success path is a chain of completions:

- fs.readFile validates arguments and starts open.
- readFileAfterOpen receives the file descriptor and starts fstat.
- The stat completion sizes the destination buffer or switches to chunk storage.
- Read completions keep filling buffers until EOF or the requested length.
- The close completion calls the original callback from the ReadFileContext.

An open failure takes a shorter path. readFileAfterOpen sees the error and calls the user callback with that error because there is no file descriptor to close. The final close-completion callback is the success-path endpoint, plus the path for failures that happen after a descriptor exists. Either way, fs.readFile is several callback completions, not one.

At the native layer, FSReqCallback is an AsyncWrap-backed request object for filesystem work. The request wraps a libuv uv_fs_t. For asynchronous filesystem calls, libuv receives the loop, request struct, operation arguments, and a C callback. The filesystem operation normally runs through libuv's thread pool. The default pool size is four threads, controlled by UV_THREADPOOL_SIZE.

The result is stored in uv_fs_t.result, and that field is operation-specific. For uv_fs_open, it holds a file descriptor on success. For uv_fs_read, it holds the number of bytes read. For errors, it holds a negative libuv error code. The file bytes themselves live in the buffer passed to uv_fs_read; result is not the file contents.

When the libuv operation completes, Node's C++ filesystem completion path reads uv_fs_t.result. A negative result becomes a JavaScript error. A successful result becomes the JavaScript value expected by the internal continuation. FSReqCallback::Resolve() calls MakeCallback with (null, value). FSReqCallback::Reject() calls MakeCallback with the error.

MakeCallback is the native-to-JavaScript entry point that gives these callbacks their Node behavior. At this level it does three jobs:

- Create an InternalCallbackScope for async context and async_hooks.
- Call the JavaScript function with V8's Function::Call.
- Close the callback scope after the JavaScript function returns.

Closing that scope reaches the scheduling machinery. Node emits the async-hooks after event, unwinds the async context, and then reaches a task-queue checkpoint. If no next tick is scheduled, Node can run V8's microtask checkpoint directly. If a next tick or pending rejection warning exists, Node calls the JavaScript tick callback. That path drains the process.nextTick() queue, then runs V8 microtasks, then repeats while next ticks or promise rejection work remain.

The observable rule remains useful: after a native callback enters JavaScript and returns, Node reaches a checkpoint before it delivers the next libuv callback. In a CommonJS script, process.nextTick() scheduled inside an fs.readFile callback runs before a promise handler scheduled in the same callback. In ESM top-level evaluation there are extra ordering details because module evaluation itself runs through the microtask machinery, but inside this filesystem callback the next-tick-before-promise rule still holds.
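The ordering can be observed with a short CommonJS sketch. It writes a small temporary file first so that the read is assumed to succeed; the file name is arbitrary:

```javascript
const fs = require("node:fs");
const os = require("node:os");
const path = require("node:path");

// Temporary file created only so the read has something to complete on.
const file = path.join(os.tmpdir(), `tick-order-${process.pid}.txt`);
fs.writeFileSync(file, "x");

const order = [];

fs.readFile(file, "utf8", (err) => {
  fs.unlinkSync(file);
  if (err) throw err;

  // Scheduled in "wrong" order on purpose: the promise reaction first.
  Promise.resolve().then(() => order.push("promise"));
  process.nextTick(() => order.push("nextTick"));
  setImmediate(() => order.push("setImmediate"));
  order.push("callback end");
});
```

Once everything has run, order reads "callback end", "nextTick", "promise", "setImmediate". The next-tick queue drains before promise jobs at the checkpoint, and the setImmediate callback waits for the check phase.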

The this binding is a trap. Internal read-file continuations use this.context, because MakeCallback calls them with the request wrapper as the receiver. Your user callback is different. The final JavaScript continuation retrieves context.callback and calls it as a plain function. In CommonJS, a non-strict regular function observes globalThis as this. In ESM or strict mode, it observes undefined. Arrow functions keep lexical this. Use the callback arguments. Do not depend on this in filesystem callbacks.

If your callback throws, the exception escapes from that JavaScript callback invocation and reaches Node's uncaught exception handling path. The stack comes from the completion dispatch path, not from the original fs.readFile() call that scheduled the work. That is the same async handoff problem from the previous section, viewed from inside the runtime.

Callback Patterns in Practice

The simplest async callback pattern is sequential: do one thing, then start the next thing from its callback.

    fs.readFile("input.txt", "utf8", (err, data) => {
      if (err) return console.error(err);
      const upper = data.toUpperCase();
      fs.writeFile("output.txt", upper, (err) => {
        if (err) return console.error(err);
        console.log("done");
      });
    });

This reads a file, transforms the content, and writes the result. The fs.writeFile call only starts after fs.readFile completes. Two or three operations are still readable. Beyond that, the nesting gets deep and the indentation pushes the useful logic farther to the right.

Parallel operations introduce a different kind of state. If you need to read three files and process them together, nesting them sequentially would serialize the I/O, making each read wait for the previous one to finish. Three reads that could run in 5ms each would take 15ms total. The callback version has to start all three reads first and then join their completions.

A naive counter version looks like this:

    const files = ["a.txt", "b.txt", "c.txt"];
    const results = new Array(files.length);
    let pending = files.length;

    files.forEach((file, i) => {
      fs.readFile(file, "utf8", (err, data) => {
        if (err) return console.error(err);
        results[i] = data;
        if (--pending === 0) processAll(results);
      });
    });

All three readFile calls fire immediately, without waiting for each other. Each callback decrements the pending counter and stores its result at the correct index. When the counter hits zero, all reads have completed, and processAll receives the results in the original order because i preserves the input position regardless of completion order.

Figure 7.3 — Parallel callback code starts independent work first, then joins completions through shared state. Success decrements the counter; the first failure takes a separate error path.

That code is a correct success-path parallel join for a non-empty file list. It is incomplete as production code. If b.txt does not exist, its callback receives an error, logs it, and returns. The counter never decrements for the errored entry. pending started at 3 and only reaches 1, so processAll never fires. If you move the decrement before the error check, processAll can fire with results[1] as undefined. Neither outcome is correct.

Error handling in parallel callbacks therefore needs more coordination. Inside each read callback, using the same results and pending variables, a first-error guard looks like this:

    let errored = false;

    files.forEach((file, i) => {
      fs.readFile(file, "utf8", (err, data) => {
        if (errored) return;
        if (err) {
          errored = true;
          return handleError(err);
        }
        results[i] = data;
        if (--pending === 0) processAll(results);
      });
    });

First error wins. Once any read fails, later callback completions are ignored by the guard. The reads already in flight still complete at the operating-system and libuv level; this guard is first-failure notification, not cancellation. That mirrors one visible part of Promise.all: reject on the first failure. You can also implement "collect all errors" semantics, similar to Promise.allSettled, but the boilerplate gets heavy. You need a separate errors array, a combined counter for successes and failures, and a final callback that receives both arrays. At that point, you are mostly reimplementing promise combinators with manual state tracking.
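Packaged as a reusable helper, the first-error join might look like the following sketch. The name readAll and the empty-list handling are assumptions added for illustration; the chapter's snippets use free-standing results and pending variables instead:

```javascript
const fs = require("node:fs");

// Hypothetical helper: read every file in parallel, report error-first once.
function readAll(files, callback) {
  const results = new Array(files.length);
  let pending = files.length;
  let finished = false;

  // Empty input: complete on a later tick so the timing stays asynchronous.
  if (pending === 0) return process.nextTick(callback, null, results);

  files.forEach((file, i) => {
    fs.readFile(file, "utf8", (err, data) => {
      if (finished) return; // a failure was already reported
      if (err) {
        finished = true;
        return callback(err);
      }
      results[i] = data; // index keeps input order, not completion order
      if (--pending === 0) {
        finished = true;
        callback(null, results);
      }
    });
  });
}
```

A call such as readAll(["a.txt", "b.txt", "c.txt"], onDone) then invokes onDone exactly once, either with the first error or with all three contents in input order.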

The same manual state shows up in waterfall code. A waterfall is a series of async operations where each result feeds into the next, while accumulated state also moves through the chain. Without an abstraction, that means passing extra values through closure scope. If step 3 needs the result of step 1, not just step 2, you either hoist a variable into an outer scope or pass it as a parameter through intermediate callbacks that do not use it.

Retry code adds one more layer. If an operation fails and you want to retry it with exponential backoff, you wrap the callback in a recursive function that calls setTimeout on failure and reissues the original async call. The retry count, backoff delay, and maximum retry count all live in closure variables around a recursive callback. It works, but reading the code requires mentally following recursion across async handoffs.
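The retry shape described above can be sketched as follows. The helper name retry and its options are hypothetical, and the sketch assumes the wrapped operation always calls back asynchronously and exactly once:

```javascript
// Hypothetical helper: retry an error-first operation with exponential backoff.
// `operation` is assumed to take a single callback and call it asynchronously.
function retry(operation, options, callback) {
  const { retries, delayMs } = options;
  let attempt = 0; // number of retries already scheduled

  function run() {
    operation((err, value) => {
      if (!err) return callback(null, value);
      if (attempt >= retries) return callback(err); // give up, report last error

      // Backoff doubles each time: delayMs, 2 * delayMs, 4 * delayMs, ...
      const wait = delayMs * 2 ** attempt;
      attempt += 1;
      setTimeout(run, wait);
    });
  }

  run();
}
```

All of the retry state lives in the closure around run, which is the manual bookkeeping the paragraph above describes.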

The async package, originally known through the async.js project by Caolan McMahon, appeared early in Node's history because these patterns were common and error-prone. It provided async.parallel, async.series, async.waterfall, async.retry, async.queue, and dozens of other flow-control utilities. In the callback era it became one of npm's heavily depended-on packages. It existed because raw callbacks made flow control a manual exercise in state tracking, and developers kept reimplementing the same patterns with the same bugs.

Callback Hell and Inversion of Control

The nesting problem has a name: callback hell. Leaving error guards out for a moment makes the shape visible; adding them makes the code longer.

    getUser(userId, (err, user) => {
      getOrders(user.id, (err, orders) => {
        getOrderDetails(orders[0].id, (err, details) => {
          getShippingStatus(details.trackingId, (err, status) => {
            updateUI(user, orders, details, status);
          });
        });
      });
    });

Four nested callbacks push each continuation one scope deeper. Reading the code means tracking which scope you are in and which variables are available from outer closures. If you add normal error guards, every level declares its own err, each one shadowing the previous one. The indentation is the visible symptom.

It is only the surface problem, though. Named functions flatten the indentation. Modular decomposition reduces the visual noise. You can rewrite nested callbacks as a sequence of named functions and make the page look orderly. The deeper issue, the one named functions do not fix, is inversion of control.

When you pass a callback to a function, you hand control of your program's continuation to someone else's code. You are saying: here is what should happen next; call it at the right time, exactly once, with the right arguments. The receiving function now owns the continuation. Control has moved from the caller to the callee.

That raises several trust problems.

Missing callback invocation. Some libraries had code paths where the callback was never called, leaving your program waiting indefinitely. A database driver might fail to call back on timeout. A middleware function might forget to call next() on a certain error path. There may be no timeout mechanism, no error, and no stack trace, because nothing threw. The program appears to freeze while pending request state accumulates.

Multiple callback invocation. A bug in the callee can call your callback twice. The bug is especially dangerous when your callback has side effects such as writing to a database or sending an HTTP response. Double-calling a callback often appears as a "Cannot set headers after they are sent to the client" error (ERR_HTTP_HEADERS_SENT) in Express, duplicate database records, or repeated external side effects. The second invocation can be quiet unless you have added a once-guard.

Mixed sync/async timing. If a library sometimes calls the callback synchronously on the current stack and sometimes asynchronously on a later turn, your code's behavior becomes order-dependent. Event listeners attached after a synchronous callback invocation will not see that earlier call. State that you expected to set before the callback runs may not be ready. This is the same "releasing Zalgo" problem from earlier. If a function ever calls its callback asynchronously, it should always call it asynchronously, even for cached results or trivial operations. process.nextTick() exists partly to defer a synchronous result into Node's next tick queue and preserve consistent behavior.

Wrong callback arguments. The error-first convention helps, but nothing enforces it at the language level. A library can pass a string instead of an Error object, put the error in the wrong position, or pass extra arguments you do not expect. There is no runtime interface check. The contract is conventional.
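For the missing-invocation case, a caller can defend itself with a deadline. The wrapper below is a sketch, not an established utility: withTimeout, brokenLookup, and the ERR_CALLBACK_TIMEOUT code are all hypothetical names.

```javascript
// Hypothetical guard: if the callee never calls back, report a timeout error.
function withTimeout(callback, ms) {
  let settled = false;

  const timer = setTimeout(() => {
    if (settled) return;
    settled = true;
    const err = new Error(`callback not invoked within ${ms}ms`);
    err.code = "ERR_CALLBACK_TIMEOUT"; // hypothetical code
    callback(err);
  }, ms);

  return function guarded(err, value) {
    if (settled) return; // late or duplicate call: ignore
    settled = true;
    clearTimeout(timer);
    callback(err, value);
  };
}

// Hypothetical callee with a bug: it never invokes its callback.
function brokenLookup(key, callback) {}

brokenLookup("user:1", withTimeout((err, value) => {
  if (err) return console.error(err.message);
  console.log(value);
}, 50));
```

The guard turns a silent hang into an ordinary err argument. It does not stop whatever work the callee started, so it is notification rather than cancellation.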

Named functions help with readability, but they do not solve these trust issues:

    function onUser(err, user) {
      if (err) return handleError(err);
      getOrders(user.id, onOrders);
    }

    function onOrders(err, orders) {
      if (err) return handleError(err);
      getOrderDetails(orders[0].id, onDetails);
    }

    // onDetails and the later steps follow the same shape.

    getUser(userId, onUser);

The indentation is gone. Each step is named. The flow reads sequentially at the module level. Even so, you still hand onOrders to getOrders and trust that function to call it correctly. You still cannot guarantee single invocation. You still cannot enforce async timing. Readability has improved, but safety has not.

Some developers wrapped callbacks in once guards to prevent double-calling:

    function once(fn) {
      let called = false;
      return function (...args) {
        if (called) return;
        called = true;
        fn.apply(this, args);
      };
    }

Passing once(callback) instead of callback means an accidental second call is silently ignored. The wrapper is defensive; it does not fix the callee. In development code, logging or throwing on the second call may be better because it exposes the bug. In side-effecting production paths, ignoring the duplicate can be the safer failure mode.
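A development-oriented variant that throws on the duplicate call could look like this sketch. The name onceStrict and the label parameter are hypothetical:

```javascript
// Hypothetical strict guard: the second invocation throws instead of vanishing.
function onceStrict(fn, label = fn.name || "callback") {
  let called = false;

  return function (...args) {
    if (called) {
      // Thrown on the callee's stack, so the trace points at the duplicate call.
      throw new Error(`${label} was called more than once`);
    }
    called = true;
    return fn.apply(this, args);
  };
}

const results = [];
const record = onceStrict(function record(err, value) {
  results.push(value);
});

record(null, "first"); // runs normally

try {
  record(null, "second"); // duplicate: throws
} catch (e) {
  console.log(e.message); // record was called more than once
}
```

Because the exception surfaces inside the buggy callee, the stack trace identifies the code path that made the second call.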

Middleware stacks, including Express applications, commonly exposed this failure mode when next() was called twice or omitted on an error path. Teams used lint rules, conventions, and wrapper utilities to reduce those mistakes. The architecture of callbacks still leaves the trust issue in place: a callback API gives the callee responsibility for timing, argument shape, and single invocation.

Promises were the structural answer. By returning an object that represents the future result, control stays with the caller. The caller decides when to .then(), can attach multiple handlers, gets asynchronous reaction handlers, and gets exactly-once settlement. A promise can only fulfill or reject once; additional calls to resolve() or reject() leave the settled state unchanged. That belongs to the next subchapter.

Callbacks Today

Despite promises and async/await dominating current Node.js code, callbacks have not gone away. They remain part of Node's public compatibility surface and its native completion machinery.

libuv is callback-based at the C API. Asynchronous filesystem operations receive a uv_fs_cb. Thread-pool work receives an after_work_cb on the loop thread after the worker finishes. Node's C++ binding layer uses those native callbacks to resume JavaScript work, either by calling a JavaScript callback through FSReqCallback or by resolving/rejecting a promise through a promise request path.

The public API shape determines which JavaScript object Node keeps. fs.readFile(path, callback) stores your user callback in a ReadFileContext and eventually calls it from the final read-file continuation. fs.promises.readFile(path) uses promise-oriented binding calls and FileHandle promise machinery. It still crosses libuv and native completion points for filesystem work, but it does not store your JavaScript callback because you did not pass one.

util.callbackify exists for converting a promise-returning function back into a callback-accepting one. It is the reverse of util.promisify. The direction sounds backwards, but the compatibility case is common. It is used where callback-based APIs need to call newer promise-based functions, and in libraries that need to maintain backward compatibility with callback consumers while internally using async/await.
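Both directions can be shown in a short sketch. The function loadGreeting is a hypothetical stand-in for newer promise-based code:

```javascript
const util = require("node:util");

// Hypothetical promise-returning function standing in for newer internal code.
async function loadGreeting(name) {
  if (!name) throw new TypeError("name is required");
  return `hello ${name}`;
}

// Callback-accepting wrapper for consumers that expect (err, value).
const loadGreetingCb = util.callbackify(loadGreeting);

loadGreetingCb("Ada", (err, greeting) => {
  if (err) return console.error(err.message);
  console.log(greeting);
});

// The reverse direction: wrap an error-first function as promise-returning.
const loadGreetingAgain = util.promisify(loadGreetingCb);
```

A rejection from the async function arrives as the err argument of the callback, and the callback runs asynchronously even when the promise settles immediately.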

Performance can be another reason callbacks persist on some hot paths. A callback API still allocates real objects: closures, native request wrappers, buffers, and whatever state the operation needs. In a specific workload, a callback path may allocate fewer promise-related objects and schedule less promise reaction work than a promise-heavy path. Treat that as a profiling hypothesis, not a rule. Some performance-sensitive libraries still offer callback APIs alongside promise APIs for this reason.

Those differences depend on the exact path, Node version, V8 version, and workload. V8 and Node have optimized promises heavily since the early promise APIs. For most application code, the readability and error-channel consistency of promises and async/await outweigh the allocation cost. Profile before optimizing.

EventEmitter listeners are callbacks too. When you write server.on("request", handler), that handler is a callback registered for the "request" event. The EventEmitter pattern, covered later in this chapter, is a multi-callback pattern: multiple listeners for the same event, called synchronously in registration order. Streams emit events with callbacks. The "data" handler on a Readable stream is a callback. The "error" handler is a callback.
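The synchronous, in-order dispatch is easy to observe with a small sketch. The event name and payload are arbitrary:

```javascript
const { EventEmitter } = require("node:events");

const emitter = new EventEmitter();
const trace = [];

// Two listeners on the same event, registered in this order.
emitter.on("data", (chunk) => trace.push(`first:${chunk}`));
emitter.on("data", (chunk) => trace.push(`second:${chunk}`));

trace.push("before emit");
emitter.emit("data", "x"); // both listeners run before emit returns
trace.push("after emit");

console.log(trace);
// [ 'before emit', 'first:x', 'second:x', 'after emit' ]
```

Both listener entries sit between the "before emit" and "after emit" markers, which shows that emit does not defer its listeners to a later turn.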

Even setTimeout and setInterval are callback-based APIs. You pass a function, and it gets called later. The timer phase of the event loop fires timer callbacks whose timers have expired.

The Promise constructor itself takes a function argument. The (resolve, reject) => { ... } function you pass to new Promise() is the executor. It runs synchronously during construction, so it is a different shape from a Node-style async callback. The abstraction that replaced many callbacks still starts with a function you hand to someone else's code.
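A short sketch makes the executor's timing visible next to the reaction handler's timing:

```javascript
const steps = [];

const promise = new Promise((resolve) => {
  // The executor runs during construction, on the current stack.
  steps.push("executor");
  resolve("value");
});

steps.push("after constructor");

// The reaction handler is always asynchronous, even for a settled promise.
promise.then((value) => steps.push(`then ${value}`));

steps.push("end of script");
```

When the script body finishes, steps holds "executor", "after constructor", and "end of script". The "then value" entry is added afterward by a promise job.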

Callbacks are one of Node's lowest-level completion shapes. Higher-level patterns often wrap them, sit beside them, or meet them at integration points. The machinery described earlier in this subchapter (FSReqCallback, ReadFileContext, MakeCallback, and task-queue checkpoints) runs underneath callback-based filesystem APIs. Promise-native code uses a related path that resolves promises instead of calling user callbacks. Callback APIs, promise APIs, libuv completions, and task queues still meet at the same runtime split.