Async/Await: Suspension & Microtasks

Async functions in Node.js always return promises, but that surface rule is only the start of the mechanism. When you call an async function, V8 creates an outer promise and runs the body synchronously until it reaches an await, return, or unhandled throw. Each await turns the remaining work into a later continuation. V8 owns the async-function state that makes suspension and resumption possible. Node decides where the resulting promise jobs run relative to process.nextTick(), timers, I/O callbacks, and the rest of the event loop.

The snippets below use plain JavaScript unless a block says otherwise. Short fragments with top-level await assume an ES module or an enclosing async function. Names such as db, fetchUser, and transform stand for application-provided functions. Where CommonJS and ES modules schedule differently, the text names the context.

How async/await Works

The syntax makes asynchronous code read like direct control flow, but the observable shape is still a promise chain. Code after await runs in a later step of the same async function, and thrown errors become promise rejections unless local code catches them. Once an await is reached, the surrounding JavaScript stack keeps running, so execution order changes even when the awaited promise is already fulfilled.

Adding async changes a function's contract immediately. Call it and you get a promise every time. The body can return 42, throw an error, perform several asynchronous operations, or fall off the end; the caller still observes the outer promise created at function entry.

That contract is where many small bugs start. An async callback passed to Array.map() gives you an array of promises. An async comparator passed to Array.sort() gives sort() a promise where it expects a number. The failure sits at the boundary between a synchronous API and a function that always returns a promise.
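The map() case can be sketched in a few lines. The callback name double is hypothetical and stands in for any async callback.

```javascript
// Hypothetical async callback: it always returns a promise.
const double = async (n) => n * 2;

// map() does not await; it collects whatever the callback returns.
const pending = [1, 2, 3].map(double);
console.log(pending.every((p) => p instanceof Promise)); // true

// Promise.all() turns the array of promises into one promise of values.
const settled = Promise.all(pending);
settled.then((values) => console.log(values)); // [ 2, 4, 6 ]
```

The synchronous API did its job correctly; it simply received promises where the caller expected numbers.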

async function getNumber() {

return 42;

}

const result = getNumber();

console.log(result instanceof Promise);

The log prints true. The returned 42 becomes the fulfillment value of the promise returned from getNumber(). A missing return fulfills with undefined, while a thrown error rejects the same outer promise.

async function boom() {

throw new Error("broken");

}

boom().catch(e => console.log(e.message));

The throw happens while boom() is being called, before the caller has attached the catch. The async wrapper catches the exception and rejects the outer promise, so the caller receives the failure through normal promise handling.

Returning a promise gives the outer promise another state to adopt. Returning a thenable goes through thenable assimilation, covered in the previous subchapter. Modern V8, including the V8 shipped with Node v24, optimizes the native-promise path, but the observable rule does not change: callers observe the async function's outer promise. If the body returns another promise, the outer promise adopts that promise's fulfillment or rejection; it is not the same object.
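A small sketch of the adoption rule, using hypothetical names inner and forward: the caller gets a different promise object that settles with the same value.

```javascript
const inner = Promise.resolve("value");

async function forward() {
  return inner; // the outer promise adopts inner's state
}

const outer = forward();
console.log(outer === inner); // false: the caller holds a different promise object
outer.then((v) => console.log(v)); // value
```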

One return detail is worth keeping explicit because it affects error paths. return somePromise forwards the promise through the async function's resolution path. return await somePromise observes somePromise at an await point and then resolves the outer promise with the fulfilled value. Modern V8 has a fast path for native promises at await sites, so the decision should be about behavior: use return await inside try/catch and finally, and when stack quality is important. Direct return is fine for plain forwarding.

await Suspends One Function

await pauses the current async function. It does not pause the process, the event loop, or other JavaScript that is ready to run.

async function example() {

console.log("A");

const val = await Promise.resolve("B");

console.log(val);

console.log("C");

}

example();

console.log("D");

Output: A, D, B, C.

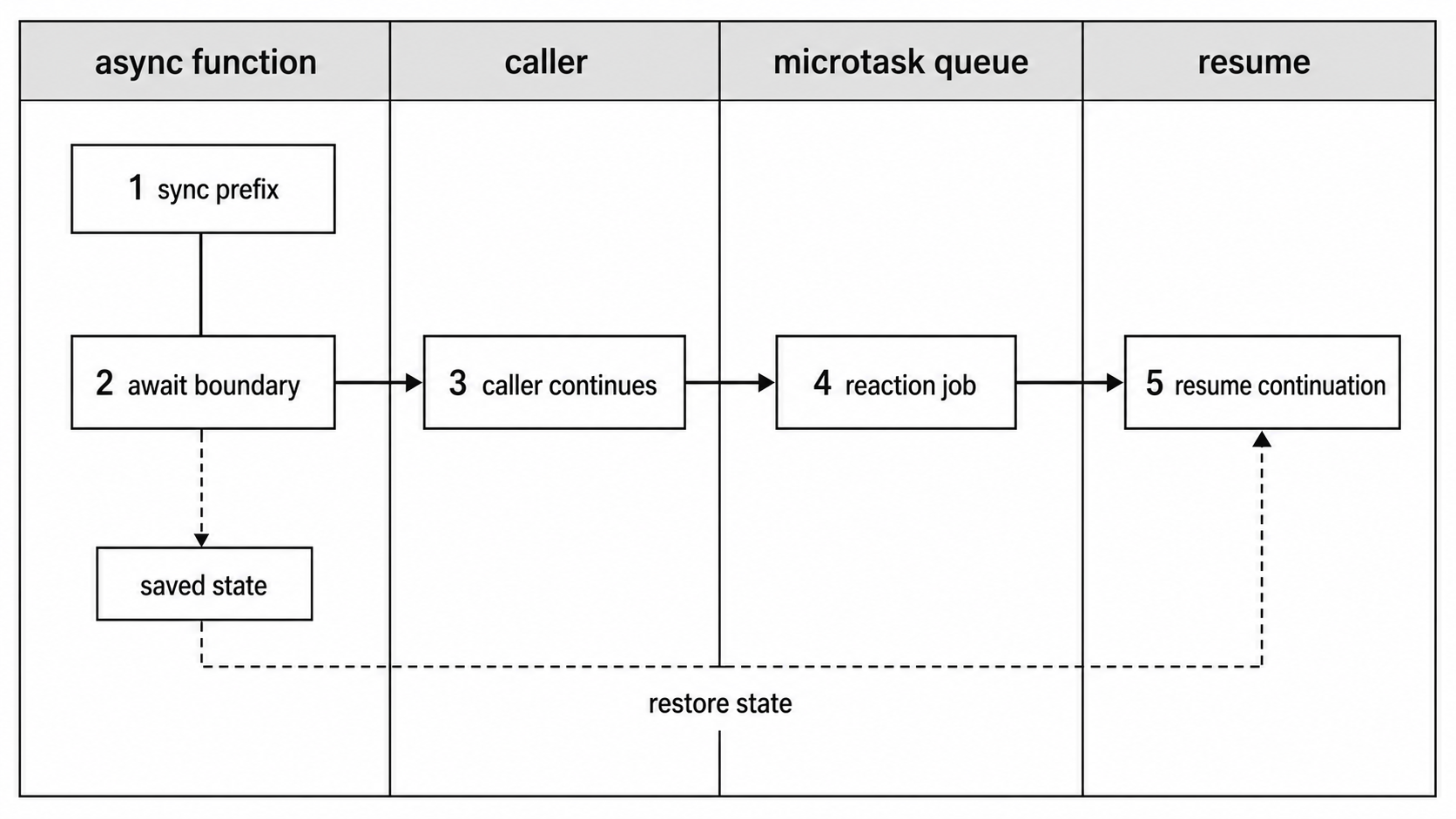

The first log runs on the current call stack. When V8 reaches await, it records the function state, attaches a reaction to the awaited promise, and returns control to the caller. The caller prints D. Later, during a microtask checkpoint, V8 resumes the async function with the fulfilled value "B" and runs the remaining logs.

Figure 1 — An await point saves the current function state, returns control to the caller, and resumes the remaining body later as promise microtask work.

Everything before the first await is synchronous. The caller does not regain control until the function reaches its first await, throws, or returns. If the body contains no await expressions at all, the whole body runs before control returns to the caller, although the result is still delivered through a promise.

async function noAwait() {

console.log("sync");

return "done";

}

noAwait();

console.log("after");

Output: sync, after. A .then() attached to the returned promise still runs later as a microtask, because promise handlers keep the scheduling rule from the previous subchapter.

Plain values follow the same await path.

async function waitValue() {

const n = await 42;

console.log(n);

}

waitValue();

V8 runs promise resolution for the value, suspends the function, and later resumes it in a microtask with 42. Generic code relies on that property when an input may be either a value or a promise. Hand-written await 42 usually adds nothing except an extra microtask turn.

The same handoff appears when the promise is already fulfilled. V8 has reduced the number of microtask turns on the common native-promise path, but it still preserves suspension: code after the await runs after the current synchronous stack empties.

Sequential awaits therefore create sequential work.

async function three() {

const a = await step1();

const b = await step2(a);

return step3(b);

}

step2 starts only after step1 fulfills. step3 starts only after step2 fulfills. The total latency is the sum of those waits plus scheduler overhead, so that shape is right when each step depends on the previous result and wasteful when the operations are independent.

The Promise-Chain Shape

Async/await reads linearly, but each await still creates a continuation attached to a promise reaction.

async function fetchData(url) {

const response = await fetch(url);

const json = await response.json();

return json;

}

Behaviorally, that has the same continuation shape as this:

function fetchData(url) {

return fetch(url)

.then(response => response.json())

.then(json => json);

}

The first await waits for fetch(url) to settle before running the response step. The second waits for response.json() before returning the parsed value. The final return resolves the promise that the async function gave to its caller.

The error path maps onto the same structure.

async function fetchSafe(url) {

try {

const resp = await fetch(url);

return await resp.json();

} catch (e) {

console.error("failed:", e.message);

return null;

}

}

Rejection from either awaited operation is thrown at the corresponding await site, so the catch block receives it through normal exception flow. You write direct-style control flow while promise scheduling still provides the machinery underneath.

The gain is lexical scope. A .then() chain splits the work into separate functions. Sharing local state across those steps means returning compound objects, closing over variables, or adding more functions. An async function keeps one lexical scope across suspension points, so response can remain available after later awaits because V8 stores the suspended execution context on the heap and restores it later.

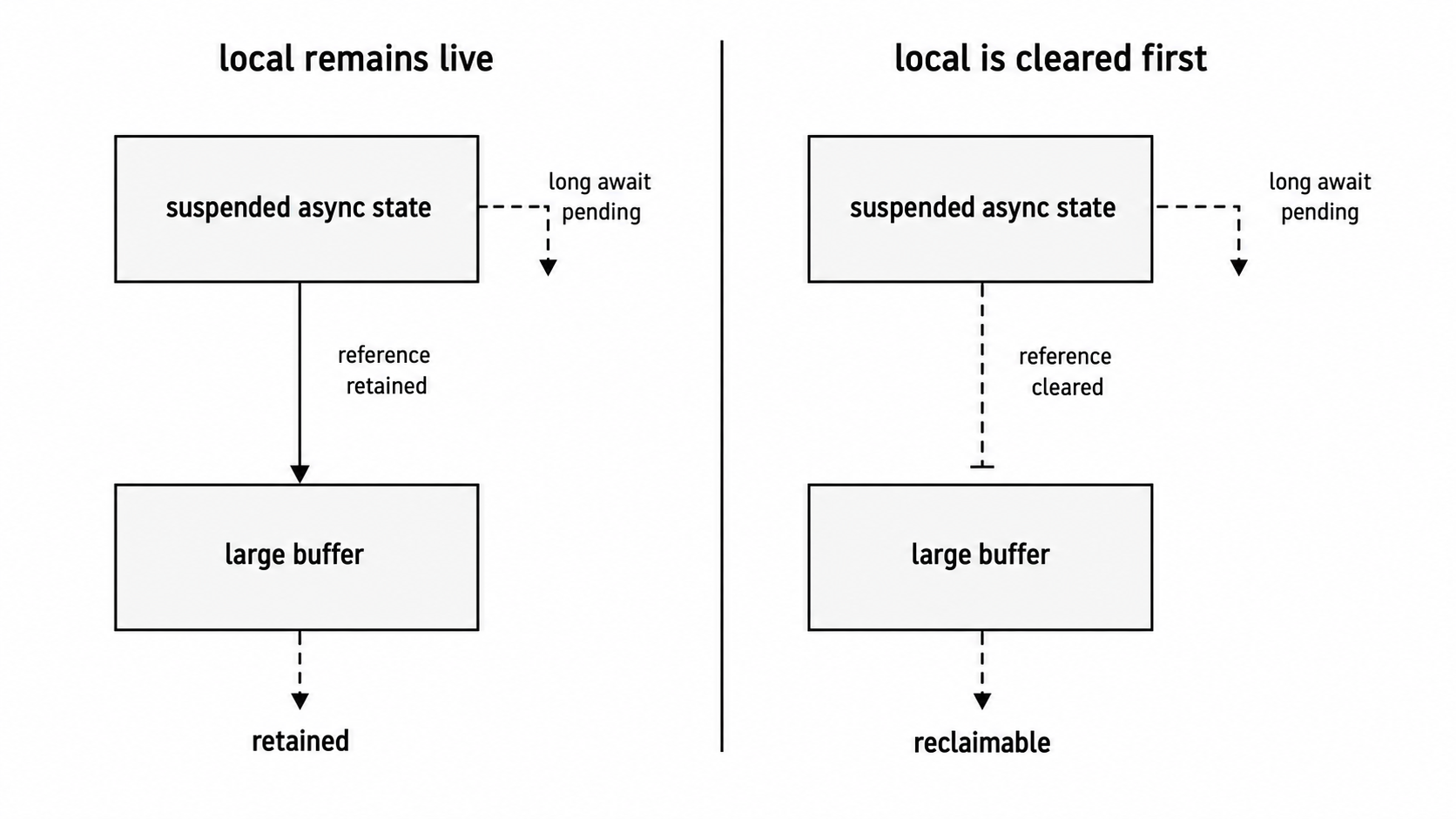

That convenience can retain memory longer than expected. Hold a 50 MB buffer in a local variable, hit await db.save(), and the buffer stays reachable while the database promise is pending. The async function object retains its scope; V8 can collect objects only after live variables stop referencing them.

The generator connection is mostly historical, but it explains the shape of older code. Before async/await landed in ES2017, many Node projects used generator functions with runner libraries. The runner called .next() after each yielded promise fulfilled and .throw() after rejection. Async functions turned that pattern into syntax with promise integration built into the engine. V8 still shares some suspension machinery with generators, including suspend and resume bytecode concepts, while promise resolution and outer-promise handling are specific to async functions.
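The runner pattern described above can be sketched as follows. The helper name run is hypothetical and does not reproduce the API of any particular library; it only shows the .next() and .throw() handoff.

```javascript
// Minimal sketch of a generator runner in the style of pre-ES2017 libraries.
// `run` is a hypothetical helper, not an API from any specific library.
function run(generatorFn, ...args) {
  return new Promise((resolve, reject) => {
    const gen = generatorFn(...args);

    function step(method, input) {
      let result;
      try {
        result = gen[method](input); // resume the generator with a value or a throw
      } catch (err) {
        reject(err); // uncaught error inside the generator body
        return;
      }
      if (result.done) {
        resolve(result.value);
        return;
      }
      // Treat every yielded value as a promise, as await does.
      Promise.resolve(result.value).then(
        (value) => step("next", value),
        (err) => step("throw", err)
      );
    }

    step("next", undefined);
  });
}

const total = run(function* () {
  const a = yield Promise.resolve(1);
  let b;
  try {
    yield Promise.reject(new Error("nope"));
  } catch (e) {
    b = 2; // the rejection arrived through gen.throw()
  }
  return a + b;
});

total.then((v) => console.log(v)); // 3
```

Each yield plays the role of an await, and the promise returned by run plays the role of the async function's outer promise.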

V8's State Machine

Every await is a suspension point, and the machinery behind that point explains both the ordering and the cost model.

Keep the split clear: the promise-returning contract is JavaScript semantics. Object names and layout details in this section describe V8's current implementation and can shift between engine releases.

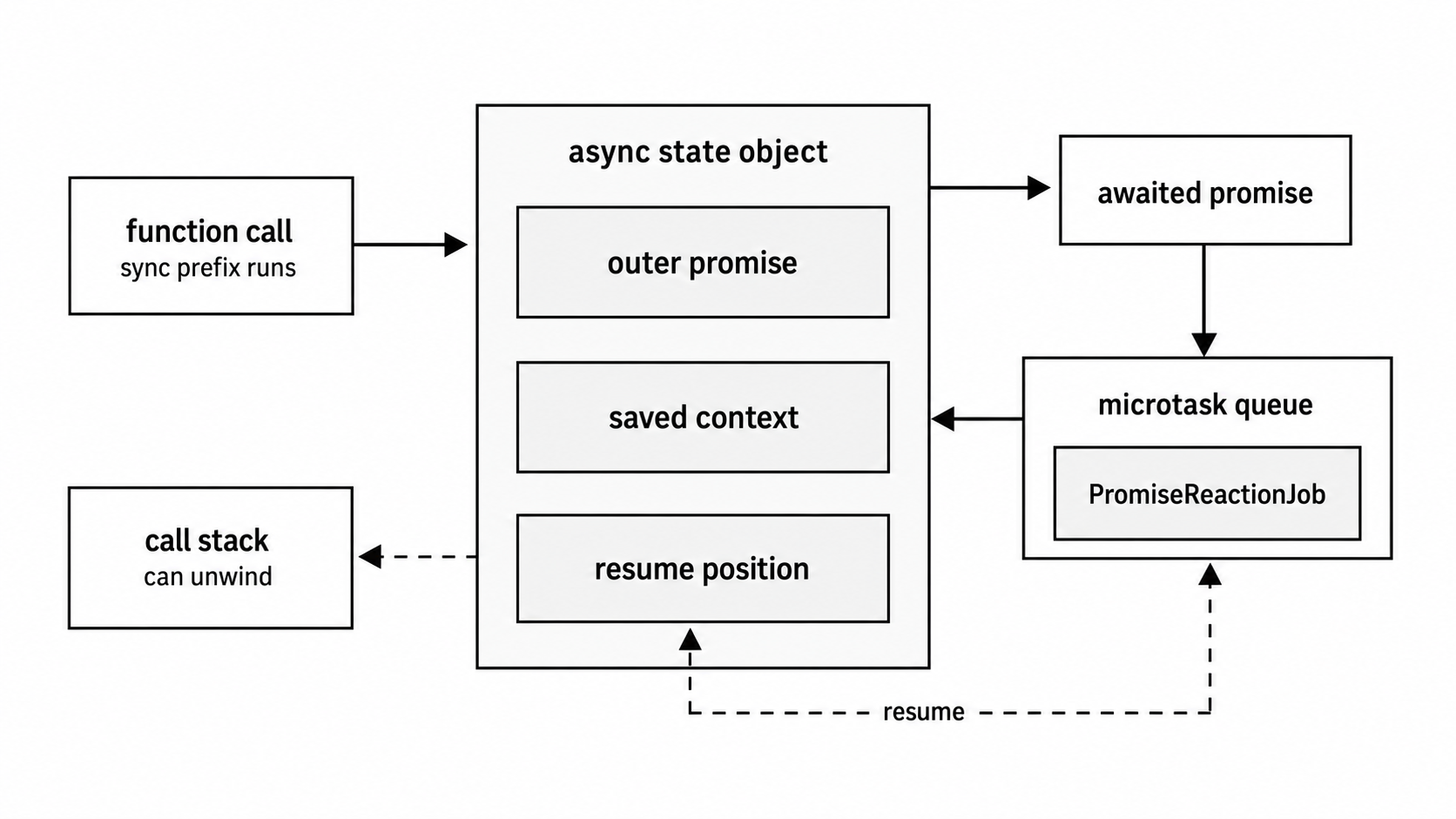

When V8 compiles an async function, it emits bytecode that can suspend and resume. On call, V8 creates the promise returned to the caller and an internal async function object that tracks execution state. Current V8 source names that object JSAsyncFunctionObject, extending the generator object shape with a promise field. It links the outer promise, the current continuation state, and the function context needed to resume after an awaited promise settles.

The outer promise is allocated at function entry, before the body has finished its synchronous prefix. If the function reaches return, V8 resolves that promise. If the function throws and local code leaves the error unhandled, V8 rejects it. If the function has five awaits, the same outer promise remains pending across all five suspensions.

The first part of the function runs like ordinary JavaScript. Local variables live in the active stack frame while execution is active. At an await, V8 evaluates the expression, runs promise resolution for the value, and attaches fulfillment and rejection reactions to the resulting promise. It then saves the current execution context: local variables, operand state, and bytecode position. The active stack frame can unwind, and the saved state lives on the heap until the continuation runs.

Figure 2 — V8 keeps the outer promise, saved execution context, awaited promise, and reaction job connected until the async function can resume.

When the awaited promise settles, V8 enqueues a PromiseReactionJob into its microtask queue. Node controls when V8 runs those jobs through embedder checkpoints, as covered in the previous subchapter. At ordinary CommonJS and native-callback checkpoints, Node drains process.nextTick() first, then asks V8 to drain promise microtasks. Node's v24 process docs expose the same user-visible rule for queueMicrotask() and process.nextTick(): CJS drains nextTick first, while ESM top-level evaluation is already in microtask processing and can order differently. When the reaction job for the await runs, V8 restores the async function's saved context and resumes at the bytecode immediately after the await expression.

Fulfillment resumes as a value. Rejection resumes as a throw. That is why ordinary try/catch works across an await point:

async function loadName(id) {

try {

const user = await fetchUser(id);

return user.name;

} catch (e) {

return null;

}

}

V8 resumes the function inside the original try region. If the promise rejected, the resumed bytecode throws the rejection reason at the await site, and the catch block receives it through the normal exception path. JavaScript is still using exception machinery here; the asynchronous part is how control reaches that point again.

Older V8 versions paid more per await. Before V8 7.2, awaiting an already-fulfilled native promise involved extra promise allocation and extra microtask turns. V8's fast-async work changed both the implementation and the spec path for the native-promise case, reducing the common await path to one microtask turn. Node v24 has that later optimized path, plus follow-on work around promise resolution, async stack traces, and allocation.

The fast path applies to native promises from the current realm. Thenables take the full assimilation path because the engine must read and call the .then property. That property can run user code, throw, or resolve later, so V8 has to honor the protocol instead of treating it like a native promise.
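The difference is observable in ordering. The sketch below assumes a current V8 with the single-turn await path for native promises; the thenable object and function names are hypothetical.

```javascript
const order = [];

// A hand-written thenable: any object with a callable then().
const thenable = {
  then(onFulfilled) {
    order.push("then() called");
    onFulfilled("from thenable");
  },
};

async function awaitThenable() {
  await thenable;
  order.push("thenable resumed");
}

async function awaitNative() {
  await Promise.resolve("from native");
  order.push("native resumed");
}

// Start the thenable version first; it still resumes last.
const done = Promise.all([awaitThenable(), awaitNative()]).then(() => order);
done.then((o) => console.log(o.join(", ")));
// then() called, native resumed, thenable resumed
```

Calling the user-supplied then() costs one microtask job, and the continuation needs a second one, so the native-promise await overtakes it.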

The object layout is worth treating modestly. In current V8 source, JSAsyncFunctionObject extends JSGeneratorObject and adds the outer promise field. The generator part carries fields for the function, context, receiver, continuation, resume mode, and saved interpreter registers. Those names are implementation detail, not an API contract. Await resume handlers are created from shared builtins around the await operation, promise objects carry the reaction lists, microtask scheduling belongs to promise reactions, and suspended execution state belongs to the async function object.

Allocation usually starts in the young generation of V8's heap. Short async functions finish there and die cheaply. A function suspended on a slow network call may survive a young-generation collection and move to old generation. The object itself is small; retained locals count more. A suspended handler with a parsed request body, a large Buffer, and a closure over the request object can keep much more memory alive than the async machinery itself.

Async stack traces add another layer. A normal stack covers the current synchronous call stack. Await points split execution across microtask turns, so V8 stores metadata that lets it reconstruct the chain of async calls. When the chain crosses real await points, current Node.js releases can show frames like this:

Error: oops

at innerFn (file.js:12:11)

at async middleFn (file.js:8:20)

at async outerFn (file.js:3:18)

The async frames mark await points. Directly returning another promise can produce a shorter stack and omit intermediate async functions, which is one reason return await is still useful at error paths. The metadata turns a rejected async flow into a stack trace that names the logical callers, which is the useful part when the innermost function only says readFromReplica or parseConfig.

One low-level detail explains many ordering bugs: await resumes through a PromiseReactionJob, the same job type described in the promise chapter. Await continuations share ordering with .then() handlers and queueMicrotask(). Node's nextTick queue has priority at ordinary checkpoint edges, but it does not interrupt an active V8 microtask drain. That split explains most "why did this log first" cases in async/await code.
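A short sketch of the last point: a nextTick callback scheduled from inside a microtask waits until the current microtask drain finishes. The variable names are hypothetical; the ordering shown here does not depend on the module system because the scheduling happens inside a promise reaction.

```javascript
const seen = [];

const finished = new Promise((resolve) => {
  Promise.resolve().then(() => {
    // We are now inside V8's microtask drain.
    process.nextTick(() => {
      seen.push("nextTick");
      resolve(seen);
    });
    queueMicrotask(() => seen.push("microtask"));
  });
});

finished.then((s) => console.log(s.join(", "))); // microtask, nextTick
```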

Ordering Rules You Actually See

Code after await runs as a microtask.

console.log("1");

async function run() {

console.log("2");

await Promise.resolve();

console.log("3");

}

run();

console.log("4");

Output: 1, 2, 4, 3. Synchronous code runs first. The await continuation runs when the microtask queue drains.

When multiple async functions suspend, their continuations run in the order they enter the microtask queue.

async function a() {

console.log("a1");

await Promise.resolve();

console.log("a2");

}

async function b() {

console.log("b1");

await Promise.resolve();

console.log("b2");

}

a();

b();

The output is a1, b1, a2, b2. Both synchronous prefixes run immediately. Then the await continuations drain FIFO.

Extra awaits add extra turns. If x() prints before two awaits and y() prints before one await, calling x(); y(); can produce x1, y1, x2, y2, x3. x resumes, prints x2, hits another await, and goes to the back of the microtask queue. y resumes before x's second continuation.
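Written out, the x and y case looks like this. The sketch records the order in an array instead of logging each step, and assumes both functions await already-fulfilled native promises.

```javascript
const trace = [];

async function x() {
  trace.push("x1");
  await Promise.resolve();
  trace.push("x2");
  await Promise.resolve(); // second await: back of the microtask queue
  trace.push("x3");
}

async function y() {
  trace.push("y1");
  await Promise.resolve();
  trace.push("y2");
}

const both = Promise.all([x(), y()]);
both.then(() => console.log(trace.join(", "))); // x1, y1, x2, y2, x3
```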

Node-specific ordering still applies, but the module system is important for top-level examples. In CommonJS, process.nextTick() runs before V8 promise microtasks at the checkpoint after the script returns.

// CommonJS, or `node -e "..."`

async function run() {

await Promise.resolve();

console.log("await");

}

run();

process.nextTick(() => console.log("nextTick"));

CommonJS output: nextTick, await. The await queues a promise reaction, while process.nextTick() queues into Node's separate nextTick queue. Node drains nextTick first, then V8's microtask queue.

In an ES module, top-level module evaluation is already inside microtask processing. Node's docs call out that the same top-level source prints await, then nextTick. Inside normal callbacks entered from libuv, the ordinary checkpoint rule still applies after the callback returns.

Node's current process docs mark process.nextTick() as legacy. Prefer queueMicrotask() unless you need Node-specific nextTick priority or argument passing.

Fire-and-forget code changes ordering for a simpler reason: the async function starts immediately, runs until its first await, and then the caller continues without keeping a completion handle.

async function save(data) {

await db.insert(data);

console.log("saved");

}

save(myData);

console.log("continuing");

continuing prints before saved. That may be intended, but the failure path still needs an explicit catch when the promise is intentionally detached.

Error Handling

Use try/catch around the awaits whose failures you can handle.

async function loadUser(id) {

try {

const user = await fetchUser(id);

return user;

} catch (e) {

console.error("fetch failed:", e.message);

return null;

}

}

If fetchUser(id) rejects, the await expression throws and the catch block receives the rejection reason. If local catch blocks do not handle it, the async function's returned promise rejects.

async function loadUser(id) {

const user = await fetchUser(id);

return user;

}

loadUser(99).catch(e => console.error(e.message));

The rejection travels through returned promises until a caller handles it. Leave it unhandled and Node treats it as an unhandled rejection, covered in the previous subchapter.

Several awaits can share a catch block when the response to failure is the same. The catch block becomes the single handling point for that whole region, so keep the region small enough that the log message still identifies the operation that failed.

async function pipeline() {

try {

const raw = await fetchData();

const parsed = await parseData(raw);

return await saveData(parsed);

} catch (e) {

console.error("pipeline failed:", e);

}

}

Broad catch blocks are fine when every step has the same recovery. Use smaller regions when the message or recovery differs by step. The next version wraps only fetchData(). If parseData(raw) can reject or throw synchronously, that failure should be handled at its own boundary with its own message.

async function pipeline() {

let raw;

try {

raw = await fetchData();

} catch (e) {

throw new Error("fetch failed", { cause: e });

}

return parseData(raw);

}

The common bug is a promise that gets created and discarded.

async function processJob() {

doSomethingAsync();

console.log("done");

}

doSomethingAsync() starts, but its returned promise goes nowhere. If it rejects, local error handling is absent. Linters call these floating promises. Treat them as defects unless fire-and-forget is intentional and error handling is attached.

doSomethingAsync().catch(e => {

console.error("background task failed:", e);

});

A recurring legitimate use for return await is catching a returned promise inside the current function.

async function risky() {

try {

return await doSomethingAsync();

} catch (e) {

console.error("caught:", e);

}

}

With direct return, the try block observes only the synchronous call to doSomethingAsync(). A later rejection bypasses that catch and is handled by whoever awaits or catches the returned promise. With return await, the function stays inside the try region until the promise settles. Use that form when local cleanup, wrapping, logging, or stack quality is required.
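The two forms can be compared side by side. In this sketch, failLater is a hypothetical operation that always rejects after one microtask turn.

```javascript
// Hypothetical operation that always rejects after one microtask turn.
async function failLater() {
  await null;
  throw new Error("late failure");
}

async function directReturn() {
  try {
    return failLater(); // try only covers the synchronous call
  } catch (e) {
    return "caught locally";
  }
}

async function returnAwait() {
  try {
    return await failLater(); // the rejection is thrown here, inside try
  } catch (e) {
    return "caught locally";
  }
}

const outcomes = Promise.all([
  directReturn().catch((e) => "rejected: " + e.message),
  returnAwait(),
]);
outcomes.then((o) => console.log(o));
// [ 'rejected: late failure', 'caught locally' ]
```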

Direct return is leaner when the function only forwards a promise:

function getUser(id) {

return db.query("SELECT * FROM users WHERE id = ?", [id]);

}

An async wrapper gives you room for transformation or local error handling:

async function getUser(id) {

const row = await db.query("SELECT * FROM users WHERE id = ?", [id]);

return normalizeUser(row);

}

Both functions return promises to the caller. The second version also allocates async-function state. That cost is usually fine; in library internals processing very high call rates, measure it.

finally works with awaited cleanup.

async function withLock(resource, fn) {

await resource.lock();

try {

return await fn();

} finally {

await resource.unlock();

}

}

If fn() rejects and unlock() rejects too, the finally rejection wins. This is the same rule as synchronous finally: a throw during cleanup replaces the earlier error. Cleanup code should be small, tested, and noisy when it fails.
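A minimal sketch of that replacement rule, using a hypothetical resource whose unlock() always fails:

```javascript
// Hypothetical resource whose unlock() fails.
const resource = {
  async lock() {},
  async unlock() {
    throw new Error("unlock failed");
  },
};

async function withLock(res, fn) {
  await res.lock();
  try {
    return await fn();
  } finally {
    await res.unlock(); // a rejection here replaces the error from fn()
  }
}

const observed = withLock(resource, async () => {
  throw new Error("work failed");
}).catch((e) => e.message);

observed.then((m) => console.log(m)); // unlock failed
```

The caller never sees "work failed". If the original error is the one that needs to surface, the cleanup step has to catch and report its own failure.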

Cleanup often belongs in finally even when the function returns from try. The return await fn() form keeps the protected operation inside the try region until it settles; direct return fn() can run cleanup before the returned promise has finished. That behavior is important for locks, temporary files, spans, transactions, and file handles.

Patterns That Count

Sequential awaits are explicit, and sometimes they are exactly the right shape.

for (const migration of migrations) {

await runMigration(migration);

}

Database migrations, ordered writes, and rate-limited calls often need one operation to finish before the next starts.

Independent work should start together.

async function fetchAll(urls) {

const responses = await Promise.all(

urls.map(url => fetch(url))

);

return Promise.all(responses.map(r => r.json()));

}

Calling fetch(url) starts the work. await waits for the result. When operations are independent, collect the promises first and await them together. Subchapter 06 covers combinators in detail, including failure behavior and bounded concurrency.

That difference between start and wait is easy to lose because await fetch(url) looks like one operation. The function call starts the operation; await observes completion. Keeping those roles separate is what lets you overlap independent work.
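The start and wait roles can be made visible by counting overlapping calls. The task function below is a hypothetical stand-in for any independent async operation; it uses a short timer instead of real I/O.

```javascript
let inFlight = 0;
let peak = 0;

// Hypothetical task: tracks how many calls overlap, then finishes after 10 ms.
async function task(label) {
  inFlight += 1;
  peak = Math.max(peak, inFlight);
  await new Promise((resolve) => setTimeout(resolve, 10));
  inFlight -= 1;
  return label;
}

async function sequential() {
  peak = 0;
  const a = await task("a"); // start, then wait
  const b = await task("b"); // starts only after a has finished
  return { results: [a, b], peak };
}

async function overlapped() {
  peak = 0;
  const pa = task("a"); // start both first
  const pb = task("b");
  const results = await Promise.all([pa, pb]); // then wait
  return { results, peak };
}

const comparison = sequential().then(async (seq) => ({
  sequential: seq,
  overlapped: await overlapped(),
}));
comparison.then((c) => console.log(c.sequential.peak, c.overlapped.peak)); // 1 2
```

Both versions return the same results; only the peak number of operations in flight differs.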

Array iteration methods deserve suspicion around async callbacks.

urls.forEach(async (url) => {

const res = await fetch(url);

console.log(await res.text());

});

console.log("done");

forEach ignores the promises returned by the async callback, so the final log runs immediately. Rejections inside the callbacks become unhandled rejections unless each callback catches them. filter, some, and every do not await predicate promises either; promise objects are truthy, so the result is usually wrong. sort expects a numeric comparator result, not a promise. Use for...of for sequential work or Promise.all(urls.map(...)) for concurrent work.
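The filter case is easy to reproduce. In this sketch, isEven is a hypothetical async predicate; the workaround resolves every predicate result first and filters synchronously afterwards.

```javascript
const nums = [1, 2, 3, 4];

// Hypothetical async predicate.
const isEven = async (n) => n % 2 === 0;

// filter() sees a promise for every element, and promises are truthy.
const wrong = nums.filter(isEven);
console.log(wrong); // [ 1, 2, 3, 4 ]

// Resolve the predicate results first, then filter synchronously.
const right = Promise.all(nums.map(isEven)).then((flags) =>
  nums.filter((_, i) => flags[i])
);
right.then((evens) => console.log(evens)); // [ 2, 4 ]
```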

Top-level await in ES modules makes async IIFEs less common, but CommonJS still uses the pattern:

(async () => {

const config = await loadConfig();

const server = await startServer(config);

console.log("listening on", server.address().port);

})();

That pattern is fine in scripts and older CommonJS modules. In ESM on Node v24, module-scope await is available.

Avoid wrapping async code in a fresh Promise constructor.

async function getData(url) {

return new Promise(async (resolve) => {

const data = await fetch(url);

resolve(data);

});

}

The outer async function already returns a promise. The async executor creates another promise path and can lose rejections in ugly ways. Write the function directly.

async function getData(url) {

return fetch(url);

}

Wrap callback APIs with a promise when the source is callback-based. Avoid wrapping promises with more promises.
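A legitimate use of the constructor looks like the sketch below. readSetting is a hypothetical callback-style function that follows the Node (err, value) convention; the executor stays synchronous and settles the promise from inside the callback.

```javascript
// Hypothetical callback-style API using the Node convention (err, value).
function readSetting(key, callback) {
  setTimeout(() => {
    if (key === "missing") callback(new Error("no such key: " + key));
    else callback(null, key.toUpperCase());
  }, 0);
}

// The executor is synchronous and calls resolve or reject exactly once.
function readSettingAsync(key) {
  return new Promise((resolve, reject) => {
    readSetting(key, (err, value) => {
      if (err) reject(err);
      else resolve(value);
    });
  });
}

const wrapped = Promise.all([
  readSettingAsync("port"),
  readSettingAsync("missing").catch((e) => e.message),
]);
wrapped.then((r) => console.log(r)); // [ 'PORT', 'no such key: missing' ]
```

For functions that already follow this callback convention, util.promisify() from node:util produces the same kind of wrapper without hand-written constructor code.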

An async Promise executor breaks the error path. The executor passed to new Promise() is expected to call resolve or reject directly. Marking that executor async makes it return its own promise, which the constructor ignores. If the executor throws after an await, that rejection belongs to the executor's promise, while the outer promise can remain pending depending on the code path. The bad failure mode is a promise that neither fulfills nor rejects.

Production Shape

In production Node v24 code, async/await should be the default shape for application logic. It matches the ecosystem: HTTP clients, database drivers, queues, test runners, and framework hooks mostly speak promises now.

Performance concerns usually point somewhere else. Network, disk, database, and timer latency normally dominate await continuation overhead. If profiling says async overhead is visible, the first fix is usually batching, limiting concurrency, or removing accidental sequential waits. Rewriting readable async/await code into raw .then() chains buys little outside library internals and tight loops.

await does not cancel work. It waits for the promise you give it. Cancellation must be part of the API you call, usually through an AbortSignal or a driver-specific cancellation handle.

async function fetchJsonWithTimeout(url, ms) {

const controller = new AbortController();

const timer = setTimeout(() => controller.abort(), ms);

try {

const response = await fetch(url, { signal: controller.signal });

return await response.json();

} finally {

clearTimeout(timer);

}

}

Memory Around Await

Memory needs more attention than the syntax suggests. Every suspended async function can retain locals that remain live across the suspension. Keep scopes lean around awaits, and drop large references before long waits.

async function handle(req) {

let body = await readBody(req);

const parsed = parseRequest(body);

body = null;

return await db.save(parsed); // the function stays suspended here while the save is pending

}

Setting body = null makes the intent plain: the raw payload can die before the database await. V8 may still make its own optimization choices, but removing the reference gives the collector permission.

Figure 3 — Locals that remain reachable across an await stay alive with the suspended async-function state; clearing large references before a long wait can reduce retained memory.

Large fan-outs need bounds.

const results = await Promise.all(

items.map(item => transform(item))

);

For 100 items, this is fine. For 100,000 items, it starts 100,000 calls at once and hands Promise.all() 100,000 promises. If transform is async and suspends, it can also create a large number of suspended continuations. Batch the work or use a concurrency limiter. The thread pool, database, remote API, and heap all have limits, even when the syntax makes the fan-out one line.

A simple batch shape is boring and effective:

for (let i = 0; i < items.length; i += 100) {

const batch = items.slice(i, i + 100);

await Promise.all(batch.map(item => transform(item)));

}

That keeps at most 100 transforms in flight. Each batch waits for its slowest item before the next batch starts. The right number depends on the downstream system. Databases, queues, and APIs usually tell you the limit through latency, errors, or rate-limit headers.
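A concurrency limiter is the alternative to fixed batches. The following is a minimal sketch, not a library API: mapWithLimit is a hypothetical helper that keeps a fixed number of lanes pulling from a shared index, so a slow item delays only its own lane.

```javascript
// Hypothetical helper: runs `worker` over `items` with at most `limit` calls in flight.
async function mapWithLimit(items, limit, worker) {
  const results = new Array(items.length);
  let next = 0;

  // Each lane pulls the next index until the input is exhausted.
  async function lane() {
    while (next < items.length) {
      const i = next++;
      results[i] = await worker(items[i], i);
    }
  }

  const lanes = Array.from({ length: Math.min(limit, items.length) }, lane);
  await Promise.all(lanes);
  return results;
}

// Usage with a hypothetical async worker that records peak concurrency.
let active = 0;
let peakActive = 0;
const limited = mapWithLimit([1, 2, 3, 4, 5, 6, 7], 3, async (n) => {
  active += 1;
  peakActive = Math.max(peakActive, active);
  await new Promise((resolve) => setTimeout(resolve, 5));
  active -= 1;
  return n * 10;
});
limited.then((out) => console.log(out, peakActive));
```

In this sketch a rejected worker rejects the returned promise while the other lanes keep running until they hit their own next item, so production code may want an abort flag or an AbortSignal passed to the worker.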

Production defaults:

- Use async/await for request handlers and business logic.

- Treat floating promises as bugs unless they have an explicit .catch().

- Use return await inside try/catch or finally; return the promise directly elsewhere.

- Use Promise.all() for independent work, with bounded concurrency for large batches.

- Pass cancellation signals or driver cancellation handles across long waits.

- Keep large buffers and parsed payloads out of scope before long awaits.

- Prefer for...of for ordered async loops.

- Avoid async callbacks with forEach, filter, some, every, and sort unless the API explicitly consumes promises.

- Profile before replacing async/await with .then() for speed.

Cost Model

Each await normally involves a promise reaction, a microtask turn, and a suspend/resume of async function state. Node v24 makes this path cheap enough for ordinary application code, but it is not free.

Raw .then() chains can sometimes win in microbenchmarks because they avoid the async function object and some state restoration. The size and direction of that difference depends on the Node and V8 version, the shape of the function, and whether promise hooks, async hooks, or debugging are active. Benchmark under the target runtime before trading readable async/await code for hand-built chains.

The cost becomes visible in code that creates huge numbers of async function instances with tiny bodies:

const out = await Promise.all(

items.map(async item => transformSyncPart(item))

);

If transformSyncPart() is effectively synchronous, the async callback adds promise and async-function allocation for no scheduling benefit. Use a synchronous map() for synchronous work. Keep async functions for work that crosses an async handoff.

The more common performance failure is accidental serialization:

for (const user of users) {

await sendEmail(user);

}

This is correct for rate-limited or ordered sends and slow for independent sends. Syntax gives you no proof of intent; the data dependency tells you.

Debugging has improved enough that keeping async/await is usually worth it. Async stack traces are enabled by default in current Node.js releases. The inspector can step across await points and show locals while a function is suspended. You get readable source and usable stack traces, which beats a hand-built promise chain in most application code.