Node.js EventEmitter: Listeners, Errors & Leak Warnings

EventEmitter is Node's synchronous dispatch primitive. It maps event names to listener functions, then calls those listeners immediately, in registration order, when emit() runs. Streams, servers, sockets, child processes, and many userland APIs build on that same shape to expose repeated state changes and lifecycle events.

EventEmitter Internals

Two details shape most real-world EventEmitter bugs. An unhandled 'error' event throws, and listener-count warnings are a leak signal rather than a hard limit. The warning appears when too many listeners collect under one event name, because that often means code is registering listeners repeatedly without removing them.

Most core I/O objects developers use directly, including servers, streams, sockets, and child processes, inherit this behavior. The implementation lives in lib/events.js in the Node.js source. The surface API (on, emit, off) hides the part people most often forget: emit() is synchronous. A listener runs on the same call stack as the code that emitted the event.

The streams chapters introduced EventEmitter as a user-facing pattern. This subchapter follows the mechanism underneath it: the internal storage, the dispatch path, the error event contract that can crash a process, and the way EventEmitter sits beside callbacks, promises, and async/await as a coordination pattern.

The _events Object

The fields in this section describe Node v24's current implementation, not a public API contract. _events, _eventsCount, _maxListeners, and the storage layout explain the behavior, but production code should use the documented inspection methods instead of depending on these private fields.

When you create a new EventEmitter, three internal properties get initialized:

const EventEmitter = require('node:events');

const ee = new EventEmitter();

console.log(ee._events); // [Object: null prototype] {}

console.log(ee._eventsCount); // 0

console.log(ee._maxListeners); // undefined

The shorter examples that follow assume this same EventEmitter import and an emitter instance named ee unless they show their own setup.

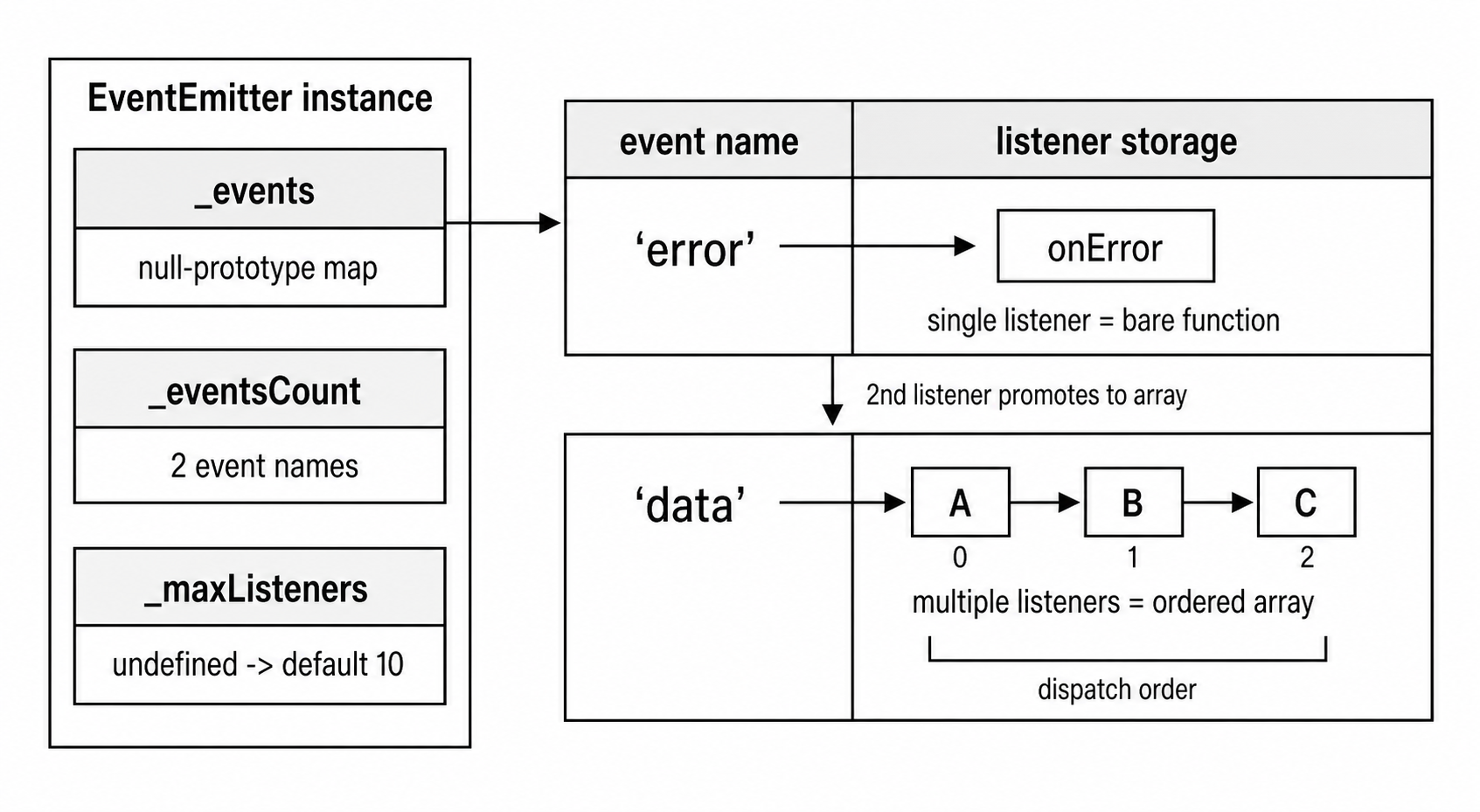

The first field, _events, is a null-prototype object. The Node v24 source creates it with the equivalent of { __proto__: null }. That object has no inherited toString, no inherited hasOwnProperty, and no inherited constructor. This avoids prototype-chain collisions where an event named toString, valueOf, or constructor could otherwise find an inherited property instead of a registered listener. With a null-prototype object, a lookup that does not find a directly set key returns undefined.

The keys of _events are event names, and the values are either a single function or an array of functions. The optimization that surprises people is the single-listener path: when only one listener exists for an event, Node stores the function directly on the key, with no array wrapper. The second listener promotes that value to an array. Removing listeners can demote it back to a single function. This avoids array allocations for the common case where an event has exactly one listener, such as many 'error', 'close', or 'finish' handlers on streams.

Beside the storage object, _eventsCount tracks the number of event names with at least one listener. Node can use that integer when it only needs to know whether any event names exist. For example, eventNames() can return an empty array immediately when _eventsCount is 0 instead of iterating _events.

The third field, _maxListeners, starts as undefined, which means "use the default." The default is 10, pulled from EventEmitter.defaultMaxListeners. You can override it per instance with setMaxListeners(). Setting it to 0 or Infinity disables the warning entirely, but the warning is often the first visible sign of listener growth, so disabling it should be deliberate.
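As a small sketch of the documented way to read and change that threshold (the emitter name bus is only illustrative), the default and a per-instance override can be checked through getMaxListeners() instead of the private field:

```javascript
const EventEmitter = require('node:events');

const bus = new EventEmitter();

// Until the instance overrides it, the process-wide default applies.
const inherited = bus.getMaxListeners();

// Raise the threshold for this one emitter only.
bus.setMaxListeners(25);
const overridden = bus.getMaxListeners();

console.log(inherited === EventEmitter.defaultMaxListeners); // true
console.log(overridden); // 25
```

Raising the limit on a single emitter that legitimately has many subscribers is usually a smaller change than raising EventEmitter.defaultMaxListeners for the whole process.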

Figure 7.10 — EventEmitter keeps a small event-name map beside listener-count state. A name with one listener can point directly at a function; multiple listeners become an ordered listener array.

EventEmitter.init()

Construction does little beyond setting up those fields. EventEmitter.init() is called by the constructor, and subclass constructors can call it directly. It checks whether _events is missing or inherited from the prototype. In either case, it assigns a fresh null-prototype object and sets _eventsCount to 0. It also initializes _maxListeners to undefined unless the instance already has a value.

The inherited-property check exists because of JavaScript prototype inheritance. If a subclass prototype has an _events object, every instance would otherwise see that same object through the prototype chain. The guard detects this by comparing this._events === ObjectGetPrototypeOf(this)._events. When they match, the instance is seeing an inherited _events rather than its own, so init() creates a fresh one. Without this check, two instances of the same subclass could accidentally share event registrations through their shared prototype.

Because these fields are regular enumerable properties, they show up in places that can surprise you: Object.keys(), spread operations, and some serialization paths. JSON.stringify() includes enumerable values that can be represented as JSON, so a fresh emitter serializes _events and _eventsCount but omits _maxListeners while it is undefined. If you serialize an EventEmitter subclass, add a toJSON() method or explicitly filter these implementation fields.
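A minimal sketch of the toJSON() approach follows. The Job class and its id field are hypothetical and stand in for whatever domain data a real subclass carries:

```javascript
const EventEmitter = require('node:events');

// Hypothetical subclass: the name Job and the id field are illustrative only.
class Job extends EventEmitter {
  constructor(id) {
    super();
    this.id = id;
  }

  // Serialize only the domain data, not the emitter bookkeeping fields.
  toJSON() {
    return { id: this.id };
  }
}

const serialized = JSON.stringify(new Job(7));
console.log(serialized); // {"id":7}
```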

Listener Registration

on() and addListener()

on(eventName, listener) and addListener(eventName, listener) are the same function. addListener is an alias assigned to the same method reference. Both add the listener to the end of the listener set for that event name. If no listener exists yet, the function is stored directly. If one listener already exists as a bare function, the value is promoted to a two-element array.

const ee = new EventEmitter();

function fn1() {}

function fn2() {}

ee.on('data', fn1);

console.log(ee._events.data === fn1); // true - bare function

ee.on('data', fn2);

console.log(Array.isArray(ee._events.data)); // true - promoted

console.log(ee._events.data.length); // 2

That storage choice does not change ordering. Listeners fire in the order they were added. on() appends to the end. prependListener() inserts at the beginning with array unshift() semantics, so the prepended listener fires first. prependOnceListener() does the same thing with once-only semantics. The prepend variants exist for code that must observe or intercept an event before later handlers process it, such as logging middleware or validation checks.
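The ordering rules can be checked with a short sketch. The 'save' event name and the labels pushed into the array are made up for the example:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
const order = [];

ee.on('save', () => order.push('appended first'));
ee.on('save', () => order.push('appended second'));
// Registered last, but placed at the front of the listener list.
ee.prependListener('save', () => order.push('prepended'));

ee.emit('save');
console.log(order.join(' -> ')); // prepended -> appended first -> appended second
```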

Every registration path also validates the listener before touching emitter state. Passing anything other than a function, such as a string, a number, or undefined, throws a TypeError. The validation happens inside _addListener, the internal function that all registration methods funnel through, and it runs synchronously before the 'newListener' event fires.
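A quick way to see that validation, sketched with a deliberately invalid listener:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
let caught;

try {
  // A string is not a function, so registration is rejected up front.
  ee.on('data', 'not a function');
} catch (err) {
  caught = err;
}

console.log(caught instanceof TypeError); // true
console.log(caught.code); // ERR_INVALID_ARG_TYPE
console.log(ee.listenerCount('data')); // 0, emitter state was never touched
```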

The newListener Event

After validation but before the listener is actually added, EventEmitter emits a 'newListener' event with the event name and listener function as arguments. This fires before the listener is appended to the array. That ordering is intentional: if the 'newListener' handler calls on() for the same event, the listener it adds is inserted before the listener that triggered the 'newListener' emission.

ee.on('newListener', (event, listener) => {

console.log(`Adding listener for ${event}`);

});

ee.on('connection', () => {});

// logs: "Adding listener for connection"

The 'newListener' event fires for every on(), once(), addListener(), prependListener(), and prependOnceListener() call, and it fires before the listener is added. Its counterpart, 'removeListener', fires after a listener is removed. That before/after asymmetry is documented behavior.

The before/after split gives each hook a different view of state. 'newListener' runs while the new listener is still pending, so it can observe or augment registration by adding a different listener for the same event before the one currently being registered. It cannot cancel the pending registration by calling off(), because that listener is not in the emitter yet. 'removeListener' runs after removal, so the emitter state already reflects the change.
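The "added before the pending listener" behavior is easiest to see in a small sketch. The 'job' event name is hypothetical, and once() is used for the hook so it does not fire again for the listener it registers itself:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
const order = [];

// The hook runs while the 'job' listener below is still pending.
ee.once('newListener', (event) => {
  if (event === 'job') {
    ee.on('job', () => order.push('added by hook'));
  }
});

ee.on('job', () => order.push('original'));
ee.emit('job');

console.log(order.join(' -> ')); // added by hook -> original
```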

These hooks mostly belong to debugging and instrumentation. Diagnostic tools can use 'newListener' to track which events are being listened to across an application, and APM libraries may use it to instrument event handlers. Production application code rarely needs it directly, but tooling sometimes needs to observe registration without changing the call site that registered the listener.

There is one sharp edge in that self-observation. Once a 'newListener' handler is registered, later registrations for 'newListener' also emit 'newListener'. If that handler keeps adding more 'newListener' listeners without a guard, it can create an infinite loop. The source does not guard against this; the listener has to.

once()

once(eventName, listener) keeps the same registration shape but changes what gets stored. Instead of storing your listener directly, EventEmitter creates a wrapper around it. When the wrapper is called, it first removes itself with off(), then calls your original function. The listener fires exactly one time, then it is gone.

ee.once('ready', () => {

console.log('fired once');

});

ee.emit('ready'); // logs "fired once"

ee.emit('ready'); // nothing

Because the stored function is the wrapper, Node keeps a link back to your original function. The wrapper has a .listener property pointing at the original. removeListener() checks both the stored wrapper and that .listener property when searching for a match. Without this, you would have no way to remove a once() listener before it fires, because the function stored in _events would not be the function you passed to once().

When emit() reaches a once() listener, the sequence is:

1. emit() encounters the wrapper function in the listeners array (or as the bare function).
2. The wrapper calls this.removeListener(type, wrapper), which removes the wrapper from _events.
3. The wrapper then calls listener.apply(this, args), so your original function runs.
4. If your function throws, it has already been removed. The throw propagates, but the listener is gone regardless.

The order is important because removal happens before user code runs. If your once() listener throws, the listener has already been removed. The error propagates, but the listener will not fire again even if you catch the error and re-emit the event. If the listener itself emits the same event on the same emitter, the once listener is already gone, so it will not recurse.
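A short sketch of the remove-then-call order, using a once() listener that always throws:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
let calls = 0;

ee.once('ready', () => {
  calls += 1;
  throw new Error('listener failed');
});

try {
  ee.emit('ready');
} catch (err) {
  console.log(err.message); // listener failed
}

// The wrapper removed itself before the listener body ran.
const remaining = ee.listenerCount('ready');
const secondEmit = ee.emit('ready');

console.log(remaining, secondEmit, calls); // 0 false 1
```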

off() and removeListener()

off() is an alias for removeListener(). It searches the listener set for a match, using === strict equality and checking .listener for once() wrappers. It removes one matching registration. If one listener remains, the array is demoted back to a bare function. If no listeners remain, the key is deleted from _events and _eventsCount is decremented.

The "one matching registration" rule is important when the same function has been registered more than once for the same event. Calling off() once removes only one occurrence. In Node v24, removal walks from the end of the listener array, so the most recently added matching listener is removed first. The other registration stays.
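The removal rule can be observed through listeners() without reading private state. This is a sketch with two placeholder functions, a and b:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
const a = () => {};
const b = () => {};

ee.on('tick', a);
ee.on('tick', b);
ee.on('tick', a); // same function registered a second time

ee.off('tick', a); // removes only the most recently added `a`

const left = ee.listeners('tick');
console.log(left.length); // 2
console.log(left[0] === a, left[1] === b); // true true
```

Removing every registration of a function therefore takes one off() call per registration.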

If removal happens during an emit() call, the in-progress emission keeps a stable view of the listener set that existed when it began. Subsequent emissions see the updated listener set.

rawListeners()

That wrapper behavior also creates a difference during inspection. rawListeners() returns a copy of the listeners array including the wrapper functions for once() registrations. listeners() unwraps once() wrappers and returns the original listener functions. If you need to inspect the wrapper that Node installed, use rawListeners(). The wrappers created by once() have a .listener property pointing back to the original function, but a .listener property alone does not prove a function came from once(); user code can put that property on a normal listener too.
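The difference between the two inspection methods shows up as soon as a once() listener is registered. A minimal sketch, with onReady as a placeholder listener:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
function onReady() {}

ee.once('ready', onReady);

const [unwrapped] = ee.listeners('ready');
const [raw] = ee.rawListeners('ready');

console.log(unwrapped === onReady); // true: listeners() unwraps
console.log(raw === onReady); // false: rawListeners() returns the wrapper
console.log(raw.listener === onReady); // true: link back to the original

// Removing by the original function works because of that link.
ee.off('ready', onReady);
console.log(ee.listenerCount('ready')); // 0
```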

Synchronous Dispatch: emit()

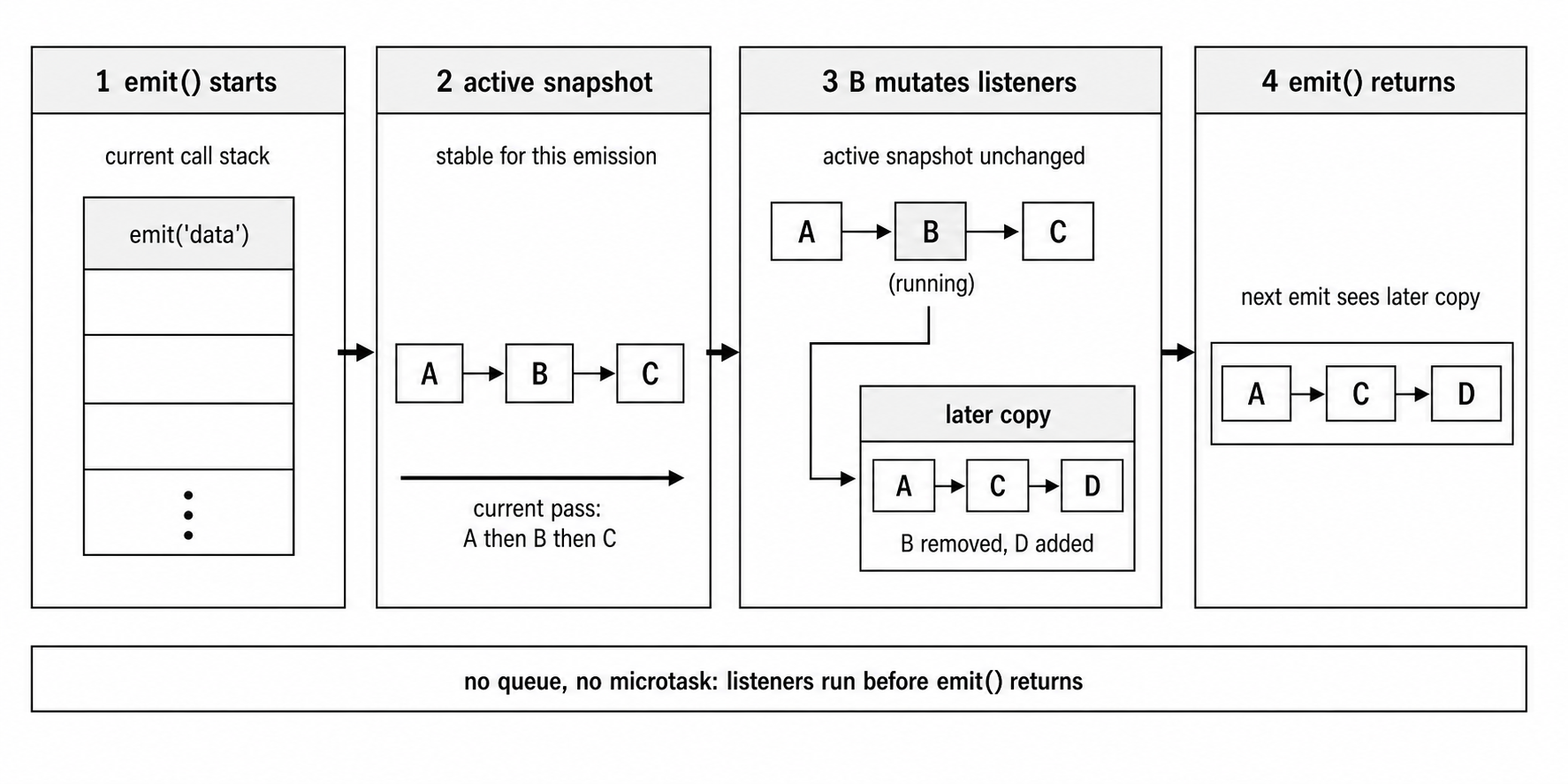

The behavior that ties those registration details together is synchronous dispatch. When you call emit('data', chunk), every listener registered for 'data' runs on the current call stack, in registration order, before emit() returns. There is no queueing, no deferring, and no microtask scheduling.

ee.on('tick', () => console.log('A'));

ee.on('tick', () => console.log('B'));

console.log('before');

ee.emit('tick');

console.log('after');

// before -> A -> B -> after

The ordering is deterministic. 'A' and 'B' print between 'before' and 'after'. The emit() call does not return until both listeners have finished executing. If listener A takes 500ms of synchronous computation, listener B waits. Everything after the emit() call waits. The entire call stack above emit() is blocked.

The name can be misleading here. EventEmitter is event-driven, but listener dispatch is not automatically asynchronous. emit() is a synchronous function-call loop.

That synchronous model affects application behavior. If a stream has a 'data' listener that does heavy processing, the stream cannot deliver the next chunk until the listener returns. In a server, a slow 'connection' listener blocks JavaScript from processing later connection events until that listener returns; the kernel may still queue connections according to the socket backlog. The event loop (covered in Chapter 1) cannot advance to the next phase while the listener is running.

The blocking behavior is visible in a small example:

ee.on('work', () => {

const start = Date.now();

while (Date.now() - start < 200) {} // 200ms spin

console.log('listener done');

});

console.log('before emit');

ee.emit('work');

console.log('after emit'); // 200ms later

The 'after emit' line prints 200ms after 'before emit', because emit() does not return until the busy-wait loop completes. This is the same behavior you would get from a plain function call, because emit() is a loop of function calls.

How emit() Actually Works

The implementation in lib/events.js follows this shape:

1. If the event name is 'error', handle the special error contract (more on this later).
2. Look up this._events[type]. If nothing's there, return false.
3. If it's a single function, call it with the provided arguments.
4. If it's an array, mark that array as currently emitting, iterate it in order, then clear the emitting marker.

The mutation handling in step 4 is where the dispatch path becomes more than a plain for loop. In Node v24, the implementation does not eagerly copy the listener array on every multi-listener emit(). Instead, it tracks when an array is being emitted. If code mutates that listener array during emission, Node clones the array at mutation time and updates _events[type] to point at the new mutable copy. The in-progress emit() keeps iterating the original array, so listeners attached when emission began still run in order.

ee.on('test', function handler() {

ee.off('test', handler); // remove self during emit

});

ee.on('test', () => console.log('second'));

ee.emit('test'); // "second" still fires

The second listener fires even though the first listener removed itself. The in-progress emit() behaves as if it is iterating over the listener set that existed when emit() was invoked. Listeners added during an emit() do not fire for the current emission; they are visible on the next one.

Without that isolation, ordinary array mutation would make listener ordering fragile. Suppose the active listeners are [A, B, C]. The loop is at index 0, calling A. A removes itself, and the active array shifts to [B, C]. The loop increments to index 1, which is now C, so B is skipped. Node's mutation isolation prevents that class of bug while avoiding an unconditional array copy on the common dispatch path.
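The same isolation applies to listeners added mid-emit. In this sketch the first listener registers a late listener exactly once, guarded by a flag that exists only for the example:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
const log = [];
let added = false;

ee.on('tick', () => {
  log.push('first');
  if (!added) {
    added = true;
    // Registered mid-emit: not part of the pass that is already running.
    ee.on('tick', () => log.push('late'));
  }
});
ee.on('tick', () => log.push('second'));

ee.emit('tick');
const afterFirstEmit = log.join(',');

ee.emit('tick');
const afterSecondEmit = log.join(',');

console.log(afterFirstEmit); // first,second
console.log(afterSecondEmit); // first,second,first,second,late
```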

Figure 7.11 — emit() runs on the current call stack and walks the listener set in order. If that listener set is changed mid-emit, the active pass keeps its stable view while later emissions see the updated copy.

Arguments and Return Value

emit() passes all arguments after the event name directly to each listener. There is no cloning, wrapping, or intermediate object. If you pass an object as an argument, every listener receives the same object reference, so mutating it in one listener affects the listeners that run later:

ee.on('req', (ctx) => { ctx.modified = true; });

ee.on('req', (ctx) => { console.log(ctx.modified); }); // true

ee.emit('req', { modified: false });

This shared-reference behavior is intentional and matches how function arguments work in JavaScript generally. It also means listeners can interfere with each other. A listener that modifies its arguments is modifying the same object that later listeners see. HTTP middleware stacks have a related shared-reference hazard: each layer receives the same req and res objects, so mutation order is significant even though the dispatch mechanism is different. In general-purpose EventEmitter usage, mutating shared arguments is a common source of ordering bugs.

The return value of emit() is a boolean: true if at least one listener was registered for the event, false otherwise. That result is useful for the error event pattern, and it also gives userland code a cheap way to know whether an optional notification had any subscribers. Node internals often use more direct listener-state checks for their own behavior; for example, Readable streams track 'data' listener presence when deciding whether to enter flowing mode.
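A minimal sketch of reading that boolean, with 'progress' as a made-up optional notification:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();

// Nobody is subscribed yet, so emit() reports false.
const withoutListener = ee.emit('progress', 50);

ee.on('progress', () => {});
const withListener = ee.emit('progress', 75);

console.log(withoutListener, withListener); // false true
```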

When a Listener Throws

Synchronous dispatch also means synchronous failure. If any listener throws an exception, emit() stops iterating. The remaining listeners for that event do not fire. The exception propagates up through emit() to whatever code called it. There is no try/catch inside emit(); the error surfaces naturally through the call stack.

So a throwing listener in position 2 of 5 prevents listeners 3, 4, and 5 from running. The caller of emit() needs to handle the error or let it propagate to the process-level uncaughtException handler (covered in Chapter 1). There is no mechanism to "resume" emission after a throw; the remaining listeners are simply skipped.
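The skipped-listener effect can be reproduced with three listeners, where the second one throws. This is an illustrative sketch, and the 'step' event name is arbitrary:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
const ran = [];

ee.on('step', () => ran.push(1));
ee.on('step', () => {
  ran.push(2);
  throw new Error('listener 2 failed');
});
ee.on('step', () => ran.push(3)); // never reached

let caught;
try {
  ee.emit('step');
} catch (err) {
  caught = err;
}

console.log(ran.join(',')); // 1,2
console.log(caught.message); // listener 2 failed
```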

That choice keeps failures visible. Adding a try/catch around each listener call would mask errors and make debugging harder. If a listener throws after partially modifying shared data, silently continuing to later listeners could produce corrupted state. Stopping emission and surfacing the error is the safer default.

If you genuinely need fault isolation between listeners, you have to implement it yourself:

for (const listener of ee.listeners('data')) {

try {

listener(chunk);

} catch (err) {

console.error('Listener failed:', err);

}

}

That wrapper changes the semantics, so it should be a deliberate design choice. Most code relies on the default behavior of stopping on throw.

Walking Through lib/events.js

The source for EventEmitter lives in lib/events.js. The file is around 1,200 lines, and much of it is devoted to input validation, deprecation warnings, and edge case handling. The core logic is smaller than the file size suggests. The implementation details below match Node v24; public API contracts are the stable part, while helper names and private markers can change across releases.

The _addListener Internal

All listener registration routes through an internal _addListener function. It takes the target emitter, the event name, the listener function, and a boolean prepend flag. In broad strokes, it:

1. Checks that listener is a function. Throws TypeError if not.
2. Gets or creates the _events object.
3. If a 'newListener' event exists on the emitter, emits it first (before adding the new listener).
4. Looks up existing = events[type].
5. If existing is undefined, sets events[type] = listener and increments _eventsCount.
6. If existing is a function (single listener), converts to array: events[type] = prepend ? [listener, existing] : [existing, listener].
7. If existing is an array, either pushes (append) or unshifts (prepend) the new listener.
8. After adding, checks the listener count against _maxListeners. If exceeded and a warning hasn't been issued for this event yet, fires process.emitWarning().

The final warning check uses a flag on the listener array itself, the warned property, so the warning fires only once per event name. Once you have been warned about having 11 listeners on 'data', adding the 12th or 13th listener does not produce another warning. The warning resets if all listeners are removed and then re-added past the threshold.

The 'newListener' step controls the observable state during registration. Because that emission happens before the listener is added to _events, the handler sees the emitter state before the new listener exists. If the handler calls listenerCount() for the event being added, it returns the count without the new listener. The new listener appears in _events only after the 'newListener' emission returns and _addListener performs the storage update.

That same ordering means that if the 'newListener' handler throws, the listener never gets added. The throw propagates out of on() / once() / addListener(), and the emitter state has not been modified.
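Both effects, the pre-registration view and the aborted registration, can be seen in one sketch. The hook below is hypothetical: it rejects every 'data' registration purely to demonstrate the ordering.

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
let countSeenByHook;

ee.on('newListener', (event) => {
  if (event !== 'data') return;
  // The pending listener is not stored yet, so it is not counted here.
  countSeenByHook = ee.listenerCount('data');
  throw new Error('registration rejected');
});

let caught;
try {
  ee.on('data', () => {});
} catch (err) {
  caught = err;
}

console.log(countSeenByHook); // 0
console.log(caught.message); // registration rejected
console.log(ee.listenerCount('data')); // 0, the listener was never added
```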

The emit() Implementation

The actual emit method on EventEmitter.prototype uses rest parameters: function emit(type, ...args). The signature is simple. The optimization lives in dispatch, not in argument handling.

The single-listener case, a bare function in _events[type], calls the handler directly with no array handling. For multiple listeners, Node increments an internal emitting counter on the array, iterates it in order, and decrements the counter afterward. If listener registration or removal mutates that same event while emission is in progress, the mutation path clones the listener array before changing it. The internal arrayClone() helper has optimized cases for small listener arrays and falls back to ArrayPrototypeSlice for larger ones.

The difference between a single handler and an array of handlers is the main fast path. Many events on many emitters have exactly one listener, so the array path is skipped entirely in the common case. That is important because EventEmitter dispatch appears in hot I/O-facing paths: stream chunks, socket lifecycle events, server connection events, child-process events, and userland event forwarding all run through this machinery.

The method sets this to the emitter instance for each listener call. When you write:

ee.on('event', function() {

console.log(this === ee); // true

});

Inside that listener, this is the emitter. Arrow functions do not take the this value that emit() supplies, so the binding applies only to regular functions. In older code, the this binding was how listeners accessed the emitter instance. Modern code often uses arrow functions and captures the emitter in a closure instead, making the binding irrelevant for many new call sites. It is still part of the behavior, and older libraries depend on it.

Error Event Special Handling

Before normal listener dispatch, emit() checks whether type === 'error'. That branch handles the special error event contract. If events.errorMonitor listeners exist, Node emits to that monitor symbol first, but monitor listeners do not count as 'error' handlers. If no normal 'error' listener exists, the implementation does the following:

- If the first argument (er) is an instance of Error, throws it directly.
- If er is something else (a string, a number, undefined), creates an ERR_UNHANDLED_ERROR wrapper whose message includes the inspected value.
- Sets the wrapper's .context property to the original value, then throws the wrapper.

That is why emit('error', 'connection refused') produces a message like "Unhandled error. ('connection refused')" instead of throwing the string directly. The thrown wrapper also has code: 'ERR_UNHANDLED_ERROR', and its .context property lets you recover the original value if needed.
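A sketch of that path, with an errorMonitor listener attached to show that monitoring does not count as handling:

```javascript
const { EventEmitter, errorMonitor } = require('node:events');

const ee = new EventEmitter();
const observed = [];

// Monitor listeners see the value but do not count as 'error' handlers.
ee.on(errorMonitor, (value) => observed.push(value));

let caught;
try {
  ee.emit('error', 'connection refused');
} catch (err) {
  caught = err;
}

console.log(observed.length); // 1, the monitor ran first
console.log(caught.code); // ERR_UNHANDLED_ERROR
console.log(caught.context); // connection refused
```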

getEventListeners() and eventNames()

eventNames() returns an array of event names that have at least one listener. It uses Reflect.ownKeys() rather than Object.keys(), so it includes Symbol-typed event names. EventEmitter supports Symbols as event names, though they are uncommon in ordinary application code. Symbols are useful for private or non-colliding event names; a library can use Symbol('internal') as an event name that will not accidentally collide with user-defined event names.
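A small sketch of a Symbol-named event alongside a string-named one. The kInternal symbol is a hypothetical private event name:

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
// Hypothetical private event name for a library's internal signalling.
const kInternal = Symbol('internal');

ee.on('data', () => {});
ee.on(kInternal, () => {});

const names = ee.eventNames();
console.log(names.length); // 2
console.log(names.includes('data'), names.includes(kInternal)); // true true
```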

getEventListeners(emitter, event) is a static method on the events module, not an instance method. It returns a copy of the listeners array. The copy is intentional; modifying the returned array does not affect the emitter's internal state. This static method also works with EventTarget instances, making it a universal listener inspection tool.
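As a brief sketch, the helper can be used on both kinds of object. The handler and event names here are placeholders:

```javascript
const { EventEmitter, getEventListeners } = require('node:events');

const ee = new EventEmitter();
const handler = () => {};
ee.on('data', handler);

const copy = getEventListeners(ee, 'data');
copy.length = 0; // mutate the returned array only

console.log(ee.listenerCount('data')); // 1, emitter state is untouched

// The same helper accepts an EventTarget.
const target = new EventTarget();
target.addEventListener('ping', handler);
const targetListeners = getEventListeners(target, 'ping');
console.log(targetListeners.length); // 1
```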

listenerCount() exists in two forms: emitter.listenerCount(eventName) as an instance method, and the older static EventEmitter.listenerCount(emitter, eventName) kept for compatibility. Both return the number of listeners for a given event. The instance method is the clearer form for normal code. Internally, the count can be derived from _events[eventName] as 0, 1, or the array length.

captureRejections

Node added captureRejections in v13.4 and v12.16. When it is enabled, either per instance through the constructor option or globally through EventEmitter.captureRejections = true, EventEmitter treats non-nullish listener return values as possible thenables. If a listener returns a promise that rejects, the rejection is caught and routed through the emitter's rejection handling path.

const ee = new EventEmitter({ captureRejections: true });

ee.on('event', async () => {

throw new Error('async failure');

});

ee.on('error', (err) => {

console.log(err.message); // "async failure"

});

ee.emit('event');

The 'error' listener in that example does not run before emit('event') returns. emit() starts the async listener synchronously; rejection routing happens after the returned promise rejects.

Without captureRejections, the async listener returns a rejected promise that nobody awaits. It becomes an unhandled rejection: the process.on('unhandledRejection') handler fires, and depending on your Node version and flags, the process may terminate. With captureRejections, EventEmitter attaches a rejection handler to the returned thenable. The emitter can also define a Symbol.for('nodejs.rejection') method to customize how rejections are handled instead of routing them to 'error'.

Internally, after calling each listener, emit() looks at the return value. If captureRejections is enabled, the return value is not null or undefined, and the value behaves like a thenable, Node attaches .then(undefined, rejectionHandler). In current Node, that rejection handler schedules the actual routing with process.nextTick(). The routing function calls emitter[Symbol.for('nodejs.rejection')](err, type, ...args) if that method exists, or temporarily disables capture on the emitter and calls emitter.emit('error', err) as a fallback.
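The custom routing hook can be sketched as follows. The 'job' event and the routed array are illustrative, and captureRejectionSymbol is the events module's export of Symbol.for('nodejs.rejection'):

```javascript
const { EventEmitter, captureRejectionSymbol } = require('node:events');

const ee = new EventEmitter({ captureRejections: true });
const routed = [];

// Custom routing: receives the rejection reason, the event name, and the
// original emit() arguments, instead of the error going to 'error'.
ee[captureRejectionSymbol] = (err, event, ...args) => {
  routed.push({ message: err.message, event, args });
};

ee.on('job', async (id) => {
  throw new Error(`job ${id} failed`);
});

ee.emit('job', 42);
const routedSynchronously = routed.length;
console.log(routedSynchronously); // 0, routing has not happened yet

setImmediate(() => {
  console.log(JSON.stringify(routed));
  // [{"message":"job 42 failed","event":"job","args":[42]}]
});
```

Because no 'error' listener is registered here, the custom method is the only thing standing between the rejection and a thrown emit('error').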

This connects EventEmitter's synchronous dispatch model to async/await error flow without making dispatch itself asynchronous. The listener still starts synchronously because emit() calls it on the current stack. If the listener is an async function, it returns a promise immediately: the function body runs synchronously up to the first await, then the promise is returned. captureRejections attaches a rejection handler to that promise. The rejection is observed asynchronously, and Node routes the resulting error on a later turn of the scheduling machinery. The error routing to 'error' is therefore asynchronous relative to the original emit() call, even though the initial listener invocation was synchronous.

There is a performance cost to that bridge. When captureRejections is off, which is the default, listener return values do not need promise rejection plumbing. When it is on, non-nullish return values are treated as possible thenables, so Node has to inspect .then and attach a rejection handler when appropriate. For emitters that fire thousands of events per second, this check adds overhead. That is why it is opt-in.

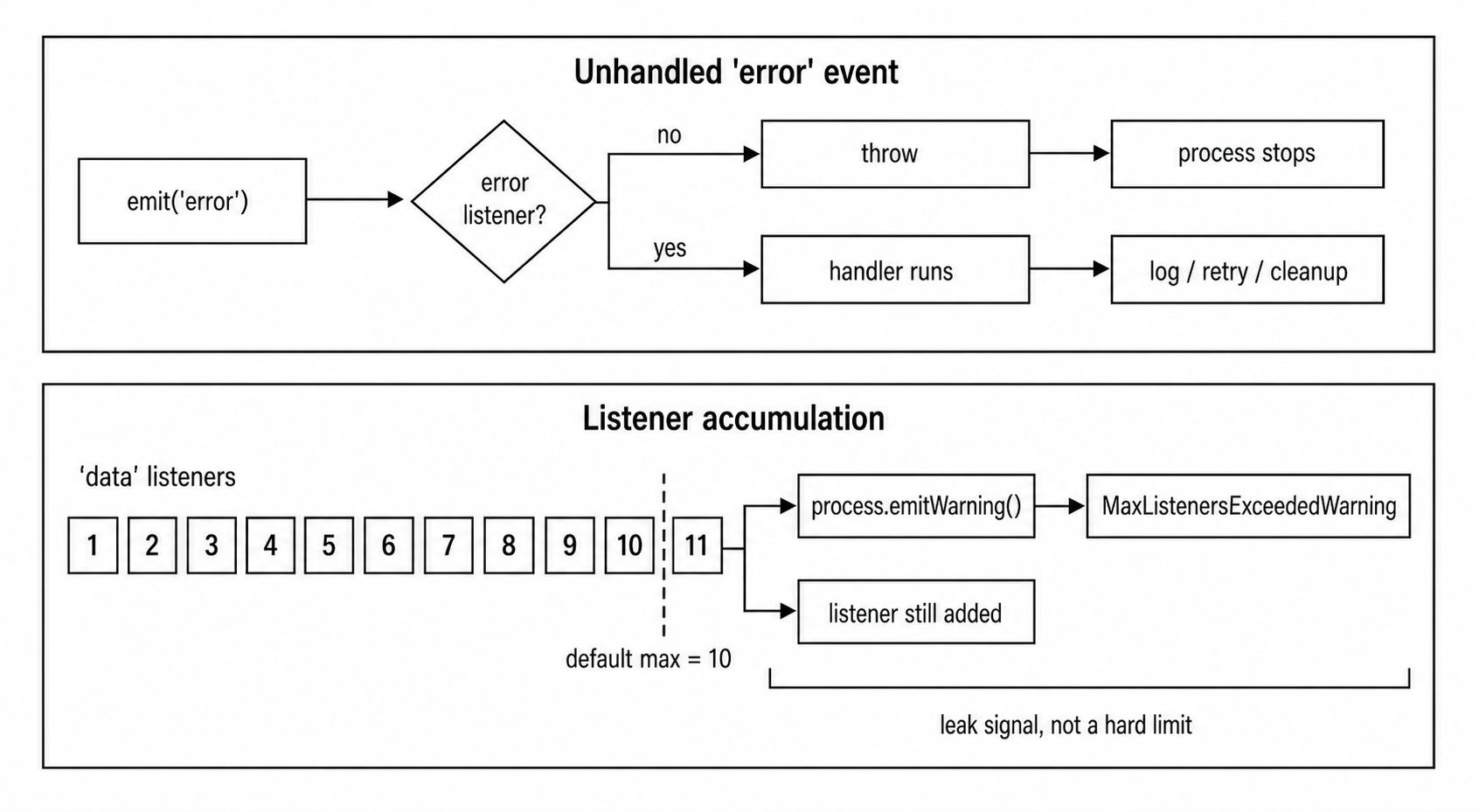

The Error Event Contract

The 'error' event has behavior that no other event name has. If you call emit('error', err) and there are no listeners for 'error', Node does not return false and continue. It throws. If the first argument is an Error instance, Node throws that directly. If the value is something else, Node throws an ERR_UNHANDLED_ERROR wrapper whose message includes the original value.

const ee = new EventEmitter();

ee.emit('error', new Error('boom'));

// Throws: Error: boom

// The process crashes if nothing catches this.

This design reflects a core principle in the runtime: silent I/O failures are worse than visible crashes. If a TCP server encounters a network error, you need to know about it. If a stream's underlying resource fails, you need to know. The 'error' event contract forces code to acknowledge those errors or accept a crash.

The thrown error propagates up the call stack from emit(). If emit() is wrapped in a try/catch, that catch block sees it. Otherwise, it becomes an uncaughtException (covered in Chapter 1). In most production code, the uncaughtException handler logs and exits.

The consequence is simple: attach an 'error' listener to any emitter whose API can emit operational errors, especially streams, sockets, servers, child processes, and long-lived custom emitters.

const net = require('node:net');

const server = net.createServer();

server.on('error', (err) => {

console.error('Server error:', err.message);

});

server.listen(0);

Without the error handler, a bind failure such as EADDRINUSE would be thrown from the emit('error', ...) call that Node makes after listen() fails to bind, and the process would crash. With the handler, the application can log and decide what to do: retry on a different port, wait and retry, or exit gracefully. The snippet uses listen(0) so Node asks the OS for an available port; application servers usually listen on a configured port instead.

Why This Design Exists

The error event contract exists because emitters are often tied to external resources: sockets, files, streams, child processes, TLS sessions. When those resources fail, ignoring the failure usually corrupts the surrounding application state. Connections hang, data gets dropped, and cleanup paths never run.

The crash-on-unhandled-error approach forces the issue. You either handle the error or the process stops. In development, an unhandled socket or stream error may be noisy, but it exposes a missing error path instead of letting the resource fail silently. In production, the handler is where code logs the error, cleans up resources, and decides whether the process can continue.

Most classes that extend EventEmitter and can encounter operational failures follow this contract: net.Server, net.Socket, http.Server, http.IncomingMessage, fs.ReadStream, child_process.ChildProcess, and tls.TLSSocket. They emit 'error' for failures the caller is expected to handle.

captureRejections and the Error Event

The error event also interacts with captureRejections. When an async listener rejects and captureRejections is on, the rejection flows through emit('error', rejectionReason). If there is no error listener, the same rule applies: the process crashes. Enabling captureRejections without an 'error' handler changes the crash path from "unhandled rejection" to "thrown error from emit('error')."

There is also a timing difference. Without captureRejections, the async listener's rejection is reported through the process unhandled-rejection path after the original emit() call has returned. With captureRejections, Node observes the rejected promise and schedules rejection routing back through the emitter. If there is no 'error' handler, that later emit('error') throws. The stack trace changes, and the crash still happens after the original event emission.

Domain Integration (Legacy)

Domains, through the node:domain module, can still alter EventEmitter error handling for domain-bound emitters. Domains are deprecated, but compatibility code remains in Node, so you may still see domain-related branches when reading source or old applications. New code should use normal 'error' listeners, structured cleanup, and process-level crash handling instead of domains.

Figure 7.12 — The two operational guardrails are visible in production: unhandled 'error' events fail loudly, and accumulating listeners surface as max-listener warnings before they become larger leaks.

Memory Leaks and maxListeners

The default maxListeners value is 10. When the 11th listener is added to one event name, Node prints a warning through process.emitWarning(). Representative output looks like this:

MaxListenersExceededWarning: Possible EventEmitter memory leak
detected. 11 data listeners added to [EventEmitter]. MaxListeners
is 10. Use emitter.setMaxListeners() to increase limit.

This is a warning, not an error. The listener still gets added, and the emitter still works. The warning exists because unbounded listener growth is one of the most common memory leaks in Node applications.

A common leak pattern:

function handleRequest(req, res) {
  db.on('change', () => { /* respond to change */ });
  // oops - never removes the listener
}

Every request adds a listener to the db emitter. After 1000 requests, the emitter has 1000 'change' listeners. After 100,000 requests, it has 100,000. Each listener holds a closure over req and res, so those objects cannot be garbage collected either. Memory grows linearly with request count. Every time db emits 'change', it calls all 100,000 listeners synchronously on the same tick. The application slows down before it runs out of memory.
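One possible repair for the pattern above is to tie the subscription to the response lifetime. The sketch below assumes db is a long-lived EventEmitter (a hypothetical stand-in here) and that res emits 'close' when the response finishes or the connection drops, as http.ServerResponse does.

```javascript
const EventEmitter = require('node:events');

// Hypothetical long-lived emitter standing in for the db object above.
const db = new EventEmitter();

function handleRequest(req, res) {
  // Keep a named reference so the same function can be removed later.
  const onChange = (row) => {
    /* respond to change */
  };
  db.on('change', onChange);

  // Release the subscription once the response is finished or aborted.
  res.once('close', () => db.off('change', onChange));
}
```

With this shape the listener count on db tracks the number of in-flight requests instead of the total number of requests served.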

The warning object has a stack, and running Node with --trace-warnings prints where the 11th listener was added. That stack trace is the starting point for finding the leak. The warning message includes the constructor name of the emitter and the event name, so you can see which emitter and which event are accumulating listeners.

The MaxListenersExceededWarning is emitted via process.emitWarning(). You can listen for it programmatically:

process.on('warning', (warning) => {
  if (warning.name === 'MaxListenersExceededWarning') {
    console.log(warning.emitter, warning.type, warning.count);
  }
});

The warning object has emitter (the EventEmitter instance), type (the event name), and count (the current listener count) properties. Those fields are enough for automated leak detection in production.
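To see those fields populated, the following sketch deliberately exceeds the default limit on a throwaway emitter. The seen array is illustrative; Node also prints the warning to stderr as usual.

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
const seen = [];

process.on('warning', (warning) => {
  if (warning.name === 'MaxListenersExceededWarning') {
    seen.push({ type: warning.type, count: warning.count });
  }
});

// Twelve listeners: the 11th crosses the default limit of 10.
for (let i = 0; i < 12; i++) {
  ee.on('data', () => {});
}

// The 'warning' event is delivered asynchronously, so check on a later turn.
setImmediate(() => {
  console.log(seen, ee.listenerCount('data'));
});
```

Only one entry is recorded, with a count of 11, even though twelve listeners end up registered: the 12th registration does not warn again.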

Controlling the Limit

When the listener count is expected to be higher, setMaxListeners(n) sets the limit per instance:

ee.setMaxListeners(20); // raise for this emitter
ee.setMaxListeners(0); // disable warning entirely
ee.setMaxListeners(Infinity); // same effect

EventEmitter.defaultMaxListeners is the global default. Changing it affects all emitters that have not called setMaxListeners(). Emitters created after the change inherit the new default. Emitters created before the change also use the new default, because the check reads EventEmitter.defaultMaxListeners at registration time, not at construction time.

EventEmitter.defaultMaxListeners = 20;

Node 15.4 added events.setMaxListeners(n, ...targets), which sets the limit on multiple emitters at once. Use it during process initialization for emitters that all need a higher limit:

const { setMaxListeners } = require('node:events');
setMaxListeners(50, server, db, cache, queue);

Current Node.js releases also provide events.getMaxListeners(emitter), which returns the effective limit for an emitter. If the instance has not called setMaxListeners(), it returns the current default. This static method works with both EventEmitter and EventTarget instances.
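The way the default is resolved can be checked directly. This sketch assumes a Node release that ships the static events.getMaxListeners(); the emitter names are illustrative, and the process-wide default is restored at the end so the example leaves no side effects.

```javascript
const events = require('node:events');
const { EventEmitter } = events;

const original = EventEmitter.defaultMaxListeners;

const before = new EventEmitter(); // created before the default changes
const pinned = new EventEmitter();
pinned.setMaxListeners(5); // explicit per-instance limit

EventEmitter.defaultMaxListeners = 20;
const after = new EventEmitter(); // created after the change

const limits = {
  before: events.getMaxListeners(before),
  pinned: events.getMaxListeners(pinned),
  after: events.getMaxListeners(after),
};

EventEmitter.defaultMaxListeners = original; // restore the process-wide default
console.log(limits); // { before: 20, pinned: 5, after: 20 }
```

Only the emitter with an explicit setMaxListeners() call keeps its own value; the other two follow the default regardless of when they were constructed.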

Legitimate High Listener Counts

Sometimes 10 listeners for one event name is genuinely too low. A process-level emitter might legitimately have more than 10 listeners for 'SIGTERM' if several subsystems register shutdown cleanup. A shared database connection pool might have 20+ listeners for 'error' if multiple modules subscribe independently. In those cases, raise the limit and move on.

The limit should still express an expectation. Setting maxListeners to Infinity as a band-aid hides potential leaks. A specific number that matches the expected listener count still catches growth beyond that expectation. If you expect 25 modules to listen for 'change' events, setting the limit to 30 is close enough to detect unexpected growth and high enough to avoid false warnings.

Detecting Leaks in Practice

The maxListeners warning is the first line of defense, but it only triggers once per event name on each emitter, so growth after the first warning is silent. If you need continuous monitoring, use listenerCount() in health checks or metrics:

setInterval(() => {
  const count = ee.listenerCount('data');
  if (count > 100) console.warn(`data listeners: ${count}`);
}, 60_000);

In production monitoring, exporting listener counts as metrics, such as Prometheus gauges, lets you graph listener growth over time. A listener count that only ever increases almost always indicates a leak. A stable count with occasional spikes during deployments or reconnections is normal.
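For metrics export, it can help to sample every event name at once instead of hard-coding one. The helper below is a hypothetical sketch built only on eventNames() and listenerCount(); how the resulting object is shipped to a metrics system is left out.

```javascript
const EventEmitter = require('node:events');

// Hypothetical helper: one listener count per registered event name.
function snapshotListenerCounts(emitter) {
  const counts = {};
  for (const name of emitter.eventNames()) {
    // Event names may be symbols, so convert them to strings for use as keys.
    counts[String(name)] = emitter.listenerCount(name);
  }
  return counts;
}

const ee = new EventEmitter();
ee.on('data', () => {});
ee.on('data', () => {});
ee.once('end', () => {});

console.log(snapshotListenerCounts(ee)); // { data: 2, end: 1 }
```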

Another common leak source is event forwarding. If an intermediary object forwards events from one emitter to another:

source.on('data', (chunk) => dest.emit('data', chunk));

and the intermediary is created per request while source persists, the same leak appears as unbounded listener growth on source. The fix is the same: remove the listener when the intermediary is done.
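A forwarding helper can return its own teardown so the intermediary never outlives its listener. The forward() function and the emitter names below are hypothetical and only illustrate the shape.

```javascript
const EventEmitter = require('node:events');

// Hypothetical helper: forward one event and hand back the teardown function.
function forward(source, dest, eventName) {
  const relay = (...args) => dest.emit(eventName, ...args);
  source.on(eventName, relay);
  return () => source.off(eventName, relay);
}

const source = new EventEmitter(); // long-lived
const dest = new EventEmitter(); // per-request intermediary
const received = [];
dest.on('data', (chunk) => received.push(chunk));

const stop = forward(source, dest, 'data');
source.emit('data', 'a');
stop(); // the request is done, so release the listener on source
source.emit('data', 'b'); // no longer forwarded

console.log(received, source.listenerCount('data')); // [ 'a' ] 0
```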

removeAllListeners and Cleanup

removeAllListeners() is the broad cleanup tool. With no arguments, it removes every listener from every event. With an event name argument, it removes all listeners for that specific event. If the emitter has a 'removeListener' observer, Node emits one notification for each listener being removed.
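The per-listener notifications can be observed with a 'removeListener' listener. This is a small sketch on a throwaway emitter; the event names 'a' and 'b' are arbitrary.

```javascript
const EventEmitter = require('node:events');

const ee = new EventEmitter();
const removed = [];

// The observer receives the event name and the listener that was removed.
ee.on('removeListener', (eventName) => removed.push(eventName));

ee.on('a', () => {});
ee.on('a', () => {});
ee.on('b', () => {});

ee.removeAllListeners('a'); // only the 'a' listeners
const afterNamed = [...removed];

ee.removeAllListeners(); // everything else, including the observer itself
console.log(afterNamed, removed, ee.eventNames());
```

The named call produces two notifications for 'a', and the argument-free call adds one for 'b' before the observer itself is dropped, leaving no registered event names.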

The cleanup pattern for short-lived subscriptions:

function subscribe(emitter) {
  const handler = (data) => handleUpdate(data);
  emitter.on('update', handler);
  return () => emitter.off('update', handler);
}

const unsub = subscribe(db);
// ... later
unsub();

Returning an unsubscribe function is a common pattern in Node code. It avoids keeping a reference to the handler function in a wider scope. The closure captures the handler, and calling the returned function removes it. The ownership is clear: the code that creates the subscription also receives the function that tears it down.

For once() listeners, cleanup is automatic only after the event fires. If the event never fires, the once() listener stays registered forever. This is another source of memory leaks: registering once() handlers for events that might never occur. A once('drain') on a writable stream that never fills its buffer, for instance, will sit there indefinitely.

Modern AbortSignal integration helps with this. events.once() and events.on() both accept a signal option. Aborting the signal cancels the waiting listener, preventing the leak. With once imported from node:events, a timeout wrapper looks like this:

async function waitWithTimeout(server) {
  const ac = new AbortController();
  const timeout = setTimeout(() => ac.abort(), 5000);
  try {
    await once(server, 'listening', { signal: ac.signal });
  } finally {
    clearTimeout(timeout);
  }
}

If the 'listening' event does not fire within 5 seconds, the AbortController aborts, the promise rejects with an AbortError, and the internal listener is cleaned up.
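The cleanup can be verified without a timer by aborting immediately. In this sketch the 'ready' event is never emitted, and the variable names are illustrative.

```javascript
const { EventEmitter, once } = require('node:events');

const ee = new EventEmitter();
const ac = new AbortController();

// 'ready' is never emitted in this sketch, so only the abort can settle it.
const waiting = once(ee, 'ready', { signal: ac.signal });
const whileWaiting = ee.listenerCount('ready');

ac.abort();

// Turn the rejection into a plain summary object for inspection.
const outcome = waiting.catch((err) => ({
  name: err.name,
  code: err.code,
  remaining: ee.listenerCount('ready'),
}));

outcome.then((result) => console.log(whileWaiting, result));
```

One listener is registered while the promise is pending, and none remain once the abort has rejected it.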

Async Context for Custom Emitters

Ordinary EventEmitter listeners run in the async context of the code that calls emit(). That is usually correct when a callback, timer, promise continuation, or stream implementation emits directly. It can be wrong for a custom emitter that represents work from another asynchronous resource, because diagnostics and AsyncLocalStorage may need a stable context for every listener fired by that emitter.

Node provides EventEmitterAsyncResource for that case:

const { EventEmitterAsyncResource } = require('node:events');

const bus = new EventEmitterAsyncResource({ name: 'JobBus' });
bus.on('done', () => { /* runs in bus async context */ });
bus.emit('done');
bus.emitDestroy();

EventEmitterAsyncResource extends EventEmitter, but its emit() runs listeners inside the async resource context owned by the emitter. Call emitDestroy() when that resource lifecycle is finished so async-hooks consumers see the teardown. Most application emitters do not need this class. It is useful when infrastructure code must preserve async context across custom event dispatch.
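The difference is easiest to see with AsyncLocalStorage. The sketch below assumes both emitters are created inside one store and emitted from another; the store values 'creation-context' and 'emit-context' are illustrative labels.

```javascript
const { AsyncLocalStorage } = require('node:async_hooks');
const { EventEmitter, EventEmitterAsyncResource } = require('node:events');

const als = new AsyncLocalStorage();
const seen = {};

// Create both emitters while 'creation-context' is the active store.
const { plain, bound } = als.run('creation-context', () => ({
  plain: new EventEmitter(),
  bound: new EventEmitterAsyncResource({ name: 'JobBus' }),
}));

plain.on('done', () => { seen.plain = als.getStore(); });
bound.on('done', () => { seen.bound = als.getStore(); });

// Emit from a different store.
als.run('emit-context', () => {
  plain.emit('done');
  bound.emit('done');
});
bound.emitDestroy();

console.log(seen);
```

The plain emitter's listener should observe the store of the code that called emit(), while the EventEmitterAsyncResource listener should observe the store that was active when the emitter was constructed.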

EventEmitter in the Async Pattern Landscape

Node-style completion callbacks, promises, and async/await usually represent a single completion. A callback conventionally fires once (covered in Chapter 7.1). A promise resolves or rejects once (covered in Chapter 7.2). An async function returns one result (covered in Chapter 7.3). EventEmitter breaks that shape. It can emit the same event name many times over a long-lived object lifecycle, and multiple listeners can react to each occurrence independently.

That makes EventEmitter fit ongoing, repeating state changes: new connections on a server, chunks arriving on a stream, file changes from a watcher, log lines from a child process. These are events in the original sense: things that happen repeatedly, at unpredictable times, and multiple consumers might care about each one.

Callbacks give one function a completion signal. Promises give one awaitable a resolution or rejection. EventEmitter gives many listeners repeated signals over time.

The tradeoff is lifecycle management. With a promise, you get a result and you are done. With EventEmitter, code has to decide when to start listening, when to stop, and what happens to listeners that are never removed. The memory leak section above is the concrete manifestation of that tradeoff.

When to Use Which

Choosing between these patterns comes down to cardinality and timing:

For one result with a clear completion point, use a promise or callback: database query, file read, HTTP request. You call a function, and you get one answer.

For one result whose timing is event-driven, use events.once() to wrap a one-time event as a promise: server startup, first connection, child-process exit.

For multiple push-based results over time, use EventEmitter with on(): stream data, socket messages, file changes. The producer pushes data to consumers as it arrives.

For multiple pull-based results over time, use an async iterator through events.on(). The use case is similar, but the consumer controls the pace. The next subchapter covers that pattern in depth.

EventEmitter underpins most built-in modules. Streams (covered in Chapter 3) extend EventEmitter. net.Server extends EventEmitter. http.Server extends net.Server, which extends EventEmitter. child_process.ChildProcess extends EventEmitter. fs.FSWatcher extends EventEmitter. The process object itself is an EventEmitter instance. Understanding EventEmitter internals means understanding the base behavior of nearly every I/O object in the runtime.

Bridging EventEmitter to Promises and Async Iteration

The events module provides two static functions that adapt EventEmitter's push-based model to promises and async iteration.

events.once(emitter, eventName) returns a promise that resolves when the event fires. The resolved value is an array of the arguments passed to emit(). It registers a once() listener internally, and also attaches an 'error' listener that causes the promise to reject if an error is emitted before the target event. The exception is when the target event is 'error' itself: events.once(emitter, 'error') treats 'error' as the event you asked for and resolves with the emitted arguments. When the success or error path settles the promise, the internal listeners are cleaned up. With once from node:events and net from node:net, the useful part is short:

const server = net.createServer();
const listening = once(server, 'listening');
server.listen(0);
await listening;
console.log('Server is ready on', server.address().port);
server.close();

This is the concise way to await a one-time event inside an async context. The 'listening' event passes no arguments, so the resolved array is empty; the address comes from server.address() after the event fires. Without events.once(), you would write the promise wrapper yourself and could easily miss the error case or forget to clean up the success listener when the error fires. events.once() handles both paths.

For events that pass arguments, the promise resolves to an array of those arguments. await once(server, 'connection') gives you [socket]. await once(child, 'exit') gives you [code, signal] for a child process. The array wrapping is consistent regardless of argument count.
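Both paths can be exercised on a plain emitter. In this sketch ee stands in for a child process or server, and the emitted values are made up for the example.

```javascript
const { EventEmitter, once } = require('node:events');

const ee = new EventEmitter(); // stand-in for a child process or server

// Success path: every emit() argument lands in the resolved array.
const exited = once(ee, 'exit');
ee.emit('exit', 0, null);

// Error path: an 'error' emitted while waiting rejects the promise.
const failed = once(ee, 'ready').catch((err) => err.message);
ee.emit('error', new Error('startup failed'));

Promise.all([exited, failed]).then(([args, message]) => {
  console.log(args, message, ee.eventNames());
});
```

The first promise resolves to [0, null], the second rejects with the emitted error, and no listeners remain on the emitter after either path settles.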

One ordering caveat is important when several events can fire in the same synchronous batch or the same process.nextTick() drain. Do not write sequential awaits if the second event may be emitted before the first await continuation runs:

const ready = once(ee, 'ready');
const opened = once(ee, 'opened');
startWork();
await Promise.all([ready, opened]);

Creating both promises first installs both listeners before startWork() can emit. A sequence like await once(ee, 'ready'); await once(ee, 'opened') can miss 'opened' if both events fire before promise continuations run.

events.on(emitter, eventName) returns an AsyncIterator. Each time the event fires, the iterator yields the arguments as an array. This connects EventEmitter to for await...of.

const { on } = require('node:events');

async function consume(stream) {
  for await (const [chunk] of on(stream, 'data')) {
    process.stdout.write(chunk);
  }
}

The async iterator buffers events that arrive between iterations and yields them in order. It runs indefinitely by default. You specify which events signal completion with the close option, or use an AbortSignal to cancel. If the emitter emits 'error', the iterator throws. On the Node v24 baseline, highWaterMark and lowWaterMark are the current option spellings; older highWatermark and lowWatermark spellings still work for compatibility. When the emitter supports pause() and resume(), those watermarks let the iterator apply backpressure to a growing event buffer.

The buffer is not free. By default, events.on() can buffer up to Number.MAX_SAFE_INTEGER events. That prevents immediate loss when the consumer is briefly busy, but a fast producer and a slow loop can still grow memory. Use close, an AbortSignal, or watermarks on pause/resume-capable emitters when the producer can outpace the consumer.
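A bounded loop using the close option might look like the following sketch. It assumes a Node release that supports close on events.on(), and uses 'end' as an illustrative completion event on a plain emitter.

```javascript
const { EventEmitter, on } = require('node:events');

async function collect(emitter) {
  const chunks = [];
  // 'end' is listed in close, so emitting it finishes the loop.
  for await (const [chunk] of on(emitter, 'data', { close: ['end'] })) {
    chunks.push(chunk);
  }
  return chunks;
}

const ee = new EventEmitter();
const collected = collect(ee); // the iterator's listeners are installed here

ee.emit('data', 'a');
ee.emit('data', 'b'); // buffered until the loop asks for it
ee.emit('end');

collected.then((chunks) => console.log(chunks, ee.listenerCount('data')));
```

Events emitted before 'end' are still delivered in order, and the iterator removes its listeners once the close event has fired.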

Both events.once() and events.on() accept an AbortSignal for cancellation. This is the recommended way to add timeouts or cancellation to event waiting:

async function waitForListening(server) {
  const ac = new AbortController();
  const timeout = setTimeout(() => ac.abort(), 10_000);
  try {
    await once(server, 'listening', { signal: ac.signal });
  } catch (err) {
    // Only the abort is a timeout; rethrow real failures such as EADDRINUSE.
    if (err.code !== 'ABORT_ERR') throw err;
    console.log('Timed out');
  } finally {
    clearTimeout(timeout);
  }
}

EventTarget: The Web Standard Alternative

The runtime also provides EventTarget, the browser-style event API. It follows the DOM EventTarget spec: addEventListener(), removeEventListener(), dispatchEvent(). Events are Event objects with a type property, not arbitrary argument lists.

EventTarget exists mainly for Web API compatibility. AbortController uses it. MessagePort uses it. The global WebSocket uses web-compatible event behavior. EventTarget dispatch works with Event objects, while EventEmitter passes raw arguments. For runtime-native code, EventEmitter remains the standard. EventTarget shows up where browser compatibility is the goal.

The key behavioral differences follow from those shapes. EventTarget's dispatchEvent() expects an Event object; EventEmitter's emit() accepts any arguments. EventTarget listener removal requires the exact same function reference and the same capture option; EventEmitter just needs the function reference. EventTarget has no 'error' event contract, so there is no special throw-on-unhandled behavior. EventTarget supports the { once: true } option on addEventListener(), similar to EventEmitter's once() but passed as an option rather than exposed as a separate method.

For most runtime-native work, EventEmitter is what you use. EventTarget is there for web-compatible APIs, and the two coexist without conflict. events.getEventListeners() and events.setMaxListeners() work with both types, providing a unified API surface for tooling that needs to inspect either kind of event source.
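The two shapes can be compared side by side. This sketch uses the global EventTarget and Event classes alongside an EventEmitter; the 'ping' event name and the calls array are illustrative.

```javascript
const { EventEmitter, getEventListeners } = require('node:events');

const target = new EventTarget();
const emitter = new EventEmitter();
const calls = [];

// EventTarget: listeners receive a single Event object.
target.addEventListener('ping', (event) => calls.push(`target:${event.type}`), { once: true });
// EventEmitter: listeners receive whatever arguments emit() was given.
emitter.once('ping', (a, b) => calls.push(`emitter:${a + b}`));

// getEventListeners() inspects both kinds of event source.
const registered = [
  getEventListeners(target, 'ping').length,
  getEventListeners(emitter, 'ping').length,
];

target.dispatchEvent(new Event('ping'));
target.dispatchEvent(new Event('ping')); // ignored: the once listener is gone
emitter.emit('ping', 1, 2);

console.log(registered, calls); // [ 1, 1 ] [ 'target:ping', 'emitter:3' ]
```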

The operational rule is simple: EventEmitter is not a background scheduler. It is a synchronous listener registry with a few contracts layered on top: 'error' must be handled, listener growth must be watched, and long-lived subscriptions must be cleaned up. Once those constraints are clear, streams, servers, sockets, child processes, and async-iterator adapters are easier to reason about because they all inherit the same basic event behavior.